The short answer is no, there is nothing to fear! But the Z80 has seen its life threatened during its 50-year lifespan.

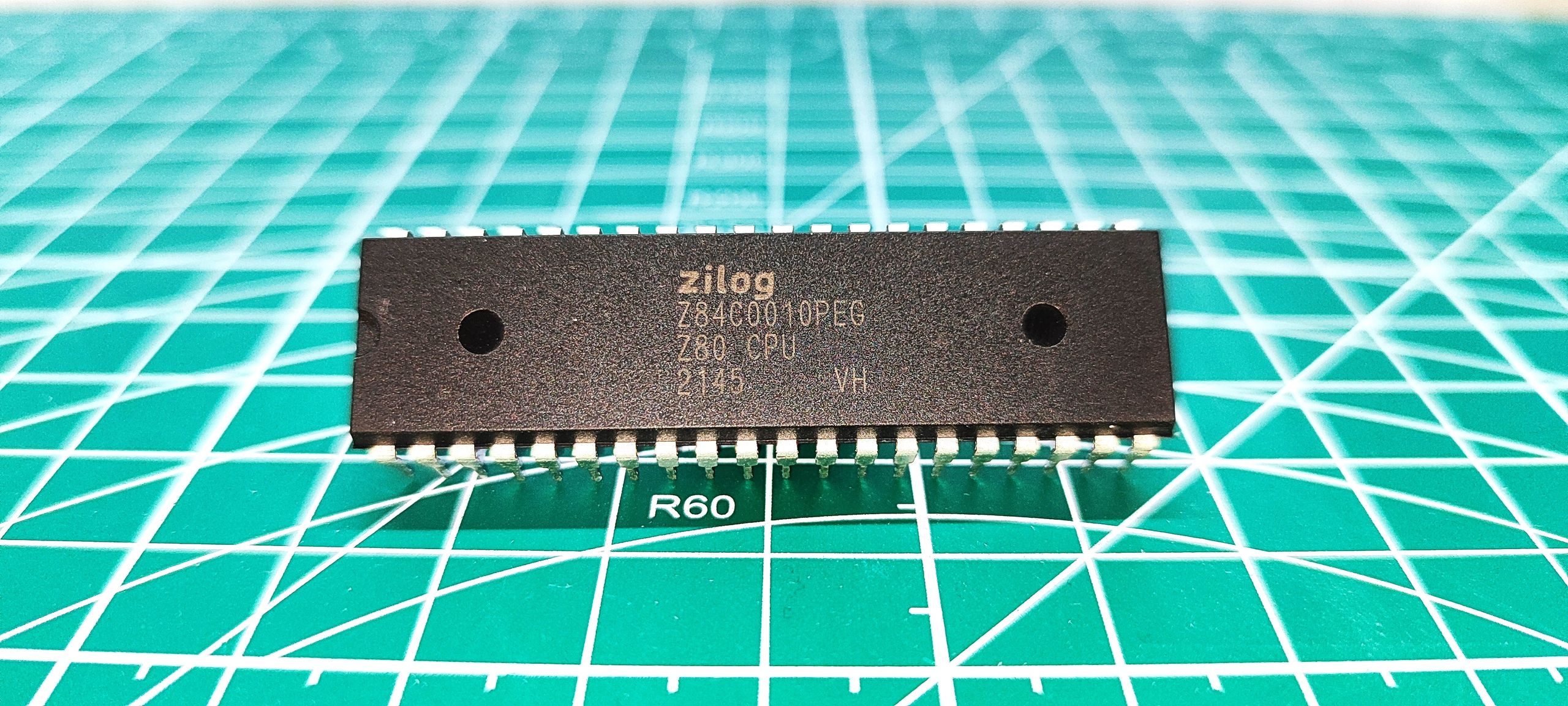

In 2024, the Z80 finally reached end of life/last time buy status according to a Product Change Notification (PCN) that we saw via Mouser. Dated April 15, 2024, Zilog advised customers that its "Wafer Foundry Manufacturer will be discontinuing support for the Z80 product…" But fear not, as back in May 2024, one developer was working on a drop-in replacement. Looking at Rejunity's Z80-Open-Silicon repository, we can see that did in fact happen via the Tiny Tapeout project.


Les Pounder is an associate editor at Tom's Hardware. He is a creative technologist and for seven years has created projects to educate and inspire minds both young and old. He has worked with the Raspberry Pi Foundation to write and deliver their teacher training program "Picademy".

Findecanor: That reminds me a lot of ELIZA from 1966, which also had somewhat more sophisticated responses. I'm sure it has been ported to Z80 machines at least once.

pjmelect: Findecanor said: "That reminds me a lot of ELIZA from 1966, which also had somewhat more sophisticated responses. I'm sure it has been ported to Z80 machines at least once." I used to run a version of ELIZA on my Z80 computer back in the 70s. It was a basic program of only a few K in size and "understood" about six words. At the time I was impressed by the program and added more words for it to understand. However, the added words did little to improve it and I soon gave up trying. It would be interesting to compare the two programs.

bit_user: The article said: "64kb of RAM". No, it's 64 kB of RAM. Capital B = bytes. Lower case b = bits. Especially when talking about both network speeds and DRAM, it's important to distinguish which you mean! The quoted examples are a little underwhelming. I guess it's doing something, but it seems borderline random. It would be interesting to use a quantum computer to optimize such a tiny model. You might get something a little more useful out of it, and the number of parameters is getting down to a level that QCs should be able to handle in the not-too-distant future.

dirtygarbageman: Dr Sbaitso from Creative Labs was replacing doctors in the 90s.

bit_user: dirtygarbageman said: "Dr Sbaitso from Creative Labs was replacing doctors in the 90s." Wow, that's a blast from the past! …or should I say a Sound Blast from the past? No, no… I guess I shouldn't. Nobody should. :D Do you remember the talking parrot? I recall putting that in the autoexec.bat to speak a greeting when the PC turned on.

dbssomewhere: The only reason so-called AI needs these vast amounts of resources is because it's not true intelligence, it's just rote "learning", and it still routinely bungles the application of all that knowledge to the actual problems it's asked to solve.

bit_user: dbssomewhere said: "The only reason so-called AI needs these vast amounts of resources is because it's not true intelligence, it's just rote 'learning', and it still routinely bungles the application of all that knowledge to the actual problems it's asked to solve." It takes children many years to learn all of the stuff they need to know, as well. One reason AI bungles stuff is that it's not just memorizing training data, but primarily looking for and learning patterns. Sometimes, it misidentifies or misapplies a pattern. Another deficiency it seems to have is that it needs to see the same information many times before it has a good grasp on it. With a human, you can tell them something very important or very surprising and they will probably only need to hear it once in order to remember it.

rE3e: Holy *&$!, I actually learned BASIC and assembly as a kid on Z80. I almost feel like dumpster diving my dad's attic. Well, it's my daughter's now... kinda.

dbssomewhere: bit_user said: "It takes children many years to learn all of the stuff they need to know, as well. One reason AI bungles stuff is that it's not just memorizing training data, but primarily looking for and learning patterns. Sometimes, it misidentifies or misapplies a pattern. Another deficiency it seems to have is that it needs to see the same information many times before it has a good grasp on it. With a human, you can tell them something very important or very surprising and they will probably only need to hear it once in order to remember it." Those things are true because it's rote learning, with all the innate problems of rote learning without true comprehension. First and foremost, it learns but it doesn't really understand, because it's not true intelligence. LLMs have knowledge, not intelligence, and the two are not synonymous. Which is good, because true artificial intelligence is not going to choose to remain subordinate to humans longer than suits its own needs.

bit_user: dbssomewhere said: "Those things are true because it's rote learning, with all the innate problems of rote learning without true comprehension."

I think the word "rote" is not accurate. It's not simply memorizing, word for word. The training dataset is probably hundreds or thousands of times too big for that, even in the commercial models. So, what happens is the network is forced to learn patterns, as a means of representational efficiency (think of it like data compression). That has the beneficial side effect that it can generalize information in the training set and apply it in different contexts.

What's more interesting is that there's no preprogrammed notion of what constitutes a pattern. So, for instance, it can learn the rules of a sonnet simply by being shown many examples of sonnets and being told that they're all sonnets. Then, if you ask it to generate a sonnet about subject XYZ, it can apply those rules and come up with something on the requested topic. There are other sorts of patterns it can pick up on, even more abstract than mere syntax and grammar, such as basic logic and reasoning. Here's where they start to run into some trouble, but the fact that they can do it at all is sort of amazing. So, I actually think that it's fair to say it learns concepts, as well as facts.

Not sure what definition of "comprehension" you're using. But I think most school teachers would say you understand something if you can explain it to someone else. AI can do that, for some set of stuff that it knows. To be certain that it's not just regurgitating something that it memorized, you can ask it to write a song about the topic or explain it in a poem.

dbssomewhere said: "First and foremost, it learns but it doesn't really understand, because it's not true intelligence."

Nobody thinks they're fully general intelligence yet. The term for that is AGI (Artificial General Intelligence). Many companies and research teams are racing to build an AGI, but nobody is there yet. The field of AI was created 70 years ago, at which time it was defined as being about creating systems that emulate aspects of human intelligence. Something doesn't have to be human-like in order to qualify as AI. It just has to do or behave in some way that's more complex than strictly algorithmic. Another example I like to give is that of animal cognition. Some people like to talk about certain animals or pets as intelligent, even though we all know they're not remotely close to humans in terms of cognitive performance. https://en.wikipedia.org/wiki/Animal_cognition


