Every chip in the stack has a defined job, from training trillion-parameter models to caching inference tokens on NVMe storage.


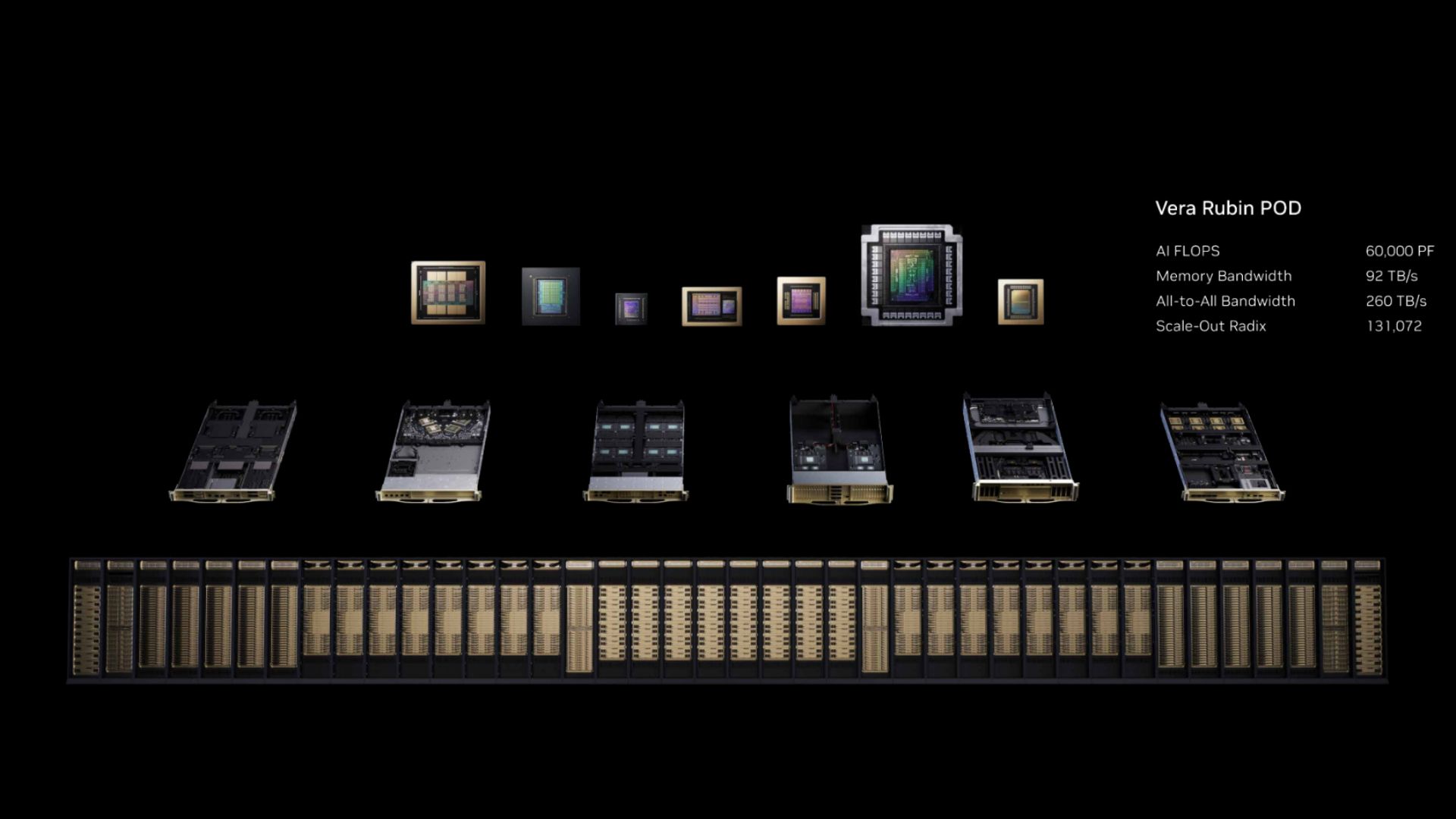

Nvidia announced seven chips in full production at GTC 2026 on Monday, composing the Vera Rubin platform that the company intends to ship in the second half of this year.

Rather than a single product launch, several announcements covered the full silicon stack required to build what Nvidia now calls an AI factory: GPUs, CPUs, a dedicated inference accelerator, networking ASICs, a data processing unit, and an Ethernet switch. All seven are designed to operate as a single co-designed system across five rack types, scaling from individual racks to 40-rack PODs delivering 60 exaflops of compute.

The so-called AI factory marks a shift in how Nvidia packages and sells its hardware: the unit of compute is no longer a GPU or even a server but the rack and, increasingly, the POD. Each of the seven chips fills a specific architectural role, and understanding what each does is the fastest route to understanding what Vera Rubin is.

The compute layer: Rubin GPU, Vera CPU, and Groq 3

Three chips handle the core compute workload, each optimized for a different phase of the AI pipeline.

The Rubin GPU is the training and inference workhorse, built on TSMC's 3nm process. Each GPU uses a dual-die design packing 336 billion transistors, carries 288 GB of HBM4 memory with 22 TB/s of bandwidth, and delivers 50 PFLOPS of inference compute and 35 PFLOPS of training compute in the NVFP4 format. Those figures represent improvements of 5 times and 3.5 times over Blackwell, respectively.

In the flagship Vera Rubin NVL72 rack, 72 Rubin GPUs connect via NVLink 6 to behave as a single accelerator. Nvidia claims that the NVL72 can train Mixture-of-Experts models with one quarter the GPU count required by Blackwell, and cut inference token costs by 10 times.
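The per-GPU figures quoted above can be rolled up to rack totals with simple multiplication. The sketch below assumes ideal linear scaling across all 72 GPUs, which is an illustrative assumption rather than a number Nvidia quoted; the names `RubinGPU`, `rack_totals`, and `implied_baseline_pflops` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RubinGPU:
    """Per-GPU figures as quoted in the article."""
    hbm4_gb: float = 288.0
    hbm4_bw_tbs: float = 22.0
    inference_pflops: float = 50.0  # NVFP4
    training_pflops: float = 35.0   # NVFP4


def rack_totals(gpu: RubinGPU, gpu_count: int = 72) -> dict:
    """Aggregate per-GPU specs, assuming ideal linear scaling (an assumption)."""
    return {
        "hbm4_tb": gpu.hbm4_gb * gpu_count / 1000,
        "hbm4_bw_tbs": gpu.hbm4_bw_tbs * gpu_count,
        "inference_eflops": gpu.inference_pflops * gpu_count / 1000,
        "training_eflops": gpu.training_pflops * gpu_count / 1000,
    }


def implied_baseline_pflops(rubin_pflops: float, speedup: float) -> float:
    """Back out the Blackwell figure implied by a quoted speedup."""
    return rubin_pflops / speedup


if __name__ == "__main__":
    for name, value in rack_totals(RubinGPU()).items():
        print(f"{name}: {value:.3f}")
    print("implied Blackwell inference PFLOPS:", implied_baseline_pflops(50.0, 5.0))
    print("implied Blackwell training PFLOPS:", implied_baseline_pflops(35.0, 3.5))
```

Under that assumption, both quoted speedups imply the same Blackwell baseline of 10 PFLOPS per GPU, which is a quick consistency check on the article's numbers.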

The Vera CPU, meanwhile, is Nvidia's first data center CPU built from the ground up. It uses 88 custom Arm-based Olympus cores with Spatial Multithreading for 176 threads, up to 1.5 TB of SOCAMM LPDDR5X memory, and 1.2 TB/s of memory bandwidth. Vera connects to Rubin GPUs via NVLink-C2C at 1.8 TB/s of coherent bandwidth, which is seven times faster than PCIe Gen 6. Its role in the rack is orchestration: scheduling workloads, routing KV cache data, managing context, and running the control plane for agentic AI workflows. It also handles reinforcement learning environments and CPU-native workloads.
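The thread count and the "seven times" comparison can both be sanity-checked with a few lines of arithmetic. The sketch below assumes the comparison is against a PCIe 6.0 x16 link counted in both directions at its raw 64 GT/s per lane rate, before protocol overhead; the article does not state which PCIe configuration was used, so treat that as an assumption. The function names are hypothetical.

```python
PCIE6_GT_PER_LANE = 64   # raw PCIe 6.0 transfer rate per lane, in GT/s
PCIE_LANES = 16          # assumed x16 link (not stated in the article)


def pcie6_x16_bidirectional_gbs() -> float:
    """Raw bidirectional bandwidth of a PCIe 6.0 x16 link in GB/s."""
    per_direction = PCIE6_GT_PER_LANE * PCIE_LANES / 8  # bits to bytes
    return per_direction * 2


def threads_per_core(threads: int, cores: int) -> float:
    """Hardware threads per core implied by the quoted totals."""
    return threads / cores


if __name__ == "__main__":
    nvlink_c2c_gbs = 1800.0  # 1.8 TB/s, as quoted
    pcie_gbs = pcie6_x16_bidirectional_gbs()
    print("threads per core:", threads_per_core(176, 88))
    print("PCIe 6.0 x16 bidirectional GB/s:", pcie_gbs)
    print(f"NVLink-C2C / PCIe ratio: {nvlink_c2c_gbs / pcie_gbs:.2f}")
```

With those assumptions the ratio comes out just above 7, consistent with the figure in the text.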

The Groq 3 LPU, purpose-built for low-latency decode-phase inference, is the most unexpected addition to the platform and a direct product of Nvidia's $20 billion acquisition of Groq in December. Where Rubin GPUs offer massive memory capacity through HBM4, the Groq 3 trades capacity for bandwidth: each LPU carries roughly 500 MB of stacked SRAM and delivers approximately 80 TB/s of bandwidth per chip. The Groq 3 LPX rack houses 256 LPUs with about 128 GB of aggregate on-chip SRAM and 640 TB/s of scale-up bandwidth.
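One way to see the capacity-for-bandwidth trade is to ask how many times per second each chip could, in principle, read its entire memory. The sketch below uses only the approximate figures quoted above; the metric itself and the names `aggregate_sram_gb` and `full_sweeps_per_second` are illustrative choices, not something Nvidia published.

```python
def aggregate_sram_gb(lpu_count: int, sram_mb_per_lpu: float) -> float:
    """Total on-chip SRAM across a rack, in GB (decimal units)."""
    return lpu_count * sram_mb_per_lpu / 1000


def full_sweeps_per_second(bandwidth_tbs: float, capacity_gb: float) -> float:
    """How many times per second the whole memory could be read end to end."""
    return bandwidth_tbs * 1000 / capacity_gb


if __name__ == "__main__":
    # 256 LPUs at roughly 500 MB each, per the article.
    print("LPX aggregate SRAM (GB):", aggregate_sram_gb(256, 500))

    rubin = full_sweeps_per_second(bandwidth_tbs=22, capacity_gb=288)
    groq = full_sweeps_per_second(bandwidth_tbs=80, capacity_gb=0.5)
    print(f"Rubin GPU sweeps/s: {rubin:.1f}")
    print(f"Groq 3 LPU sweeps/s: {groq:.0f}")
    print(f"ratio: {groq / rubin:.0f}x")
```

The rack total matches the quoted 128 GB, and the per-chip ratio shows how far the LPU leans toward bandwidth: a small memory that can be re-read thousands of times more often than the GPU's HBM4.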

Rubin GPUs handle the compute-heavy prefill phase of inference, processing long input contexts, with the Groq 3 LPUs stepping in to handle the decode phase, generating output tokens at low latency. Nvidia claims the combination delivers 35 times higher inference throughput per megawatt and 10 times more revenue opportunity for trillion-parameter models, compared with running both phases on GPUs alone.
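A rough roofline-style estimate shows why the two phases suit different hardware. The sketch below rests on several simplifying assumptions that are not from the article: about 2 FLOPs per parameter per token, 4-bit weights (0.5 bytes per parameter), weights read once per forward pass, and no accounting for attention or KV cache traffic. The function names are hypothetical.

```python
def machine_balance(peak_pflops: float, bandwidth_tbs: float) -> float:
    """FLOPs the chip can perform per byte it can fetch from memory."""
    return peak_pflops * 1e15 / (bandwidth_tbs * 1e12)


def arithmetic_intensity(tokens_per_pass: int, bytes_per_param: float = 0.5) -> float:
    """FLOPs per weight byte, assuming ~2 FLOPs per parameter per token."""
    return 2 * tokens_per_pass / bytes_per_param


def is_compute_bound(tokens_per_pass: int, balance: float,
                     bytes_per_param: float = 0.5) -> bool:
    """True when the workload's intensity meets or exceeds the machine balance."""
    return arithmetic_intensity(tokens_per_pass, bytes_per_param) >= balance


if __name__ == "__main__":
    # Rubin GPU figures as quoted: 50 PFLOPS (NVFP4 inference), 22 TB/s HBM4.
    balance = machine_balance(50, 22)
    print(f"Rubin machine balance: {balance:.0f} FLOPs/byte")

    # Prefill: a long prompt is processed in one pass (8192 tokens is a made-up example).
    print("prefill compute-bound:", is_compute_bound(8192, balance))

    # Decode: one new token per pass for a single sequence.
    print("decode compute-bound:", is_compute_bound(1, balance))
```

Under these assumptions, prefill over a long prompt lands well above the GPU's balance point and is limited by compute, while single-sequence decode sits far below it and is limited by memory bandwidth, which is the phase the SRAM-heavy LPU targets.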

Moving data between chips at rack scale, and between racks at cluster scale, falls to three dedicated networking ASICs.
