
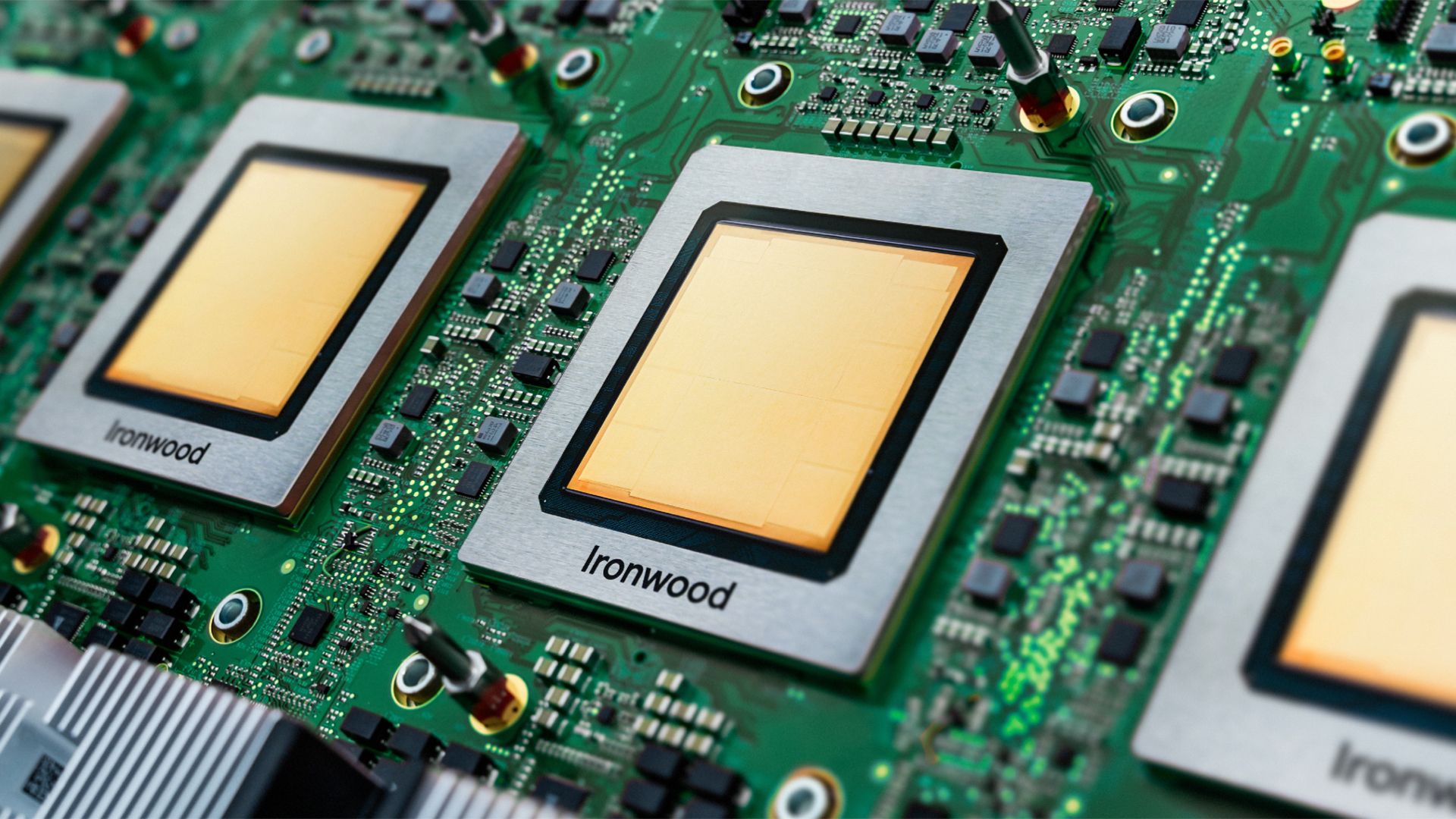

(Image credit: Google)

Today, Google Cloud introduced new AI-oriented instances powered by its own Axion CPUs and Ironwood TPUs. The new instances are aimed at both training and low-latency inference of large-scale AI models. Their key feature is efficient scaling of AI models, enabled by the very large scale-up world size of Google's Ironwood-based systems.

Ironwood is Google's seventh-generation tensor processing unit (TPU). It delivers 4,614 FP8 TFLOPS of performance and is equipped with 192 GB of HBM3E memory offering bandwidth of up to 7.37 TB/s. Ironwood pods scale up to 9,216 AI accelerators, delivering a total of 42.5 FP8 ExaFLOPS for training and inference, which far exceeds the FP8 capability of Nvidia's GB300 NVL72 system at 0.36 ExaFLOPS. The pod is interconnected using a proprietary 9.6 Tb/s Inter-Chip Interconnect network and carries roughly 1.77 PB of HBM3E memory in total, once again exceeding what Nvidia's competing platform can offer.
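The pod-level totals follow directly from the per-chip figures quoted above. As a rough check, here is a minimal Python sketch of that arithmetic. The constant and function names are illustrative only, and decimal units (1 ExaFLOPS = 1,000,000 TFLOPS, 1 PB = 1,000,000 GB) are an assumption about how the totals were rounded.

```python
# Figures as quoted in the article; names are illustrative, not Google's.
CHIPS_PER_POD = 9_216
FP8_TFLOPS_PER_CHIP = 4_614
HBM_GB_PER_CHIP = 192
GB300_NVL72_FP8_EXAFLOPS = 0.36


def pod_totals(chips=CHIPS_PER_POD,
               tflops_per_chip=FP8_TFLOPS_PER_CHIP,
               hbm_gb_per_chip=HBM_GB_PER_CHIP):
    """Return (FP8 ExaFLOPS, HBM capacity in PB) for a full pod.

    Assumes decimal prefixes: 1 ExaFLOPS = 1e6 TFLOPS, 1 PB = 1e6 GB.
    """
    exaflops = chips * tflops_per_chip / 1e6
    hbm_pb = chips * hbm_gb_per_chip / 1e6
    return exaflops, hbm_pb


exaflops, hbm_pb = pod_totals()
ratio = exaflops / GB300_NVL72_FP8_EXAFLOPS

print(f"Pod FP8 compute: {exaflops:.1f} ExaFLOPS")
print(f"Pod HBM3E capacity: {hbm_pb:.2f} PB")
print(f"Ratio to GB300 NVL72 (as quoted): {ratio:.0f}x")
```

Both totals round to the quoted 42.5 ExaFLOPS and 1.77 PB. The ratio compares whole systems as quoted, a 9,216-chip pod against a 72-GPU rack, so it is not a per-chip comparison.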

Ironwood pods, based on Axion CPUs and Ironwood TPUs, can be joined into clusters running hundreds of thousands of TPUs, which form part of Google's aptly named AI Hypercomputer. This is an integrated supercomputing platform uniting compute, storage, and networking under one management layer. To boost the reliability of both ultra-large pods and the AI Hypercomputer, Google uses its reconfigurable fabric, named Optical Circuit Switching, which instantly routes around any hardware interruption to sustain continuous operation.

IDC data credits the AI Hypercomputer model with an average 353% three-year ROI, 28% lower IT spending, and 55% higher operational efficiency for enterprise customers.

Several companies are already adopting Google's Ironwood-based platform. Anthropic plans to use as many as one million TPUs to operate and expand its Claude model family, citing major cost-to-performance gains. Lightricks has also begun deploying Ironwood to train and serve its LTX-2 multimodal system.

Although AI accelerators like Google's Ironwood tend to steal all the thunder in the AI era of computing, CPUs are still crucially important for application logic and service hosting, as well as for running some AI workloads, such as data ingestion. So, along with its seventh-generation TPUs, Google is also deploying its first Armv9-based general-purpose processors, named Axion.

Google has not published the full die specifications for its Axion CPUs: there is no confirmed core count per die (beyond the up to 96 vCPUs and up to 768 GB of DDR5 memory offered by the C4A Metal instance), no disclosed clock speeds, and no publicly detailed process node for the part. What we do know is that Axion is built around the Arm Neoverse V2 platform and is designed to offer up to 50% greater performance and up to 60% higher energy efficiency compared to modern x86 CPUs, as well as 30% higher performance than 'the fastest general-purpose Arm-based instances available in the cloud today.' There are reports that the CPU offers 2 MB of private L2 cache per core and 80 MB of L3 cache, and that it supports DDR5-5600 memory and Uniform Memory Access (UMA) for nodes.



