Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.

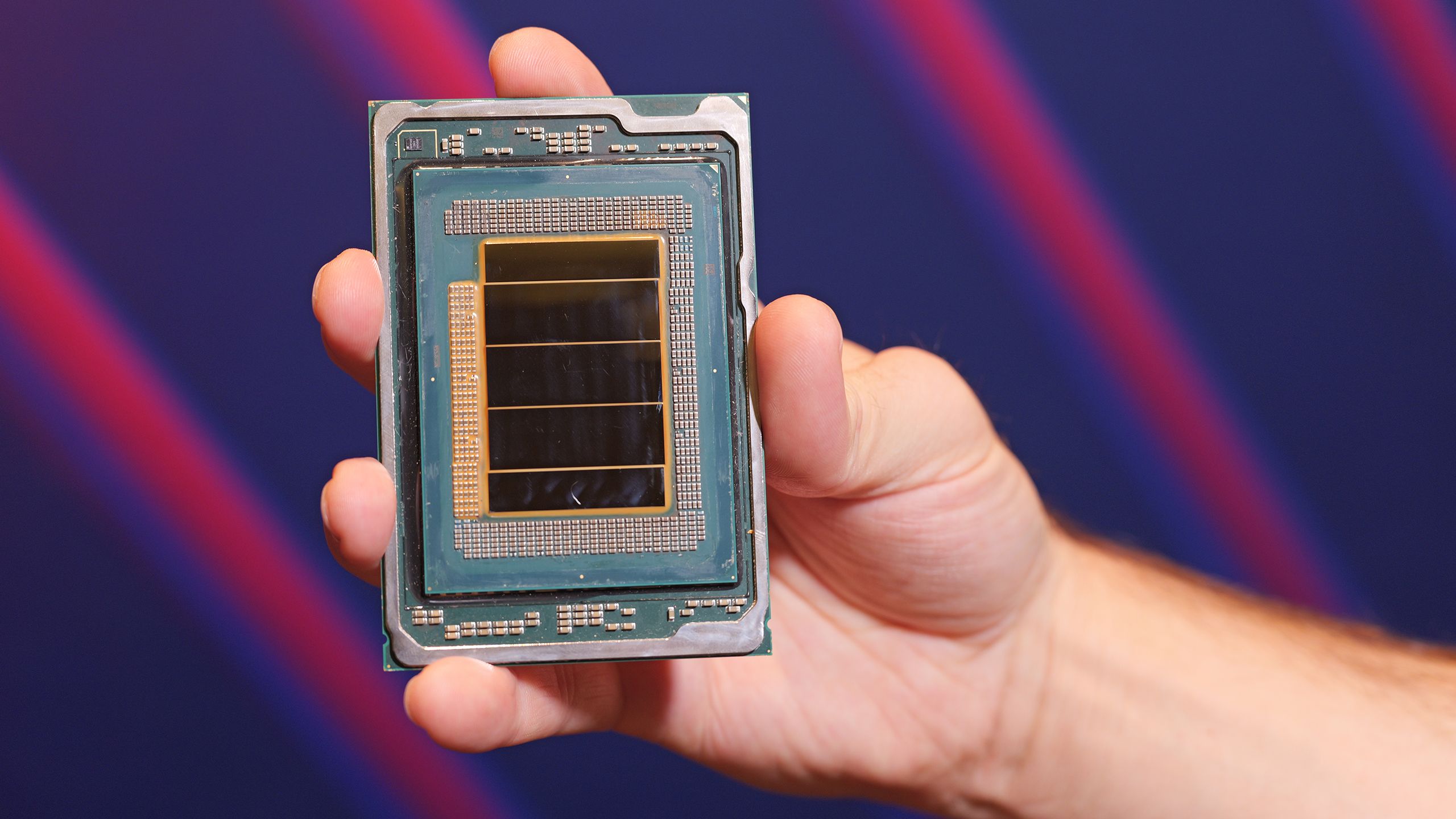

bit_user Last things first:

The article said: "Systems based on Intel's Xeon 6+ processors will be available later this year."

Okay, so not a launch, but rather just a formal announcement.

The article said: "From a cache hierarchy standpoint, the design groups cores into four-core blocks that share approximately 4 MB of L2 cache per block. As a result, the aggregate last-level cache across the full package surpasses 1 GB"

"As a result"?? No, 4 MB per 4 cores = 1 MB per core. So, the total amount of L2 cache is just 288 MB. I have seen that it has 1152 MB of last-level cache, but the way it's written makes it sound like you're just talking about L2.

The article said: "Intel aims its Xeon 6+ 'Clearwater Forest' processors primarily for telecom, cloud, and edge AI workloads as they feature Advanced Matrix Extensions (AMX)"

Wait, this has AMX?? Or is it just the Diamond Rapids members of the family that would have that? I'd be rather surprised if Clearwater Forest has AMX, since that adds a non-trivial amount of die area per core. Also, what about AVX-512?
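The cache arithmetic in the comment above can be checked with a short sketch. This is only an illustration: the 288-core count is an assumption inferred from the commenter's 288 MB total, the 1152 MB last-level figure is the one the commenter recalls seeing, and the function and constant names are made up for this example.

```python
# Figures come from the comment above; all names here are illustrative.
CORES = 288              # assumed core count, implied by the 288 MB L2 total
CORES_PER_BLOCK = 4      # "four-core blocks" per the quoted article text
L2_PER_BLOCK_MB = 4      # "approximately 4 MB of L2 cache per block"
REPORTED_LLC_MB = 1152   # last-level cache figure the commenter recalls


def total_l2_mb(cores: int, cores_per_block: int, l2_per_block_mb: int) -> int:
    """Aggregate L2 across the package, in MB."""
    blocks = cores // cores_per_block
    return blocks * l2_per_block_mb


l2_total = total_l2_mb(CORES, CORES_PER_BLOCK, L2_PER_BLOCK_MB)
l2_per_core = L2_PER_BLOCK_MB / CORES_PER_BLOCK

print(f"L2 per core: {l2_per_core} MB")
print(f"Total L2: {l2_total} MB")
print(f"Reported last-level cache: {REPORTED_LLC_MB / 1024} GB")
```

Under these assumptions the L2 total stays well below 1 GB, so the "surpasses 1 GB" figure would have to refer to a different cache level than the per-block L2.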

bit_user So, reviewing what they've previously disclosed about it, I see that mentions of AMX, AVX-512, and AVX10 are conspicuously absent. https://www.tomshardware.com/desktops/servers/intel-reveals-288-core-xeon Another downside is that it's still PCIe 5.0-based (and CXL 2.0). By contrast, AMD's Venice, probably launching around the same time, is moving up to PCIe 6.0 / CXL 3.0. Here are some other juicy tidbits, from the above article (17% IPC boost on SPECint!):

abufrejoval Funny how that piece of exciting news is so utterly boring to me, not just as a consumer, but also as a former technical architect. The scale you need to make these worth having is so far beyond anything a technically minded individual might be responsible for that it can really only appeal to bean-counters, or perhaps a very abstract mind. I've thrived on hands-on work and the confidence I gained from trying to break things. Breaking these would be way above my pay grade, so you'd have to rely on blind faith. That tends to cause bigger messes, not guarantee fewer.

abufrejoval bit_user said: "So, reviewing what they've previously disclosed about it, I see that mentions of AMX, AVX-512, and AVX10 are conspicuously absent." These aren't meant to replace P-core Xeons, but front-end ARMs. I'd have been surprised to see AMX there; I'm not even sure there is much of any floating-point in front-ends. Quite honestly, it's the kind of workload I'd have expected to move into network ASICs as IP blocks, but that's a hosting customer decision, and I'm not sure which hyperscalers expose programmable fabrics to customers today.

abufrejoval I am a bit sceptical when it comes to this gigantism. On one hand, even much bigger chips are never likely to meet everyone's scale-up requirements, so scale-out needs to be part of the design anyway. When you do scale out, putting more eggs in one basket creates a conflict between the cost of consolidation and the cost of administering more instances. Finding that sweet spot won't be easy, and you may need to maintain it across several generations outside a physical server, since complete slash-and-burn or green-field deployments aren't normal beyond the first build-out. Well, good thing that's no longer my job!

bit_user abufrejoval said: "These aren't meant to replace P-core Xeons, but front-end ARMs." The article pretty clearly indicated they had it, which is why I was searching for confirmation. abufrejoval said: "not even sure there is much of any floating-point in front-ends." Yes, there very much is! Vector/FP is the area where Skymont improved the most over Crestmont/Gracemont, and even those had AVX2.

bit_user abufrejoval said: "I am a bit sceptical when it comes to this gigantism. On one hand, even much bigger chips are never likely to meet everyone's scale-up requirements, so scale-out needs to be part of the design anyway." I think that's why mainstream servers have only 2 sockets. The main benefit of more cores/server is that you get higher density and it requires less infrastructure per core. That seems to be the main argument behind scaling up core counts per (server) CPU.

thestryker Darkmont, based on slides, appears to be identical to Skymont for the most part. Seems like maybe some additional math instruction. Videocardz has the slide deck: https://videocardz.com/newz/intel-announces-xeon-6-clearwater-forest-at-mwc-2026-core-200k-likely-the-next-plus

bit_user thestryker said: "Darkmont, based on slides, appears to be identical to Skymont for the most part. Seems like maybe some additional math instruction." Oh, good point. I see now that Skymont also has a 416-entry OoO window, so they must've been comparing Darkmont to Gracemont. Chips & Cheese's Skymont details also align with the Darkmont slide's claims about increases in load & store AGUs. https://chipsandcheese.com/p/skymont-intels-e-cores-reach-for-the-sky

thestryker bit_user said: "The main benefit of more cores/server is that you get higher density and it requires less infrastructure per core. That seems to be the main argument behind scaling up core counts per (server) CPU." These are as close to perfect as it gets for the communications edge, which is probably why the announcement came at MWC. Between the density and accelerators, I'd imagine these will be hard to beat outside of bespoke solutions.
