
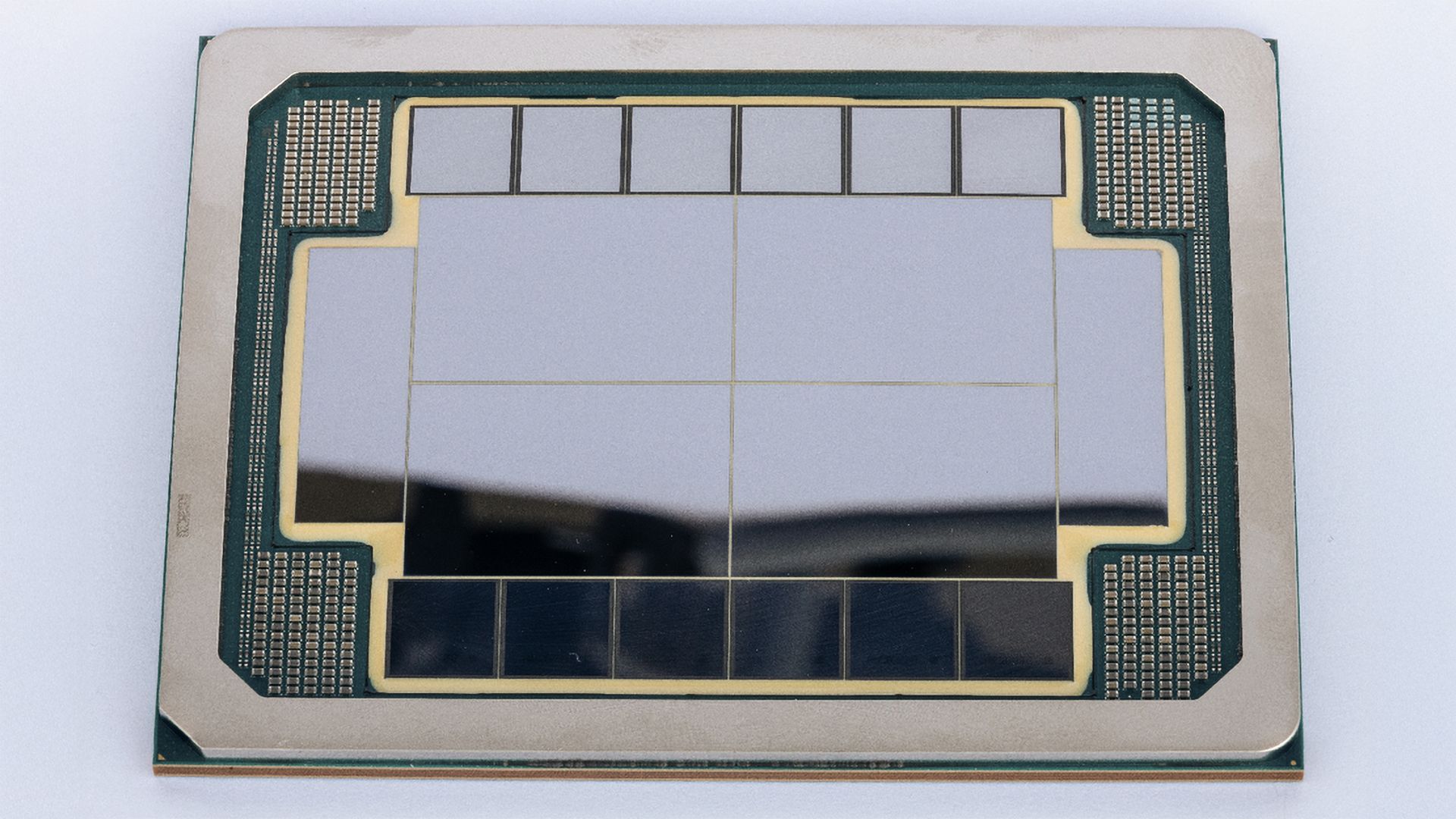

(Image credit: Intel)

Intel Foundry this week released a promotional document detailing its leading-edge front-end and back-end offerings for AI and HPC applications, and showcased an 'AI chip test vehicle' that demonstrates the company's current packaging capabilities. Those capabilities are impressive: the test vehicle is an 8x reticle-sized system-in-package (SiP) that features four logic tiles, 12 HBM4-class stacks, and two I/O tiles. More importantly, unlike the massive concept with 16 logic tiles and 24 HBM5 stacks that the company presented last month, this one can actually be manufactured today.
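For a sense of scale, the following sketch converts "8x reticle-sized" into an approximate silicon area. It assumes the standard 26 mm × 33 mm (858 mm²) lithography reticle field, which the article does not state, and treats the multiple as a plain area multiplier. Real packages also include spacing and non-silicon area, so treat the result as an order-of-magnitude estimate rather than Intel's actual package dimensions.

```python
# Assumption: the common 26 mm x 33 mm reticle field limit. The article does
# not say which reticle dimensions Intel uses for its "8x" figure.
RETICLE_W_MM = 26.0
RETICLE_H_MM = 33.0

# Tile counts as reported for the test vehicle.
tiles = {"logic": 4, "hbm4_class_stack": 12, "io": 2}


def reticle_area_mm2(w_mm: float = RETICLE_W_MM, h_mm: float = RETICLE_H_MM) -> float:
    """Area of one full reticle field in mm^2."""
    return w_mm * h_mm


def package_area_mm2(multiple: float) -> float:
    """Rough silicon-area equivalent of an N-times-reticle package in mm^2."""
    return multiple * reticle_area_mm2()


if __name__ == "__main__":
    print(f"One reticle: {reticle_area_mm2():.0f} mm^2")    # 858 mm^2
    print(f"8x reticle:  {package_area_mm2(8):.0f} mm^2")   # 6864 mm^2
    print(f"Total tiles: {sum(tiles.values())}")             # 18
```

Under these assumptions, the test vehicle corresponds to roughly 6,900 mm² of reticle-equivalent area spread across 18 tiles.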

First and foremost, note that what Intel Foundry is showcasing is not a working AI accelerator but an 'AI chip test vehicle' that shows how future AI and HPC processors can be physically built, or rather assembled. In effect, the company is demonstrating its entire construction method, which combines large compute tiles, stacks of high-bandwidth memory, ultra-fast chip-to-chip links, and a new class of power delivery in one manufacturable package. This package differs significantly from what TSMC offers today (more on this later). In short, the test vehicle shows that next-generation heavy-duty AI processors will be multi-chiplet designs, and that Intel Foundry can build them.

At the heart of this platform are four large logic tiles, allegedly built on Intel 18A process technology (and hence featuring RibbonFET gate-all-around transistors and PowerVia backside power delivery), that are flanked by HBM4-class memory stacks and I/O tiles and presumably stitched together with EMIB-T 2.5D bridges embedded directly in the package substrate. EMIB-T adds through-silicon vias (TSVs) inside the bridges so that power and signals can pass vertically as well as laterally, which maximizes interconnect density and improves power delivery. The platform appears to be designed for UCIe die-to-die interfaces running at 32 GT/s and beyond, which also seem to be used to attach the memory, presumably C-HBM4E stacks.
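To put the 32 GT/s figure in context, here is a back-of-the-envelope estimate of raw bandwidth for a single UCIe module. It assumes the UCIe module widths of 16 data lanes for standard packaging and 64 for advanced (bridge-based) packaging, one bit per lane per transfer, and no protocol overhead. The article does not say how many modules or lanes Intel's tiles use, so these numbers describe one module, not the test vehicle as a whole.

```python
# Transfer rate cited in the article; each lane carries one bit per transfer.
GT_PER_S = 32

# Assumption: per-module data-lane counts for UCIe standard and advanced
# packaging. Intel's actual lane and module counts are not disclosed here.
LANES = {"standard": 16, "advanced": 64}


def module_bandwidth_gbytes(lanes: int, gt_per_s: float = GT_PER_S) -> float:
    """Raw unidirectional bandwidth of one module in GB/s, ignoring overhead."""
    return lanes * gt_per_s / 8  # bits -> bytes


if __name__ == "__main__":
    for name, lanes in LANES.items():
        # standard -> 64 GB/s, advanced -> 256 GB/s per direction
        print(f"{name}: {module_bandwidth_gbytes(lanes):.0f} GB/s per direction")
```

Aggregate die-to-die bandwidth would scale with the number of modules placed along each tile edge, which is why bridge density matters so much in packages of this size.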
