
Jake Roach is the Senior CPU Analyst at Tom's Hardware, writing reviews, news, and features about the latest consumer and workstation processors.

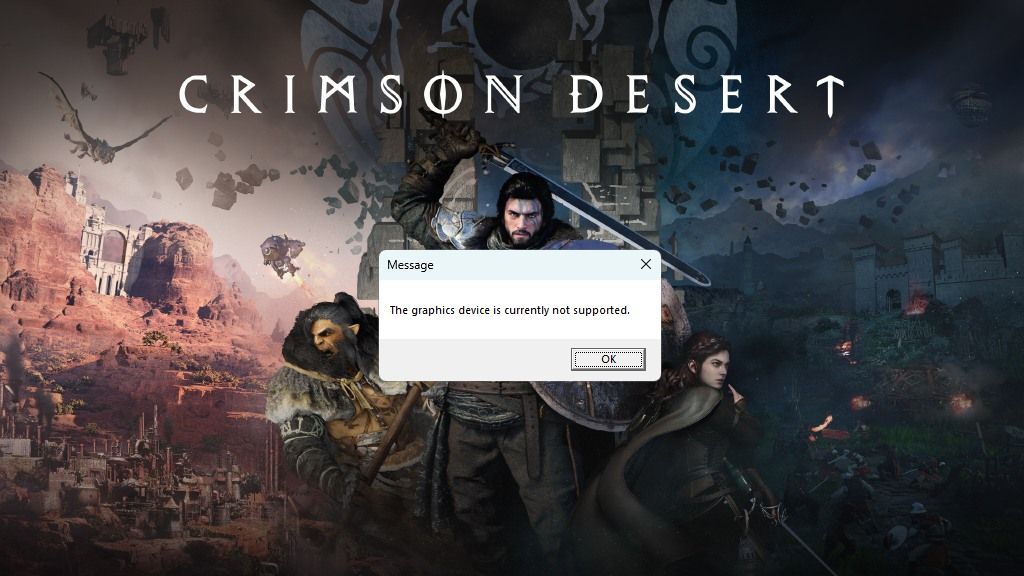

chaos215bar2 Of course the blame is on the developer. The entire reason we have standardized graphics APIs is so that any game can run on any hardware meeting minimum support requirements without having to be built to run on specific GPUs. First, you build out the baseline functionality to the standard. Then, maybe, you add on extra bells and whistles for specific GPUs with proprietary extensions. Something seems to be fundamentally backwards about Crimson Desert's engine design if it's just giving up when running on hardware that hasn't been explicitly whitelisted. If the game was running, but hitting weird glitches or crashes, then maybe the blame could lie with Intel.

usertests chaos215bar2 said: "Something seems to be fundamentally backwards about Crimson Desert's engine design if it's just giving up when running on hardware that hasn't been explicitly whitelisted." They have their own bespoke engine: https://wccftech.com/crimson-desert-different-level-unreal-engine-5/ I agree with your sentiment. There shouldn't have to be driver updates just for games to run or even be optimized. It's a bad situation that certainly isn't making it easier for competition in the GPU market. What if a game was released and it just ran on Nvidia, AMD, Intel, Apple, Imagination, Lisuan, etc.?

Penfolduk Whilst in theory the standard APIs should make games agnostic to the type of graphics card used, in practice it seems that games all need hardware-specific tweaks. And no one ever explains why. Is it that the underlying hardware architectures are so different that it requires different approaches with the APIs to get decent results out of them? Or are devs effectively being bribed to favour one graphics architecture over another? And what does happen if you just write code that only uses API calls and is otherwise hardware agnostic?

Cwiiis Penfolduk said: "And no one ever explains why. Is it that the underlying hardware architectures are so different that it requires different approaches with the APIs to get decent results out of them?" You've hit the nail on the head. Each GPU design is quite significantly different from the next and, regardless of the hardware-agnostic API, different usage patterns can behave very differently on different hardware. I'm not up on the most modern GPUs, but if you aren't regularly testing on all architectures from day one, you can go far down a path that will be hard to rectify after the fact. For example, you may rely throughout your graphics pipeline on a certain usage of GPU compute that works significantly more efficiently on one architecture than another. With respect to Intel specifically, I expect it's a little bit of that (it seems like an interesting design that rewards smart usage of memory access and has excellent large transfer performance – but perhaps doesn't do so well with cache misses?) and a little bit of a premature driver issue (they've made great strides, but Nvidia and AMD have an insurmountable head start and huge market support).

rluker5 This sounds like a whitelist issue. Basically all games made before Arc was released weren't made to work with Intel dGPUs, and basically all of them work on Intel dGPUs. GPUs aren't that different when it comes to raster. As evidence, game mods care more about the game launcher than the GPU brand when it comes to functionality. Fallout 3 has a whitelist, and to use an Intel iGPU you need to add a file so the game thinks it is some weak Nvidia GPU. Then it runs fine. You can't make a game work if your brand is banned, unless you have a mod to trick the game into lifting the ban.

coolitic Penfolduk said: "Whilst in theory the standard APIs should make games agnostic to the type of graphics card used, in practice it seems that games all need hardware-specific tweaks. And no one ever explains why. Is it that the underlying hardware architectures are so different that it requires different approaches with the APIs to get decent results out of them? Or are devs effectively being bribed to favour one graphics architecture over another? And what does happen if you just write code that only uses API calls and is otherwise hardware agnostic?" You'd think you'd at least have a mode where you don't use vendor-specific tweaks, and just follow the specification in the most painless manner. Also, yes, certain GPU architectures have some preference for certain patterns, but it's hard for me to see that causing anything other than performance problems.

mrdoc22 Then we are back to 1992, with no standard graphics drivers to control the graphics card, and having to use the "Glide API" to control the "Voodoo" card. Windows resolved that with the standard "Direct3D" API for controlling the graphics card. If they are having problems using a standard graphics API, they have some serious problems with their source code for the game. (If the graphics card has some extra features, then it's an option to use them; it should not be a requirement to play the game.)

yngndrw Penfolduk said: "Whilst in theory the standard APIs should make games agnostic to the type of graphics card used, in practice it seems that games all need hardware-specific tweaks. And no one ever explains why. Is it that the underlying hardware architectures are so different that it requires different approaches with the APIs to get decent results out of them? Or are devs effectively being bribed to favour one graphics architecture over another? And what does happen if you just write code that only uses API calls and is otherwise hardware agnostic?" In a word, performance. Anybody can make a game which works fine across all of the available GPUs (as long as there are no driver bugs), but if you want to push limits, then performance optimisation is key. These performance optimisations can be very specific to a particular architecture and are more important than ever with geometry shaders and ray tracing. Then of course you have manufacturers sponsoring a game, to ensure that the developers spend more time optimising for their platform.

beyondlogic If Intel has been snubbed after bending over backwards, then that's just a poor showing by the company. ATM, Intel has bought good faith by making its current GPUs decent for the price. It's a shame it hasn't netted more market share, but I get a feeling that could change with the strides they're making on the software side. At least they're not releasing house-fire cards.


