
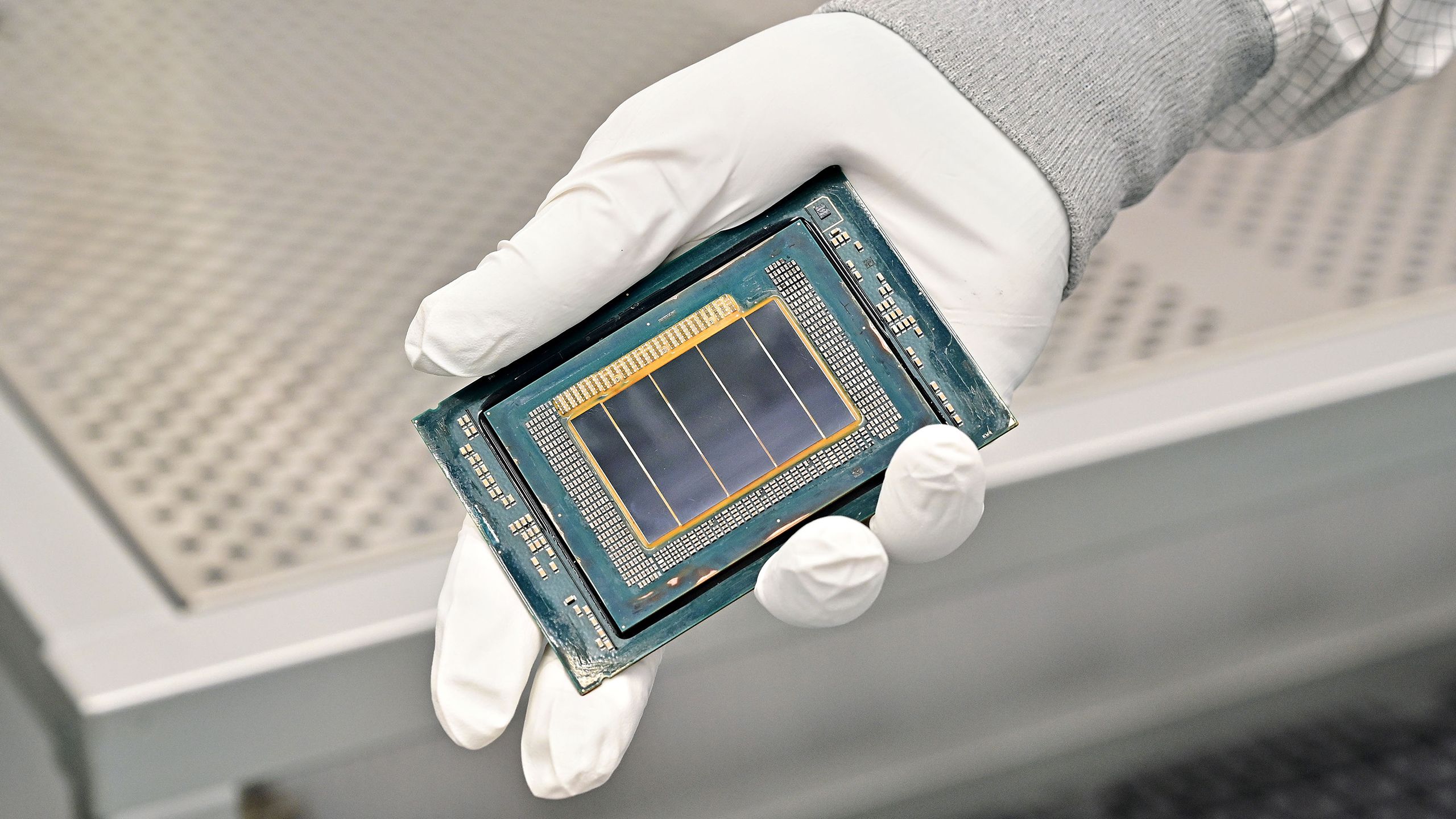

Intel announced today at Nvidia GTC 2026 in San Jose that its Xeon 6 processor will serve as the host CPU in Nvidia's DGX Rubin NVL8 systems, extending the x86 pairing the two companies established with the Xeon 6776P in current DGX B300 Blackwell-based platforms.

The DGX Rubin NVL8 is Nvidia's next-generation flagship AI server system. In that configuration, the host CPU is responsible for task orchestration, memory management, scheduling, and data movement to the GPU accelerators. With inference workloads shifting toward agentic AI and reasoning systems, those functions place increasingly heavy demands on per-core performance and memory bandwidth.

Intel said Xeon 6 addresses those demands through a combination of memory capacity, bandwidth, and I/O capabilities. The platform supports up to 8TB of system memory, which Intel cited as key for supporting large language models with growing key-value caches.
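To give a rough sense of scale for the key-value cache point, the sketch below estimates KV-cache size with the standard per-token formula (one key and one value vector per layer and KV head). The model dimensions are hypothetical and not taken from Intel or Nvidia. Reading 8TB as 8 × 2^40 bytes and assuming the whole cache sits in host memory are further assumptions made only for illustration.

```python
# Back-of-the-envelope KV-cache sizing. All model dimensions below are
# hypothetical illustration values, not figures from Intel or Nvidia.

def kv_cache_bytes(num_layers, num_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_value=2):
    """Approximate KV-cache size in bytes.

    The leading factor of 2 accounts for storing both a key and a value
    vector per layer, per KV head, per token.
    """
    return (2 * num_layers * num_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_value)


def max_cached_tokens(memory_bytes, num_layers, num_kv_heads, head_dim,
                      bytes_per_value=2):
    """How many tokens of KV cache fit in a given amount of memory."""
    per_token = kv_cache_bytes(num_layers, num_kv_heads, head_dim,
                               seq_len=1, bytes_per_value=bytes_per_value)
    return memory_bytes // per_token


# Hypothetical large model: 80 layers, 8 KV heads, head dimension 128, FP16.
MODEL = dict(num_layers=80, num_kv_heads=8, head_dim=128)

per_token = kv_cache_bytes(seq_len=1, **MODEL)           # bytes per token
one_context = kv_cache_bytes(seq_len=128 * 1024, **MODEL)  # one 128K context
capacity = max_cached_tokens(8 * 2**40, **MODEL)          # tokens in 8 TiB

print(f"per token:       {per_token / 2**10:.0f} KiB")
print(f"128K context:    {one_context / 2**30:.0f} GiB")
print(f"tokens in 8 TiB: {capacity:,}")
```

Under these assumed dimensions each token costs 320 KiB, so a single 128K-token context needs about 40 GiB. That is why cache footprint grows quickly once many long-context sessions run concurrently.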

Meanwhile, memory bandwidth has improved 2.3 times generation-on-generation via MRDIMM technology, raising the rate at which data reaches the GPU accelerators. PCIe 5.0 lanes handle high-bandwidth accelerator connectivity, and a feature Intel calls Priority Core Turbo dedicates strong single-thread performance to orchestration, scheduling, and data movement tasks, keeping GPU utilization high as workload complexity increases.

Security coverage extends across the CPU-to-GPU data path through Intel Trust Domain Extensions (TDX), which adds hardware-rooted isolation and attestation via an Encrypted Bounce Buffer. Intel said end-to-end confidential computing is increasingly required as AI inference scales across data center, cloud, and edge deployments. Xeon 6 also now supports Nvidia Dynamo, an inference orchestration framework that enables heterogeneous scheduling across CPU and GPU resources within the same cluster.

"In this new era, the host CPU is mission-critical," said Jeff McVeigh, corporate vice president and general manager of Data Center Strategic Programs at Intel. "It governs orchestration, memory access, model security, and throughput across GPU-accelerated systems."

Intel also cited Xeon's x86 software ecosystem and enterprise deployment history as factors in the selection, noting compatibility with existing AI software stacks. The DGX Rubin NVL8 configuration builds on the same architectural foundation as DGX B300, giving operators platform continuity between Blackwell and Rubin generations.



