
Meanwhile, a bigger, faster Local Data Share (LDS), an on-chip scratchpad memory inside each compute unit (CU), “improves the utilization of the newly expanded matrix compute unit with extensive on-chip data reuse,” Adaikkalavan explains. The LDS is substantially larger in the MI350 series than in the MI300X series: 160KB per CU versus 64KB, with double the bandwidth.

During matrix multiply-accumulate operations, the LDS feeds data directly to the matrix compute units, so a larger LDS reduces how often the GPU has to reach out to slower memory tiers to reload operand data. AMD also added a direct load path from the L1 data cache into the LDS that bypasses intermediate registers, further reducing memory latency for these operations.
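To see why capacity matters, here is a deliberately simplified model of a tiled matrix multiply. It is a sketch, not how AMD's kernels actually tile work. It assumes square tiles, FP16 operands (2 bytes), exactly one A tile and one B tile resident in the LDS at a time (no double buffering), and 1KB = 1,024 bytes; the function names are hypothetical. Under those assumptions every element staged in the LDS is reused `tile` times, so operand traffic from slower memory scales roughly as 2n³/tile.

```python
import math


def max_square_tile(lds_bytes: int, bytes_per_elem: int) -> int:
    """Largest edge T such that one T x T tile of A and one of B fit in the LDS together."""
    # Constraint (simplified model): 2 tiles * T^2 elements * bytes_per_elem <= lds_bytes
    return math.isqrt(lds_bytes // (2 * bytes_per_elem))


def approx_offchip_loads(n: int, tile: int) -> float:
    """Approximate elements fetched from outside the LDS for an n x n by n x n matmul.

    There are (n / tile)^2 output tiles; each streams n / tile pairs of A and B
    tiles of 2 * tile^2 elements, giving roughly 2 * n^3 / tile elements overall.
    """
    return 2 * n**3 / tile


FP16_BYTES = 2  # assumption: FP16 operands
old_tile = max_square_tile(64 * 1024, FP16_BYTES)   # 64KB LDS (MI300X series)
new_tile = max_square_tile(160 * 1024, FP16_BYTES)  # 160KB LDS (MI350 series)

n = 8192  # hypothetical problem size; it cancels out of the ratio
ratio = approx_offchip_loads(n, new_tile) / approx_offchip_loads(n, old_tile)
print(f"tile edge: {old_tile} -> {new_tile}")
print(f"modeled off-LDS operand traffic: {ratio:.0%} of the 64KB case")
```

Under these assumptions the tile edge grows from 128 to 202 elements, which cuts modeled off-LDS operand traffic to about 63% of the 64KB case. Real kernels also use register blocking and double buffering, so the actual gain will differ.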

AMD submitted the MI355X to MLPerf Inference v5.1, where it achieved 93,045 tokens per second on the Llama 2 70B benchmark, a 2.7x improvement over the MI325X. In internal throughput comparisons that ran FP4 inference on the MI355X against the MI300X's FP8 results, AMD showed roughly a threefold improvement in token generation across DeepSeek R1, Llama 4 Maverick, and Llama 3.3 70B.

It’s worth noting that those figures pit the MI355X's FP4 results against the MI300X's FP8 results, and the MI300X never supported FP4. So while this data does demonstrate a generational improvement in practice, it doesn’t isolate hardware gains from software and data-format improvements.
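A rough way to see why the data format alone can move these numbers: in bandwidth-bound token generation, each new token requires reading every weight once, so a simple upper bound is tokens/s ≈ memory bandwidth ÷ weight bytes. The sketch below assumes batch size 1 and ignores KV-cache traffic, compute limits, and kernel efficiency; the function name is hypothetical and the bandwidth figure is a placeholder, not a published spec.

```python
def decode_ceiling_tokens_per_s(bandwidth_bytes_per_s: float,
                                num_params: float,
                                bits_per_weight: int) -> float:
    """Upper bound on single-stream decode rate when weight reads dominate."""
    weight_bytes = num_params * bits_per_weight / 8
    return bandwidth_bytes_per_s / weight_bytes


BANDWIDTH = 5e12  # hypothetical 5 TB/s, held identical for both formats
PARAMS = 70e9     # a 70B-parameter model

fp8 = decode_ceiling_tokens_per_s(BANDWIDTH, PARAMS, 8)
fp4 = decode_ceiling_tokens_per_s(BANDWIDTH, PARAMS, 4)
print(f"FP8 ceiling: {fp8:.1f} tok/s, FP4 ceiling: {fp4:.1f} tok/s ({fp4 / fp8:.1f}x)")
```

On identical hardware the ceiling doubles purely from halving the bytes per weight, which is why an FP4-versus-FP8 comparison cannot by itself attribute a gain to the silicon.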

The training comparison against Nvidia carries a similar caveat. AMD's data shows the MI355X completing a Llama 2 70B LoRA fine-tuning run in 10.18 minutes versus 11.15 minutes for the GB200, making it about 10% faster. AMD's result came from MLPerf Training v5.1 using FP4, while the Nvidia figure is the GB200's last published FP8 score from MLPerf Training v5.0; Nvidia has not submitted a comparable FP4 training result.
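The "about 10% faster" figure follows from the two run times. A short check using only the numbers quoted above shows how the framing changes the percentage: expressed as relative throughput the MI355X is about 9.5% faster, while expressed as wall-clock time it finishes about 8.7% sooner.

```python
mi355x_min = 10.18  # MLPerf Training v5.1, FP4 (AMD)
gb200_min = 11.15   # MLPerf Training v5.0, FP8 (Nvidia's last published score)

speedup = gb200_min / mi355x_min         # relative throughput
time_saved = 1 - mi355x_min / gb200_min  # fraction of wall-clock time saved

print(f"speedup: {speedup:.3f}x ({speedup - 1:.1%} faster)")
print(f"time saved: {time_saved:.1%}")
```

Both percentages inherit the caveat above: the runs come from different MLPerf rounds and different precisions.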

Adaikkalavan was candid about what the parity result reflects: "We are actually matching the performance of the more expensive and complex GB200. It tells you a couple of things. One, we have strong hardware, which we always knew. And second, the open software frameworks have made tremendous progress."

By AMD's reckoning, the MI355X carries 288GB of HBM3E against the B200's 192GB and delivers roughly double the FP64 throughput (2.1x that of the B200). For general inference workloads, the two accelerators are at rough parity, which makes the MI355X's larger memory pool its most consistent advantage: it can run large models without distributing them across multiple GPUs.
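To illustrate what the capacity gap means in practice, here is a back-of-the-envelope count of how many GPUs are needed just to hold a model's weights. It uses a hypothetical 250-billion-parameter model in FP8, treats 1GB as 10^9 bytes, and ignores KV cache, activations, and runtime overhead, all of which push real deployments toward needing more headroom. The helper name is hypothetical.

```python
def gpus_for_weights(num_params: int, bits_per_weight: int, hbm_gb: int) -> int:
    """Minimum GPU count whose combined HBM can hold the weights (weights only)."""
    weight_bytes = num_params * bits_per_weight // 8
    capacity_bytes = hbm_gb * 10**9
    return -(-weight_bytes // capacity_bytes)  # ceiling division on integers


PARAMS = 250 * 10**9  # hypothetical 250B-parameter model

for name, hbm_gb in [("MI355X", 288), ("B200", 192)]:
    print(name, gpus_for_weights(PARAMS, 8, hbm_gb))
```

At FP8 the weights (about 250GB) fit on a single MI355X but would have to be split across two B200s under these assumptions.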

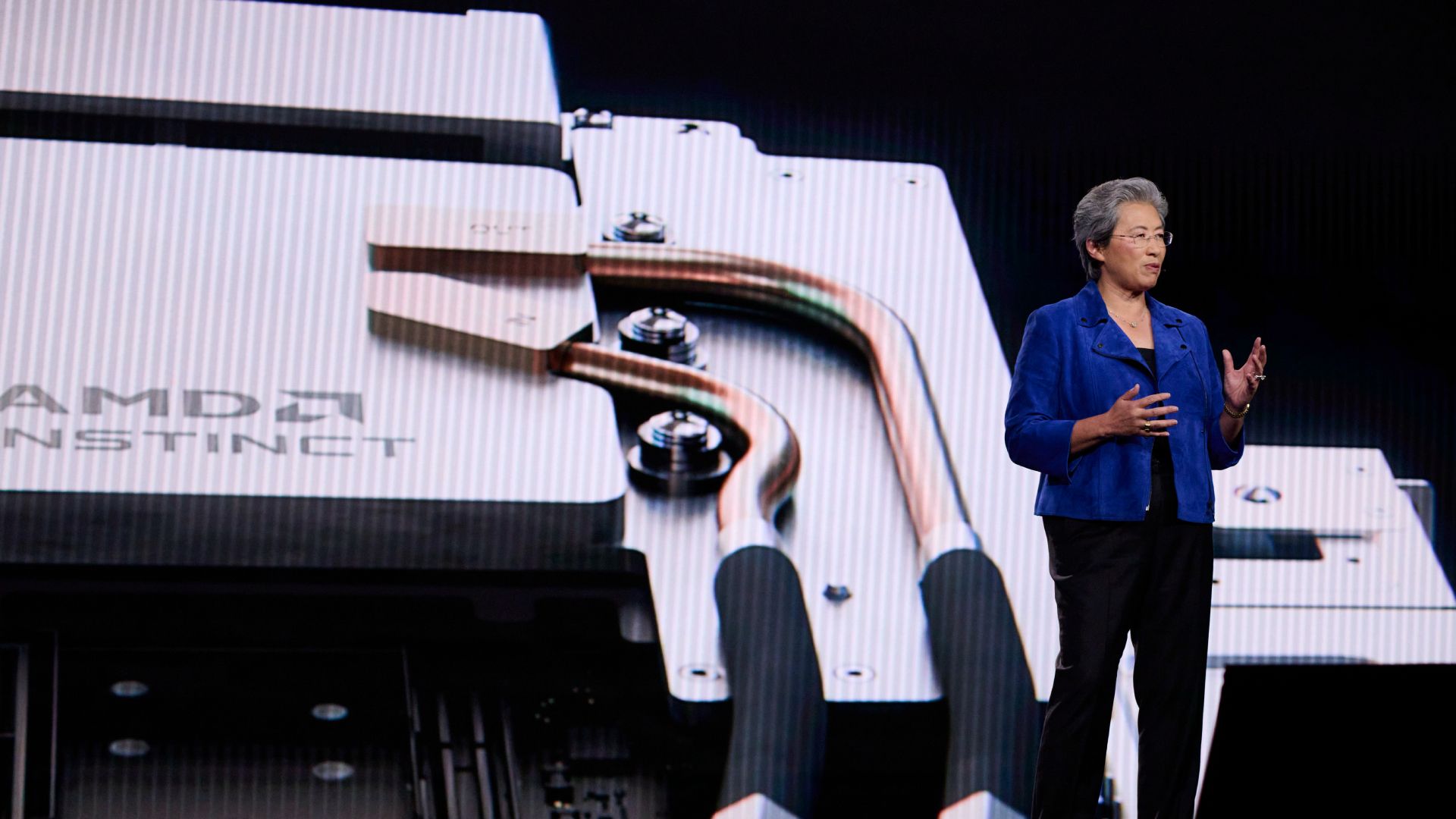

Both the MI350X (1,000W TBP, 2,200 MHz) and the flagship MI355X (1,400W TBP, 2,400 MHz) maintain the same physical form factor as the MI300X. AMD built that constraint into the project from the start, designing the entire CDNA 4 generation to function as a drop-in infrastructure upgrade for existing MI300-based servers rather than requiring new rack designs or cooling infrastructure.

With the MI400-series waiting in the wings, however, the MI350 series will soon play second fiddle. The MI400 is built on TSMC's N2 process, with 432GB of HBM4 and roughly double the compute. AMD continues to promise those chips for the second half of this year. But in a world where every AI FLOP is potentially valuable, both AMD and its customers will likely continue to optimize performance on the MI350 family for some time to come.

Luke James is a freelance writer and journalist. Although his background is in legal, he has a personal interest in all things tech, especially hardware and microelectronics, and anything regulatory.
