
The on-chip 2D network-on-chip (NoC) uses a straightforward XY routing scheme, so packets first travel along one axis and then the other, with turn restrictions applied to avoid deadlocks. Arbitration inside routers is handled using a weighted round-robin mechanism, so traffic from different sources gets serviced fairly, but with adjustable priority. The quality-of-service weights can be modified at runtime to make the system favor certain traffic types depending on whether the workload is compute-heavy or memory-intensive.
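To make the two mechanisms concrete, here is a minimal Python sketch of dimension-ordered XY routing and a weighted round-robin arbiter whose weights can be changed at runtime. It is an illustration under stated assumptions, not Rebellions' implementation: the function and class names, the port labels ("compute", "memory"), and the credit-based arbitration variant are all hypothetical choices made for the example.

```python
from typing import Dict, List, Optional, Set, Tuple

Coord = Tuple[int, int]


def xy_route(src: Coord, dst: Coord) -> List[Coord]:
    """Return every router visited from src to dst, moving along X first, then Y.

    Because a packet never turns from the Y axis back onto the X axis,
    the turns that could close a dependency cycle never occur.
    """
    x, y = src
    path = [src]
    while x != dst[0]:
        x += 1 if dst[0] > x else -1
        path.append((x, y))
    while y != dst[1]:
        y += 1 if dst[1] > y else -1
        path.append((x, y))
    return path


class WeightedRoundRobinArbiter:
    """Credit-based weighted round-robin (hypothetical variant).

    Each port receives `weight` credits per round. Ports are visited in
    rotating order, and a port can only win a grant while it has credits left.
    """

    def __init__(self, weights: Dict[str, int]) -> None:
        self.weights = dict(weights)
        self.order = list(weights)
        self.credits = dict(weights)
        self.ptr = 0  # index of the port that gets first look next time

    def set_weight(self, port: str, weight: int) -> None:
        """Change a QoS weight at runtime; it takes effect at the next refill."""
        if weight < 0:
            raise ValueError("weight must be non-negative")
        self.weights[port] = weight

    def grant(self, requests: Set[str]) -> Optional[str]:
        """Pick one requesting port, or None if nobody can be served."""
        active = [p for p in self.order if p in requests]
        if not active:
            return None
        if all(self.credits[p] == 0 for p in active):
            # Every requester has used up its share, so start a new round.
            self.credits = dict(self.weights)
        n = len(self.order)
        for i in range(n):
            port = self.order[(self.ptr + i) % n]
            if port in requests and self.credits[port] > 0:
                self.credits[port] -= 1
                self.ptr = (self.ptr + i + 1) % n
                return port
        return None


if __name__ == "__main__":
    print(xy_route((0, 0), (2, 1)))
    arb = WeightedRoundRobinArbiter({"compute": 3, "memory": 1})
    print([arb.grant({"compute", "memory"}) for _ in range(8)])
```

With weights of 3 and 1, a saturated arbiter serves the two sources in a 3:1 ratio while still granting the lower-weight source in every round, which is the "fair, but with adjustable priority" behavior described above.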

The 2D NoC mesh inside each chiplet logically expands over UCIe, so the full quad-chiplet system-in-package behaves like one large mesh-connected processor. Given the low chiplet-to-chiplet latency (or rather FDI-to-FDI latency), this greatly simplifies life for software developers. Interestingly, while all chiplets feature three UCIe-A interfaces for versatility (or maybe redundancy?), the full configuration scales to 256 routers across the entire mesh, so it remains to be seen whether Rebellions can build accelerators with more than four chiplets using the existing architecture.
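As a rough illustration of what "one large logical mesh" means for addressing, the Python sketch below maps a global router coordinate to a chiplet and a local coordinate, and counts how many die-to-die links a dimension-ordered route would cross. The layout is an assumption made purely for the example: the article only gives the 256-router total, so the 2×2 arrangement of chiplets and the 8×8 routers per chiplet (256 divided evenly by four) are hypothetical, as are all names.

```python
from typing import Tuple

# Assumptions for illustration only, not disclosed by Rebellions:
CHIPLET_DIM = 8   # routers per chiplet edge (8 x 8 = 64 routers per chiplet)
CHIPLET_GRID = 2  # chiplets per package edge (2 x 2 = 4 chiplets)
MESH_DIM = CHIPLET_DIM * CHIPLET_GRID  # 16 x 16 = 256 routers in total


def locate(gx: int, gy: int) -> Tuple[int, int, int]:
    """Map a global mesh coordinate to (chiplet_id, local_x, local_y)."""
    if not (0 <= gx < MESH_DIM and 0 <= gy < MESH_DIM):
        raise ValueError("coordinate outside the logical mesh")
    cx, lx = divmod(gx, CHIPLET_DIM)
    cy, ly = divmod(gy, CHIPLET_DIM)
    return cy * CHIPLET_GRID + cx, lx, ly


def die_crossings(src: Tuple[int, int], dst: Tuple[int, int]) -> int:
    """Count die-to-die hops on a dimension-ordered route from src to dst."""
    (sx, sy), (dx, dy) = src, dst
    return (abs(sx // CHIPLET_DIM - dx // CHIPLET_DIM)
            + abs(sy // CHIPLET_DIM - dy // CHIPLET_DIM))


if __name__ == "__main__":
    print(MESH_DIM * MESH_DIM)              # total routers in the logical mesh
    print(locate(9, 3))                     # lands on the second chiplet
    print(die_crossings((0, 0), (15, 15)))  # corner-to-corner route
```

Software that addresses routers by global coordinates never needs to know where a die boundary lies; only the hop latency differs when a route crosses one.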

Although the UCIe 1.0 specification includes mappings for the CXL.io, CXL.mem, and CXL.cache protocols on top of a PCIe 6.0 interconnection, those mappings are optional rather than mandatory. The spec also supports vendor-defined streaming and memory-semantics protocols, which is the route Rebellions took with the Rebel 100.

Rebellions built a fairly aggressive data-movement engine to keep its quad-chiplet design fed. Each NPU die integrates a configurable DMA subsystem with eight execution engines that can pull data from local HBM3E, remote HBM3E located on another chiplet, or from distributed shared memory. Bandwidth per DMA can reach up to 2.6 TB/s, which is arguably enough for an inference-focused accelerator. Meanwhile, to prevent certain tasks from starving others, the company implemented task-level QoS controls designed to reduce long-tail latency and avoid congestion when different workloads are running simultaneously.

Coordinating work across four chiplets requires careful synchronization. Rather than relying on a single dedicated scheduler, Rebellions implemented synchronization managers in each NPU. Each chiplet integrates a dedicated hardware synchronization manager with hardwired control logic that can coordinate activity across dies, either under centralized control or in a more autonomous manner. The architecture specifically avoids direct peer-to-peer communications between units and inter-unit dependencies to cut down unnecessary traffic and coordination overhead and keep overall utilization high during different execution phases of LLM inference.

To improve the reliability of its die-to-die interface, in addition to standard UCIe functionality, Rebellions implemented multiple loopback modes, transaction-level tracking, and channel-level diagnostics, which are generally intended to simplify validation and fault isolation in a multi-die package during debugging. For commercial deployments, Rebellions added a configurable switching mode that uses the aforementioned features to trade a small amount of performance for improved MTBF and MTTF characteristics, which is important for large AI clusters where uptime matters more than marginal throughput gains.

The Rebel 100 accelerator is rated for a 600W thermal design power (TDP), but instantaneous transient surges, which occur when multiple neural cores switch on at once, exceed the nominal level by a factor of two. As currents rise quickly and sharply, they create voltage dips, which pose significant challenges for the power integrity of the quad-chiplet AI accelerator.

To mitigate this, Rebellions implemented a hardware staggering technique that offsets start times of neural cores instead of activating them simultaneously, which smooths current ramps and reduces supply noise. Measurements show that synchronized switching produces steep current spikes and noticeable voltage disturbance, whereas staggered activation results in gentler transitions and a more stable power rail, according to Rebellions. Additional control logic dynamically limits instruction issue rate over short time windows to further reduce sudden load changes both within a chiplet and across dies.

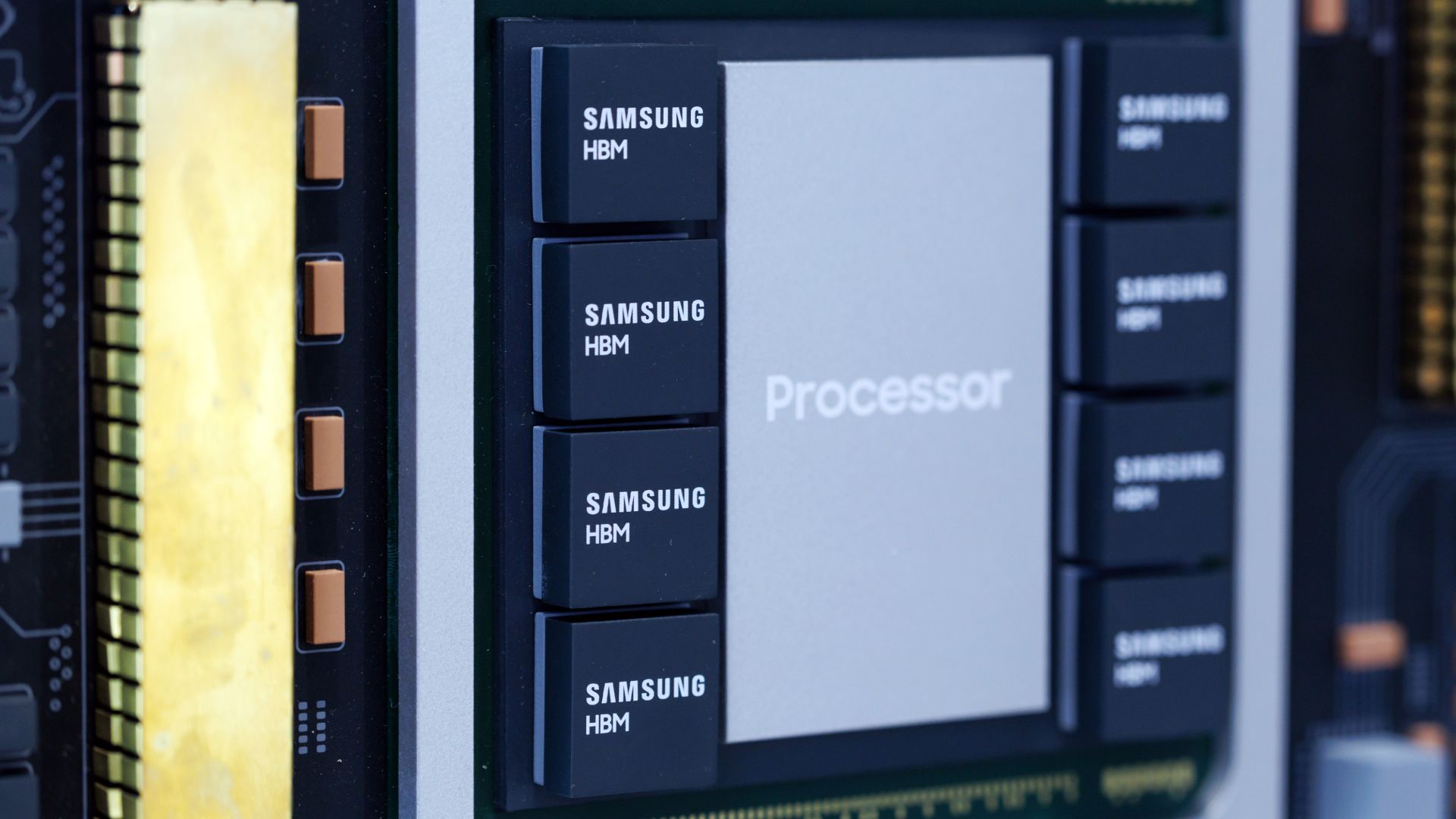
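The effect of staggering on current slew rate can be sketched with a toy model. The Python example below assumes each core ramps linearly from zero to a fixed current, then compares the peak rate of change of total current when all cores start together against a staggered start. Every number in it (eight cores, 10 A per core, a 10 ns ramp, the offsets) is hypothetical and chosen only to make the arithmetic easy to follow; none comes from Rebellions' measurements.

```python
def total_current(t_ns: float, n_cores: int, i_core_a: float,
                  ramp_ns: float, offset_ns: float) -> float:
    """Total current at time t when core k starts ramping at k * offset_ns."""
    total = 0.0
    for k in range(n_cores):
        start = k * offset_ns
        # Fraction of this core's ramp completed, clamped to [0, 1].
        frac = min(max((t_ns - start) / ramp_ns, 0.0), 1.0)
        total += frac * i_core_a
    return total


def peak_slew(n_cores: int, i_core_a: float,
              ramp_ns: float, offset_ns: float) -> float:
    """Largest increase in total current over any 1 ns step, in A/ns."""
    end_ns = int((n_cores - 1) * offset_ns + ramp_ns)
    peak = 0.0
    prev = total_current(0, n_cores, i_core_a, ramp_ns, offset_ns)
    for t in range(1, end_ns + 1):
        cur = total_current(t, n_cores, i_core_a, ramp_ns, offset_ns)
        peak = max(peak, cur - prev)
        prev = cur
    return peak


if __name__ == "__main__":
    # Hypothetical values: 8 cores, 10 A each, 10 ns ramp.
    print("simultaneous:", peak_slew(8, 10.0, 10.0, 0.0), "A/ns")
    print("staggered 5 ns:", peak_slew(8, 10.0, 10.0, 5.0), "A/ns")
    print("staggered 10 ns:", peak_slew(8, 10.0, 10.0, 10.0), "A/ns")
```

In this model the final current is the same in every case; staggering only spreads the ramp out in time, so fewer cores are ramping at any instant and the peak slew rate seen by the power delivery network falls accordingly. The cost is a longer time to reach full activity.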

Memory traffic adds another layer of stress. HBM3E bursts can be just as demanding as compute surges, which puts extra strain on the power delivery network. To reinforce it, Rebellions added dedicated integrated silicon capacitor (ISC) dies that embed distributed capacitance across the VDD rails to serve both the NPU and the HBM3E PHY. This approach further dampens voltage oscillations and lowers impedance peaks compared to a design without ISC dies.

With its very first Rebel 100 multi-chiplet AI inference accelerator, Rebellions has managed to achieve similar performance to Nvidia's H200 at lower power, albeit with a considerably higher consumption of silicon. An even bigger breakthrough for the company is that the Rebel 100 SiP is one of the industry's first multi-chiplet accelerators to use UCIe-A interconnects.

Instead of building two large reticle-size dies, Rebellions opted for a quad-chiplet design with four 320 mm² dies that are much easier to develop and yield, especially keeping in mind Samsung's pellicle-less approach to EUV that does not particularly favor large dies. To make the quad-chiplet design work seamlessly, Rebellions developed an internal 2D mesh network-on-chip that logically expands beyond the chiplet's boundaries over UCIe so the full quad-chiplet system-in-package behaves like one large mesh-connected processor.

To further optimize its design, Rebellions did not adopt standard CXL-based protocols but implemented its own configurable DMA subsystem and synchronization managers. Furthermore, to ensure power integrity, it implemented a proprietary hardware staggering technique that smooths current ramps and reduces supply noise. On top of that, the company added integrated silicon capacitor (ISC) dies to dampen voltage fluctuations and lower impedance peaks.

While not using the UCIe 1.0 specification to its full extent, the Rebel 100 represents a good example of a multi-chiplet design that relies on industry-standard interconnection while still using proprietary techniques to maximize performance and optimize the power of the system-in-package.

Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.
