
One thing that caught our attention during Jensen Huang's keynote at GTC 2026 on Monday was the lack of any mention of the Rubin CPX context phase accelerator, which the company promoted last year as an important part of the Vera Rubin platform. The Rubin CPX was also absent from the slides shown during the keynote. Those slides do mention Nvidia's upcoming Groq 3 LPU processors and LPX racks, which may indicate that these processors are replacing the CPX in Nvidia's roadmap.

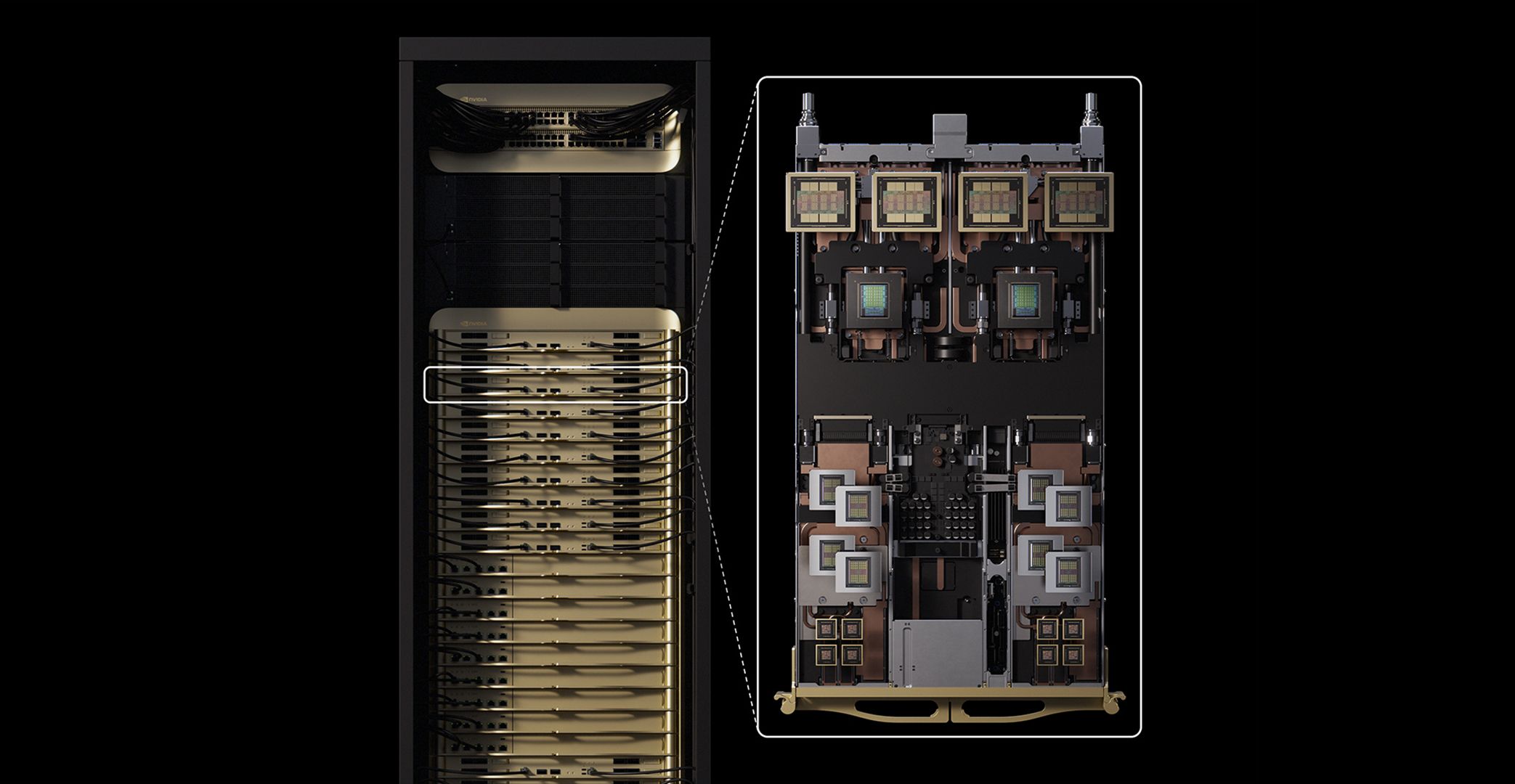

Nvidia's Rubin CPX GPU was meant to be a part of the company's Vera Rubin and Vera Rubin Ultra platforms. These GPUs were designed to accelerate the initial, compute-intensive context phase of a query, which processes the input to generate the first output token. The main advantage of the context phase accelerator was its reliance on GDDR7 memory, which does not offer the extreme bandwidth of HBM3E or HBM4 but consumes dramatically less power. This was said to greatly improve the competitiveness of Nvidia's Rubin platform for inference workloads.

However, the slides Nvidia showed at GTC omit Rubin CPX products but include the Groq 3 LPU, which may indicate that the company is now more focused on the latter than the former.


Nvidia's Groq 3 low-latency inference accelerators, which Nvidia calls LPUs, are designed to offer significant inference performance with extremely low latency, as they mainly rely on internal SRAM, which is by definition faster, lower latency, and lower power than any type of DRAM. For example, Nvidia's LP30 processor comes with 512 MB of SRAM and offers 1.23 FP8 PFLOPS of performance, or 9.6 FP8 PFLOPS per Groq 3 LPX compute tray and 315 FP8 PFLOPS per rack. By contrast, the Rubin CPX accelerator was to deliver up to 30 NVFP4 PFLOPS of compute throughput, but with considerably higher latency.
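To put the quoted LPU figures in proportion, here is a minimal back-of-the-envelope sketch in Python. It only divides the FP8 numbers cited above by one another; the variable and function names are our own, and the resulting ratios are rough implied counts, not configurations confirmed by Nvidia. The Rubin CPX figure is left out because it is quoted in NVFP4, a different precision that cannot be compared directly with FP8.

```python
# FP8 PFLOPS figures as quoted in the article for Groq 3 LPU hardware.
PER_CHIP_PFLOPS = 1.23   # one LP30 processor
PER_TRAY_PFLOPS = 9.6    # one Groq 3 LPX compute tray
PER_RACK_PFLOPS = 315.0  # one Groq 3 LPX rack


def implied_count(aggregate_pflops: float, per_unit_pflops: float) -> float:
    """Return how many units the aggregate figure implies (a plain ratio)."""
    return aggregate_pflops / per_unit_pflops


# Assumption: aggregate throughput scales linearly with unit count.
chips_per_tray = implied_count(PER_TRAY_PFLOPS, PER_CHIP_PFLOPS)
chips_per_rack = implied_count(PER_RACK_PFLOPS, PER_CHIP_PFLOPS)
trays_per_rack = implied_count(PER_RACK_PFLOPS, PER_TRAY_PFLOPS)

print(f"Implied chips per tray: {chips_per_tray:.2f}")
print(f"Implied chips per rack: {chips_per_rack:.2f}")
print(f"Implied trays per rack: {trays_per_rack:.2f}")
```

The ratios do not come out as exact whole numbers, which suggests the quoted per-chip and per-tray figures are rounded differently, so treat the results as approximate.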

For now, it remains to be seen whether Nvidia will actually offer its Rubin CPX accelerators or will refocus its efforts on Groq 3 LPU low-latency inference accelerators. Given Nvidia's recent $20 billion non-exclusive license acquisition of startup Groq's chip tech and talent, the move would make sense. The absence of Rubin CPX from the roadmap slides, combined with the public emphasis on LPU processors, is a rather clear indicator of the company's priorities. Nonetheless, it is possible that some of Nvidia's customers will deploy its CPX accelerators, as they have already invested in their deployment by tweaking their software for these processors. After all, off-roadmap parts are pretty common in the industry.

