
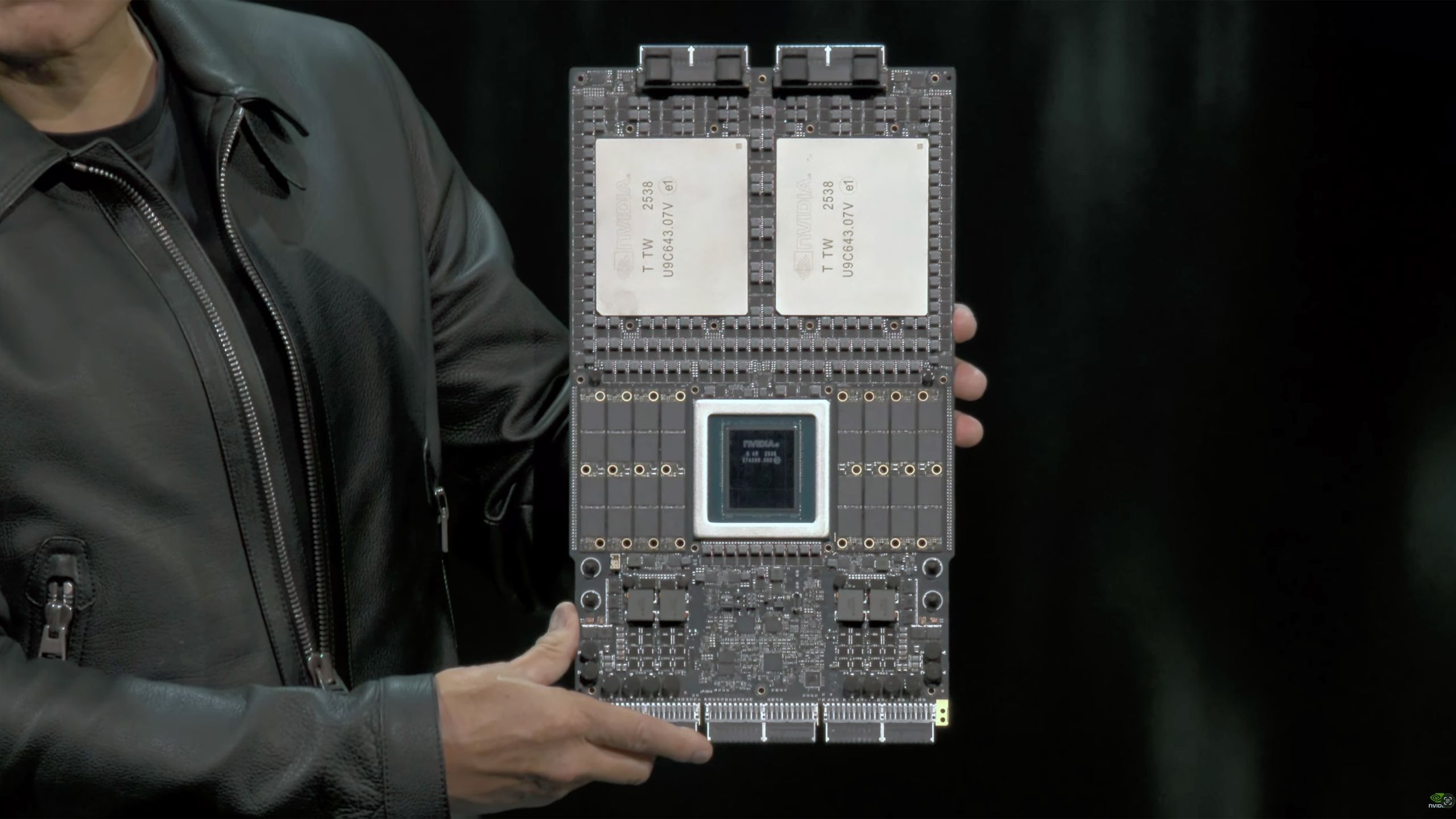

(Image credit: Nvidia/YouTube)

Recently, Nvidia announced that it had initiated 'full production' of its Vera Rubin platform for AI datacenters, reassuring partners that it is on track to launch later this year, ahead of rivals such as AMD. However, in addition to possibly bringing the release forward, Nvidia is also reportedly revamping the specifications of the Rubin GPU to offer higher performance: reports suggest a TDP increase to 2.3 kW per GPU and a memory bandwidth of 22.2 TB/s.

The Rubin GPU's power rating has now been locked in at 2.3 kW, up from the 1.8 kW originally announced by Nvidia but down from the 2.5 kW expected by some market observers, according to Keybanc (via @Jukan05). The increase from 1.8 kW reportedly stems from a desire to ensure that this year's Rubin-based platforms are markedly faster than AMD's Instinct MI455X, which is projected to operate at around 1.7 kW. The information about the power budget increase for Rubin comes from an unofficial source, but it is indirectly corroborated by SemiAnalysis, which claims that Nvidia has increased the data transfer rates of its HBM4 stacks, so that each Rubin GPU now offers a memory bandwidth of 22.2 TB/s, up from 13 TB/s. We have reached out to Nvidia to try to verify these claims.

An additional ~500 W of power headroom gives Nvidia multiple options to improve real-world performance rather than just specifications on paper. Most directly, it would enable higher sustained clocks under continuous training and inference loads, as well as reduced throttling when the AI accelerator is fully stressed. That extra power would also make it easier to keep more execution units running at the same time, which boosts throughput in heavy workloads where compute, memory, and interconnect are all under load at once.
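To put the reported numbers in relative terms, here is a quick back-of-the-envelope calculation. It is only a sketch: the inputs are the unconfirmed figures cited above (1.8 kW and 2.3 kW for Rubin, roughly 1.7 kW projected for the Instinct MI455X, and 13 TB/s versus 22.2 TB/s of memory bandwidth), and the helper `pct_change` and the constant names are illustrative, not anything published by Nvidia or AMD.

```python
def pct_change(old: float, new: float) -> float:
    """Return the percentage change going from `old` to `new`."""
    return (new - old) / old * 100.0


# Figures as reported in the article; none are confirmed by Nvidia or AMD.
RUBIN_TDP_ORIGINAL_KW = 1.8    # originally announced Rubin power rating
RUBIN_TDP_REPORTED_KW = 2.3    # reported revised Rubin power rating
MI455X_TDP_PROJECTED_KW = 1.7  # projected AMD Instinct MI455X power
RUBIN_BW_ORIGINAL_TBS = 13.0   # earlier Rubin memory bandwidth
RUBIN_BW_REPORTED_TBS = 22.2   # reported revised Rubin memory bandwidth

# Absolute power increase per GPU, converted from kW to W.
tdp_delta_w = (RUBIN_TDP_REPORTED_KW - RUBIN_TDP_ORIGINAL_KW) * 1000.0

# Relative changes implied by the reported figures.
tdp_gain_pct = pct_change(RUBIN_TDP_ORIGINAL_KW, RUBIN_TDP_REPORTED_KW)
bw_gain_pct = pct_change(RUBIN_BW_ORIGINAL_TBS, RUBIN_BW_REPORTED_TBS)
tdp_vs_mi455x_pct = pct_change(MI455X_TDP_PROJECTED_KW, RUBIN_TDP_REPORTED_KW)

print(f"Extra power per GPU: {tdp_delta_w:.0f} W")
print(f"Rubin TDP increase: {tdp_gain_pct:.1f}%")
print(f"Rubin memory bandwidth increase: {bw_gain_pct:.1f}%")
print(f"Rubin TDP relative to MI455X projection: +{tdp_vs_mi455x_pct:.1f}%")
```

Taken at face value, the reported bandwidth uplift is proportionally much larger than the power increase, which fits the claim that faster HBM4 accounts for a significant part of the revision.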



