ekio Good news from Ngreedia? Wow. Not too early to see competition in the Arm64 laptop market. I was wondering if ARM was a locked license to Qualcomm at this point… x86 is a bloated and obsolete CISC ISA with instructions and concepts from 50 years ago; aarch64 v9 is a RISC ISA that is barely over a decade old. (In advance: stop confusing Arm the company and Arm the ISA.)

Roland Of Gilead I've been wondering who would be first to market with an ARM-based SoC with a more powerful GPU. If Qualcomm and SD aren't on the same trajectory, their iGPUs are just too weak to compete. It will be interesting to see real testing and performance numbers. Question to those in the know – Can ARM not use PCIe to make use of modern GPUs? Why does that seem so hard?

bit_user Roland Of Gilead said: I've been wondering who would be first to the market with and ARM based SOC, with a more powerful GPU. If Qualcomm and SD aren't on the same trajectory, their iGPU's are just too weak to compete. It will be interesting to see real testing and performance numbers.

Chips & Cheese interviewed the team lead of Qualcomm's newest Adreno in the Snapdragon X2: https://chipsandcheese.com/p/diving-into-qualcomms-upcoming-adreno

I'm just now noticing this is the same guy who's jumping ship and heading to Intel! https://www.tomshardware.com/pc-components/gpus/eric-demers-leaves-for-intel-after-14-years-at-qualcomm-father-of-radeon-and-adreno-gpus-now-sits-at-lip-bu-tans-table

Anyway, the point I wanted to make is that not only are they continuing to optimize their GPU IP, but the Snapdragon X2 Elite will also expand its memory datapath to 192-bit, which is halfway between the standard laptop/desktop spec of 128-bit and the 256-bit width used by Nvidia GB10/N1 and AMD's Strix Halo (Ryzen AI Max). On top of that, the higher-speed LPDDR5X they're using closes most of the remaining gap. And if that weren't enough, it will feature a rather large chunk of SRAM. As large iGPUs tend to be rather bottlenecked by memory bandwidth, these changes could result in a decent step up for the X2. You can see more published details of the X2's GPU (and the rest of it) here: https://chipsandcheese.com/p/qualcomms-snapdragon-x2-elite

Roland Of Gilead said: Question to those in the know – Can ARM not use PCIe to make use of modern GPU's? Why does that seems so hard?

There needs to be a market in order for such products to make sense. Until a market gets established for ARM-based gaming laptops, I think you won't see any with dGPUs. On a technical level, yes – dGPUs have been used in various ARM-based machines, ranging from Ampere workstations to Raspberry Pi 4 & 5 and others.

https://www.jeffgeerling.com/blog/2023/ampere-altra-max-windows-gpus-and-gaming/
https://www.jeffgeerling.com/blog/2025/big-gpus-dont-need-big-pcs/
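As a rough illustration of the bus-width argument above, theoretical peak memory bandwidth is just bytes per transfer times transfers per second. The sketch below uses the bus widths mentioned in the post; the LPDDR5X data rates (8000 and 9523 MT/s) and the function name `peak_bandwidth_gbs` are illustrative assumptions, not figures taken from this thread.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_mts: float) -> float:
    """Theoretical peak bandwidth in GB/s for a given bus width and data rate."""
    bytes_per_transfer = bus_width_bits / 8
    # MT/s times bytes per transfer gives MB/s; divide by 1000 for GB/s.
    return bytes_per_transfer * data_rate_mts / 1000


# Bus widths come from the post above; data rates are assumed for illustration.
configs = {
    "128-bit (standard laptop/desktop)": (128, 8000),
    "192-bit (Snapdragon X2 Elite)": (192, 9523),
    "256-bit (GB10/N1, Strix Halo)": (256, 8000),
}

for name, (width, rate) in configs.items():
    print(f"{name}: {peak_bandwidth_gbs(width, rate):.1f} GB/s")
```

Under these assumed rates, a faster 192-bit interface lands much closer to a 256-bit one than the width alone would suggest, which is the point the post makes about higher-speed LPDDR5X.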

abufrejoval I guess if you're Nvidia you can afford to delay gen1 for a few years and then announce gen2 for just a year later… But who'd buy a gen1 product when it's bound to be obsolete before it's dry? I know they have to show a roadmap and their ability to execute on it, but this isn't inclining me one bit towards Nvidia for an ARM laptop… unless they get the Linux part perfect, which should be a given. Somehow I fear it won't be on consumer hardware.

ezst036 hwertz said: And more importantly it should have good Linux on ARM support.

Almost certainly, no. Nvidia's biggest problem here is its graphics driver, which is known to be highly unstable in Wayland sessions. Nvidia doesn't bother much with QA/QC on the Wayland side and it shows. They'll get the performance up and then, oh, you're having graphical artifacting? We'll get back to you. Monitor shuts off? We'll get back to you. Sound stopped? Let's have a chat two years from now, pal. All sorts of weird and entirely random stuff happens, random lockups and so on. It doesn't happen on Nvidia/Xorg and it doesn't happen anywhere on Intel or AMD based systems with open source drivers. Some people incorrectly assume this behavior is because Wayland is still untested, but that's not it. It's Nvidia's driver. This driver is terrible on the Wayland side.

If there was any interest in Linux gaming on ARM from Nvidia, they could have (or should have) been beefing up their GPU drivers, and it would've been a huge talking point for them, since it is a fact that Linux is now a fast-growing gaming platform. Poor stability on x86 Nvidia and Wayland means Nvidia isn't going to bother fixing ARM on Wayland in their buggy graphics driver for such a niche use case.

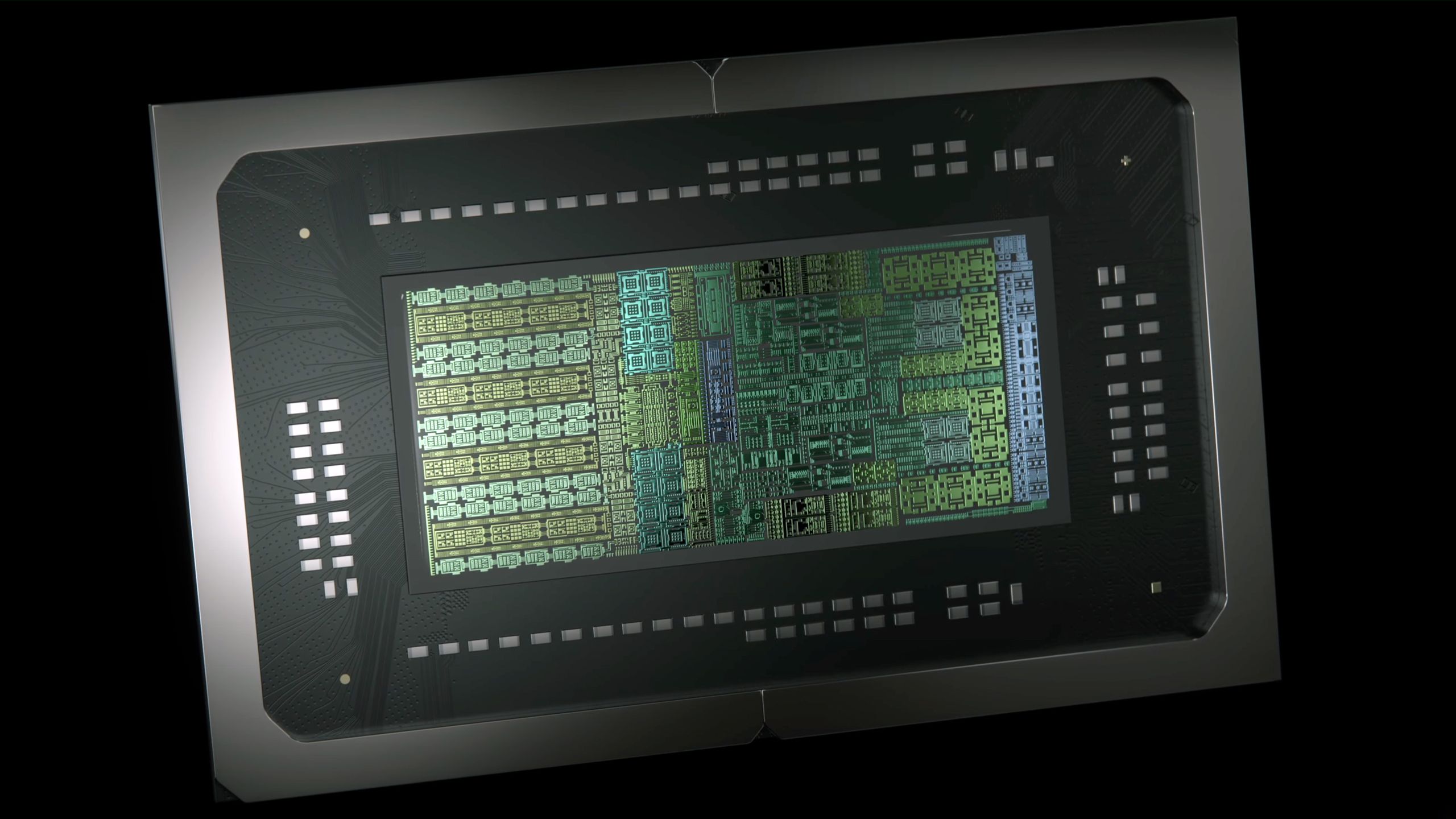

bit_user Here's how I figure these GPUs compare on paper. Note that I used the RTX 5070 as a stand-in for the GB10. Take the pixels/c and texels/c specs for AMD and Nvidia with a grain of salt, since I based them on the per-second specs and I'm just assuming they were computed against the boost clocks.

| GPU | FP32 ALUs | Texels/cycle | Pixels/cycle |
|---|---|---|---|
| Adreno X2 | 2048 | 128 | 64 |
| Radeon 8060S | 2560 | 160 | 64 |
| RTX 5070 | 6144 | 171 | 78 |
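The per-cycle conversion described in this post can be sketched as a simple division of a per-second fill rate by the clock it was presumably measured at. The helper name `per_cycle` and the example inputs (200 Gpixels/s at a 2.5 GHz boost clock) are hypothetical and only show the arithmetic; they are not the specs of any GPU in the table.

```python
def per_cycle(rate_giga_per_s: float, boost_clock_ghz: float) -> float:
    """Convert a per-second rate (Gtexels/s or Gpixels/s) to a per-cycle figure.

    Assumes, as the post does, that the published per-second spec was
    computed against the boost clock.
    """
    if boost_clock_ghz <= 0:
        raise ValueError("boost clock must be positive")
    return rate_giga_per_s / boost_clock_ghz


# Hypothetical inputs, chosen only to illustrate the calculation.
pixels_per_cycle = per_cycle(200.0, 2.5)
print(f"{pixels_per_cycle:.0f} pixels/cycle")
```

If the vendor actually computed its per-second figure against a different clock (base or typical game clock, say), the per-cycle result shifts accordingly, which is why the post advises a grain of salt.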

abufrejoval bit_user said: And if that weren't enough, it will feature a rather large chunk of SRAM. As large iGPUs tend to be rather bottlenecked by memory bandwidth, these changes could result in a decent step up, for the X2. You can see more published details of the X2's GPU (and the rest of it), here: https://chipsandcheese.com/p/qualcomms-snapdragon-x2-elite

That caught my attention, too: a full frame buffer in on-die SRAM! That ought to do a lot of good for gaming performance… if you can actually hide that from the game designers via the engine. And that's where I'd be sceptical, at least until proven otherwise, that you'd be able to hide this pretty fundamental paradigm difference vs. non-tiled GPU architectures. And even if a game engine supports it at the low level but requires adjusting the game at a high level to take advantage of it, I'm not very hopeful it would find a lot of adopters, just judging by the various DLSS alternatives that see little use. And that's a very small change in comparison.

bit_user said: There needs to be a market, in order for such products to make sense. Until a market gets established for ARM-based gaming laptops, I think you won't see any with dGPUs.

The technical hurdles for dGPU support on ARM are rather low, but I agree that it makes very little sense commercially. You aim for ARM to get battery run-time, and dGPUs are at best about luggable workstations, where ARM doesn't have an inherent advantage yet; not sure how much Nvidia wants to get it there. Of course, with a modular setup like a Framework laptop, that should just be a matter of picking the parts…

bit_user abufrejoval said: I guess if you're Nvidia you can afford to delay gen1 for a few years and then announce gen2 for just a year later…

It's only launching 3 quarters after initially rumored. The second gen is launching about 6 quarters after that. So, a 1.5 year gap, instead of >= 2. Intel often launches new laptop CPUs on an annual cadence. I don't hear you complaining about that!

abufrejoval said: But who'd buy a gen1 product when it's bound to be obsolete before it's dry?

Well, if it's a more finished product than the Snapdragon X laptops were at launch, then anyone who needs a new laptop this year would be a good candidate. It's usually the case with tech products that something better will come along if you can afford to wait. Nothing unusual in that regard.
