The AI HAT+ 2 did a decent job of writing quick and concise code, but it was doomed to failure: it never called an application to open the image. Instead, it read the image file and then closed it.

```python
import os

def display_image(image_path):
    try:
        # Open the image file in read mode.
        img = open(image_path, 'r')
        img.seek(0)
        img.read()
        img.close()
    except IOError:
        print('Image file not found:', image_path)

image_path = input('Enter the path to image file: ')

display_image(image_path)
```

The Arm CPU response looked decent, but on closer inspection it was full of errors in its use of the imported tkinter module. It calls `Image.open` even though Pillow's `Image` module is never imported, and it calls `root.mainloop()` without ever creating `root`. This vibe coding experience produced a long-winded response, but ultimately it was way off the mark and not something we would rely on as a coding co-pilot or a sounding board for a project.

```python
import os
from tkinter import *

# Ask user to enter path to image file
path = input("Enter absolute path to image file: ")

# Load image from disk (assuming this is a JPEG)
img = Image.open(path)

# Resize image if needed
width = 1000
height = img.height / img.width * width
img = img.resize((width, height))

# Create new canvas for the resized image
canvas = Canvas(width=500, height=300, bg="white")
canvas.pack()

# Draw image on canvas and place it in center
image_data = ImageData(img)
canvas.create_image(250, 150, anchor=CENTER, data=image_data)
root.mainloop()
```

In our tests, the AI HAT+ 2 was faster than the Raspberry Pi 5’s Arm CPU, but more importantly, it ran the model without hogging the CPU. This is great for those who want to integrate AI into a GPIO-based project, such as robotics. That said, the model produced inaccurate results. In the coding exercise, the code would appear valid to a layman, but it was completely incorrect. If you are looking to run an LLM on a Pi, try the Hailo-compatible models and see which one meets your needs. But be warned: these models were trained on data that is now outdated, and in our limited testing time, we saw only incorrect responses.
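For comparison, below is a minimal sketch of a script that does what both responses appear to have been aiming for. It assumes the prompt asked for a Python script that takes an image path from the user and opens that image in a viewer; the exact prompt is not reproduced here. Rather than reading the bytes itself, it hands the file to the desktop's default image application, assuming a Raspberry Pi OS desktop session where `xdg-open` is available (macOS uses `open`). The function names `viewer_command` and `display_image` are our own illustration, not taken from either response.

```python
import os
import subprocess
import sys


def viewer_command(image_path, platform=sys.platform):
    """Build the command that asks the OS to open image_path in its default viewer.

    Assumption: a Linux desktop with xdg-open (e.g. Raspberry Pi OS) or macOS.
    """
    if platform.startswith("linux"):
        return ["xdg-open", image_path]
    if platform == "darwin":
        return ["open", image_path]
    raise NotImplementedError(f"No viewer command configured for {platform!r}")


def display_image(image_path, platform=sys.platform):
    """Launch the default image viewer; return False if the file is missing."""
    if not os.path.isfile(image_path):
        print("Image file not found:", image_path)
        return False
    # Popen returns immediately, so the viewer runs as a separate process
    # and this script does not wait for the viewer window to close.
    subprocess.Popen(viewer_command(image_path, platform))
    return True
```

On the Pi, calling `display_image(input("Enter the path to image file: "))` reproduces the interactive behavior that both responses attempted. Keeping the command builder separate from the launch makes the platform logic easy to check without opening a window.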

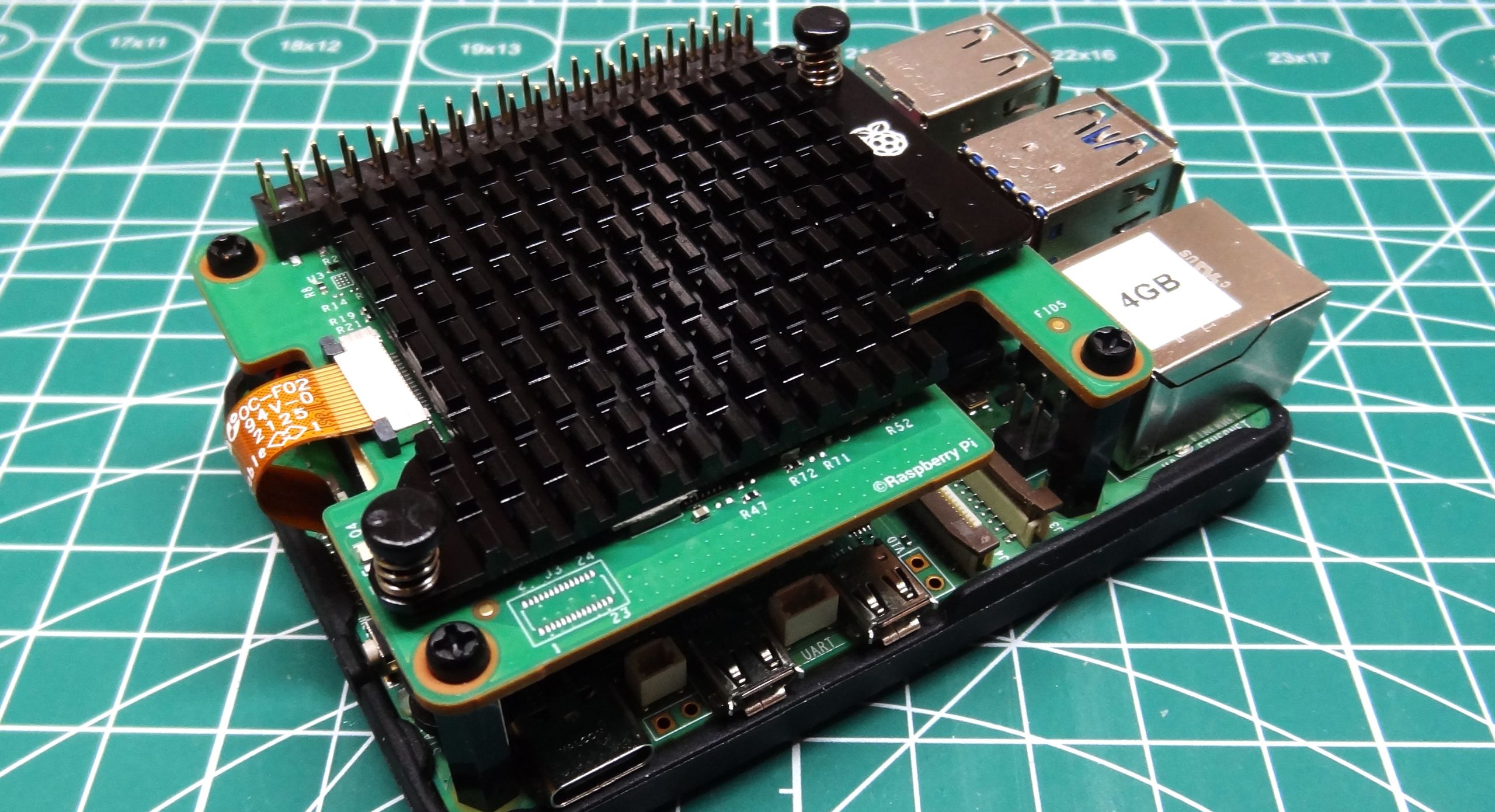

Obviously, the AI HAT+ 2 is for someone who wants to use AI on a Raspberry Pi, but what type of AI? Offloading the workload from the Arm CPU to the Hailo-10H frees up the CPU for other tasks, such as running a chat server, controlling a robot, or reacting to sensors. So those of us who like to build smart GPIO projects will have a field day with the AI HAT+ 2.

If you are just interested in image or vision-based AI projects, the older AI HAT+, the Raspberry Pi AI Camera, or the original M.2 AI Kit are all cheaper, viable options. If you already have any of these products, stick with them, as right now the AI HAT+ 2 costs more for little to no performance boost. If you haven’t got any AI HATs or want to dabble with LLMs, then the AI HAT+ 2 is a viable, if currently flawed, option. Personally, we would run LLMs on the Raspberry Pi 5’s Arm CPU until we have the knowledge and use case to warrant purchasing the AI HAT+ 2.

AI is the buzzword that isn’t going away, and Raspberry Pi’s adoption of AI into its product range is an interesting, if polarizing, decision. The AI HAT+ 2 continues the progression toward more powerful AI platforms, and for the right type of maker, it will be a considered choice. One day. Right now, this is a solution looking for a problem. We're sure the bugs will be worked out, but early adopters will be left wanting more.

Those who just want to dabble with AI on their Raspberry Pi 5 can either use smaller models that fit in its RAM or use an online service. For computer vision and image inference projects, you will get similar performance from a cheaper product, the older AI HAT+ or the Raspberry Pi AI Camera. The AI Camera is a cheap entry point for learners. For those who want a local LLM in a compact and power-efficient package, the Raspberry Pi AI HAT+ 2 is something you should research, after learning the skills and developing the project that it can support. Waiting will also give the software time to mature, and give you time to make sure your wallet is ready.
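As a rough way to judge which smaller models will fit in a Pi 5's RAM, the weights alone take about parameters × bits per weight ÷ 8 bytes. The sketch below applies that rule of thumb. The 2 GiB of headroom for the OS, the KV cache, and the runtime is an arbitrary assumption of ours, the model sizes are illustrative examples rather than specific models, and real quantized files are somewhat larger than this lower bound because they also store scaling factors.

```python
# Rule-of-thumb check: will a model's weights fit in a Pi's RAM?
# ASSUMPTION: 2 GiB of headroom for the OS, KV cache and runtime is a guess,
# and the parameter counts / bit widths below are illustrative examples only.

GIB = 2**30


def weights_gib(params, bits_per_weight):
    """Lower-bound size of the weights in GiB (ignores quantization scales)."""
    return params * bits_per_weight / 8 / GIB


def fits_in_ram(params, bits_per_weight, ram_gib, headroom_gib=2.0):
    """True if the weights plus the assumed headroom fit within ram_gib."""
    return weights_gib(params, bits_per_weight) + headroom_gib <= ram_gib


for params, bits in [(1.5e9, 8), (7e9, 4), (8e9, 16)]:
    size = weights_gib(params, bits)
    print(f"{params / 1e9:.1f}B params @ {bits}-bit: {size:.2f} GiB, "
          f"fits 4 GiB: {fits_in_ram(params, bits, 4)}, "
          f"fits 8 GiB: {fits_in_ram(params, bits, 8)}")
```

Under these assumptions, a 1.5-billion-parameter model at 8-bit fits on a 4 GB board, a 7-billion-parameter model at 4-bit needs the 8 GB board, and an unquantized 8-billion-parameter model fits on neither.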

Les Pounder is an associate editor at Tom's Hardware. He is a creative technologist and for seven years has created projects to educate and inspire minds both young and old. He has worked with the Raspberry Pi Foundation to write and deliver their teacher training program "Picademy".

bit_user: Thanks for looking at accuracy, and not just timing. What's really needed is to run one of the LLM benchmark suites, so that accuracy can be scored over a statistical number of tests. Then, we'd have a rough idea of its relative accuracy vs. the CPU. I think it's incumbent on Raspberry Pi to provide accuracy data for their product running a few models + some script that we can use to try it for ourselves. It's pretty sad that they can get away with releasing such a product without even telling us how accurately it runs certain models (i.e. compared to some baseline hardware & precision). As for the hardware, even the 20 TOPS rate for int8 seems absurd to me, given that it's probably using LPDDR4X at 32-bit. It should be highly bottlenecked on memory bandwidth. A much better use of that DRAM (or hardware budget) would be simply putting it on the Pi 5 base board. BTW, Jeff Geerling ran a few more benchmarks and found the CPU often to outperform the new hat. However, I think he did not attempt to evaluate the accuracy of either's responses. He found some of the vision examples didn't run. Someone in his comments pointed out that the NVIDIA Jetson Orin Nano Super would be a much better choice (although its 8 GB of RAM is shared between CPU & GPU).
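The commenter's memory-bandwidth argument can be made concrete with a back-of-envelope calculation. The figures below are assumptions for illustration, not measurements of the AI HAT+ 2: LPDDR4X at 4266 MT/s on a 32-bit bus, a hypothetical 1.5-billion-parameter int8 model, and batch-1 token generation, in which every weight is read from DRAM once per token and used for one multiply-accumulate (two operations). KV-cache traffic and on-chip caching are ignored.

```python
# Back-of-envelope check of the memory-bandwidth argument.
# ASSUMPTIONS (not from the review): LPDDR4X at 4266 MT/s on a 32-bit bus,
# a hypothetical 1.5-billion-parameter model stored as int8 (1 byte/weight),
# batch-1 decoding where each weight is read once per token and used for
# one multiply-accumulate (2 ops). KV-cache traffic is ignored.

PEAK_INT8_OPS = 20e12  # the 20 TOPS figure quoted in the comment


def peak_bandwidth_bytes_per_s(transfers_per_s, bus_width_bits):
    """Peak DRAM bandwidth = transfer rate x bus width in bytes."""
    return transfers_per_s * bus_width_bits / 8


def decode_tokens_per_s_ceiling(bandwidth, model_bytes):
    """Upper bound on tokens/s when every token must stream all weights."""
    return bandwidth / model_bytes


def memory_fed_ops_per_s(bandwidth, ops_per_byte):
    """Compute rate that memory can sustain at a given arithmetic intensity."""
    return bandwidth * ops_per_byte


bw = peak_bandwidth_bytes_per_s(4266e6, 32)     # 17.064e9 bytes/s
tok_s = decode_tokens_per_s_ceiling(bw, 1.5e9)  # about 11.376 tokens/s
ops = memory_fed_ops_per_s(bw, 2)               # 34.128e9 ops/s
utilization = ops / PEAK_INT8_OPS               # about 0.0017

print(f"Peak bandwidth:      {bw / 1e9:.1f} GB/s")
print(f"Decode ceiling:      {tok_s:.1f} tokens/s")
print(f"Memory-fed compute:  {ops / 1e9:.1f} GOPS ({utilization:.2%} of 20 TOPS)")
```

Under those assumptions, memory can feed only a fraction of a percent of the quoted int8 peak during token generation, which is consistent with the commenter's point. Prompt processing reuses each weight across many tokens, so it is far less bandwidth-bound.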
