Luke James is a freelance writer and journalist. Although his background is in legal, he has a personal interest in all things tech, especially hardware and microelectronics, and anything regulatory.

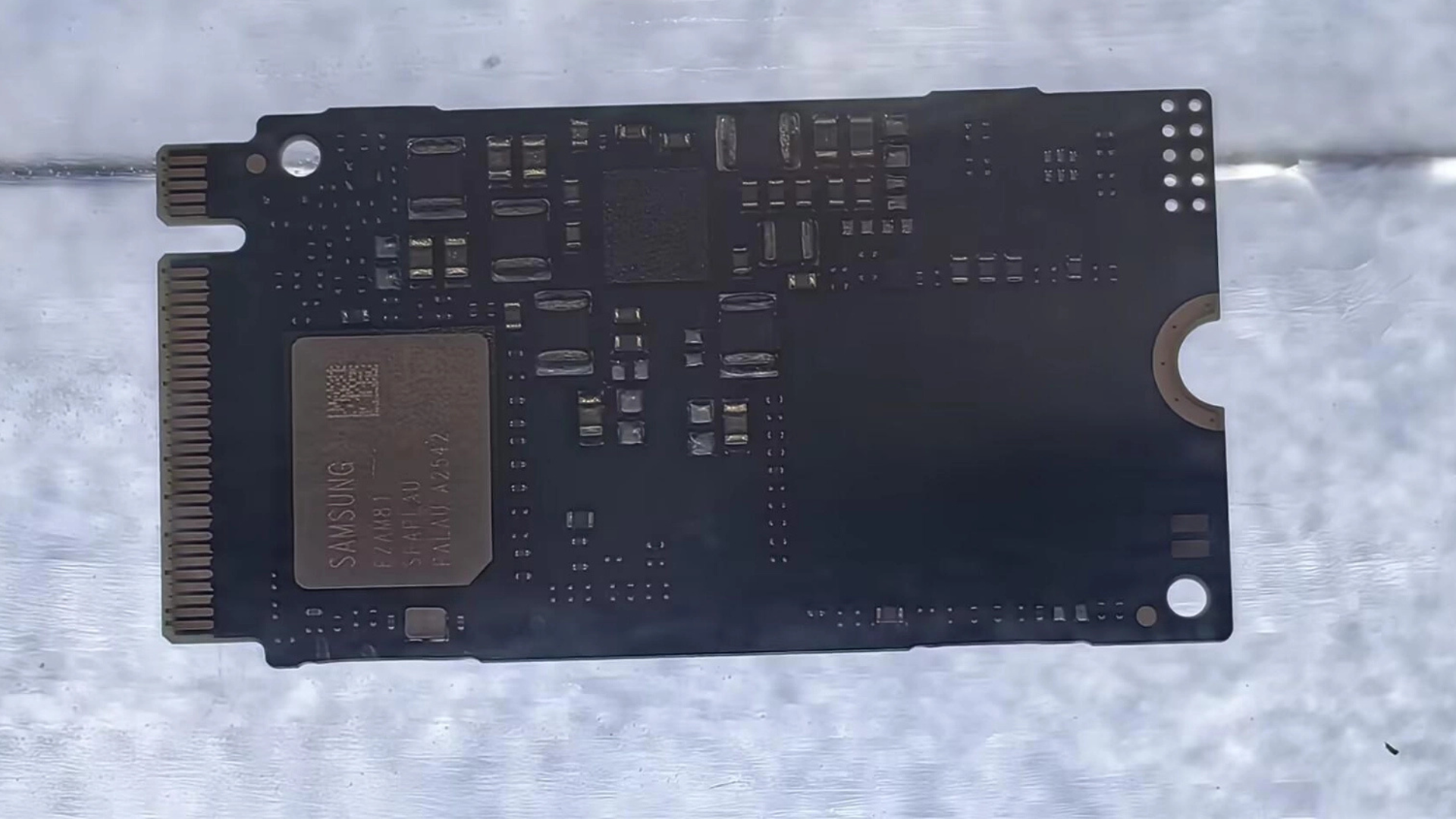

Kindaian: Sorry but that has nothing to do with AI. It's just a plain SSD of generation 5. Market-speak abuse…

thisisaname: "Samsung plans to bring the BM9K1 to market in 2027, but hasn’t disclosed any information on pricing or form factor." I am quite sure no one has any idea what pricing on storage is going to be at the end of 2026, let alone some time in 2027.

qxp: Kindaian said: "Sorry but that has nothing to do with AI. It's just a plain SSD of generation 5. Market-speak abuse…" I think what they mean is that if you memory-map AI models stored on it, you will have faster data access than on the previous model. I.e. it has higher read bandwidth than before, hopefully random access.

usertests: Kindaian said: "Sorry but that has nothing to do with AI. It's just a plain SSD of generation 5. Market-speak abuse…" Are they lying? The company said the RISC-V design enables more granular firmware optimization for QLC NAND management and AI-specific I/O patterns, resulting in a 23% improvement in energy efficiency over the BM9C1.

derekullo: qxp said: "I think what they mean is that if you memory-map AI models stored on it, you will have faster data access than on the previous model. I.e. it has higher read bandwidth than before, hopefully random access." Random access doesn't really matter in this context for the storage device. Let's say you had a GeForce 5090, a RAID 1 of two 16-terabyte SATA hard drives, and a 20-gigabyte model on that RAID. Two SATA drives in RAID 1 might give you a read speed of 300 megabytes per second. With a 20-gigabyte model, it would take around 66 seconds to load the model into the GeForce 5090's VRAM. Once the model is loaded into VRAM, or normal RAM if you don't want to use your GPU, the hard drives aren't used anymore until you want to load another model. If for whatever reason you switch models often, then with a drive rated for super high read performance, 11.4 gigabytes per second easily qualifying, that same 20-gigabyte model takes about 2-3 seconds to load.
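The arithmetic in this comment can be checked with a minimal sketch. It assumes an idealized sequential load where time is simply model size divided by sustained read bandwidth. The function name `load_time_seconds` is hypothetical, and the figures are the ones quoted in the comment (300 MB/s for the hard drive pair, 11.4 GB/s for the SSD); real loads add filesystem, PCIe transfer, and framework overhead.

```python
def load_time_seconds(model_gb: float, read_gb_per_s: float) -> float:
    """Idealized load time: model size divided by sustained read bandwidth."""
    if read_gb_per_s <= 0:
        raise ValueError("read bandwidth must be positive")
    return model_gb / read_gb_per_s


MODEL_GB = 20.0  # model size quoted in the comment

# 300 MB/s expressed in GB/s (decimal units, as drive vendors rate them)
hdd_seconds = load_time_seconds(MODEL_GB, 0.3)
# 11.4 GB/s is the rated sequential read figure quoted in the comment
ssd_seconds = load_time_seconds(MODEL_GB, 11.4)

print(f"SATA RAID 1 at 300 MB/s: {hdd_seconds:.1f} s")
print(f"SSD at 11.4 GB/s:        {ssd_seconds:.1f} s")
```

Under these assumptions the hard drive pair comes out near 67 seconds and the SSD just under 2 seconds, so the comment's "2-3 seconds" is plausible once real-world overhead is included.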

derekullo: usertests said: "Are they lying?" AI-specific I/O patterns quite literally means sequential reads … at least for loading the model into whatever type of RAM you are using. In that way it is a little bit of market-speak abuse. In the same way, it could also be advertised as Optimized For Loading 40 Gigabyte 4K MKVs.

bit_user: derekullo said: "Random access doesn't really matter in this context for the storage device." I think they're talking about models that are too big to fit in whatever RAM you have. With at least some LLMs, I believe you don't need to read all of the weights for every inference. derekullo said: "AI-specific I/O patterns quite literally means sequential reads … at least for loading the model into whatever type of RAM you are using." According to what I said, it could actually involve lots of small random reads. How RISC-V might play into that is by allowing them to add specialized instructions to accelerate block error correction, since a random access pattern will be touching a lot more blocks.

qxp: derekullo said: "Random access doesn't really matter in this context for the storage device. Let's say you had a GeForce 5090, a RAID 1 of two 16-terabyte SATA hard drives, and a 20-gigabyte model on that RAID. Two SATA drives in RAID 1 might give you a read speed of 300 megabytes per second. With a 20-gigabyte model, it would take around 66 seconds to load the model into the GeForce 5090's VRAM. Once the model is loaded into VRAM, or normal RAM if you don't want to use your GPU, the hard drives aren't used anymore until you want to load another model. If for whatever reason you switch models often, then with a drive rated for super high read performance, 11.4 gigabytes per second easily qualifying, that same 20-gigabyte model takes about 2-3 seconds to load." If you are using llama.cpp, for example, it memory-maps model weights so that you can run a 70 GB model on a computer with only 32 GB of RAM. In that case, solid state drive bandwidth is rather important.
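The memory-mapping idea in this comment can be illustrated with a small sketch using Python's standard `mmap` module. This is not llama.cpp's code. The helper `read_slice` and the temporary stand-in "weights" file are hypothetical, and the example only shows the general mechanism: the file is mapped into the address space and the operating system pages in only the regions that are actually touched.

```python
import mmap
import os
import tempfile


def read_slice(path: str, offset: int, length: int) -> bytes:
    """Return `length` bytes starting at `offset` through a read-only memory map.

    Only the pages backing the requested range need to be fetched from storage.
    """
    with open(path, "rb") as f:
        with mmap.mmap(f.fileno(), 0, access=mmap.ACCESS_READ) as mm:
            return mm[offset:offset + length]


# Hypothetical stand-in for a weights file: 1 MiB of a repeating byte pattern.
with tempfile.NamedTemporaryFile(delete=False) as tmp:
    tmp.write(bytes(range(256)) * 4096)
    weights_path = tmp.name

# Touch a small region in the middle of the file without reading the rest.
chunk = read_slice(weights_path, offset=512 * 1024, length=8)
print(chunk)

os.remove(weights_path)
```

When the mapped file is larger than available RAM, the operating system has to evict and re-read pages as different regions are accessed, which is why drive read bandwidth and random-read performance matter in the scenario described here.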
