According to filings, SK hynix plans to operate a full mass-production line at the site, supported by a dedicated talent pipeline from Purdue. That puts it in direct competition with TSMC’s CoWoS platform, which has been the de facto standard for high-end HBM packaging since Nvidia’s Pascal era. And with TSMC’s CoWoS capacity effectively sold out through 2027, customers are already searching for alternatives.

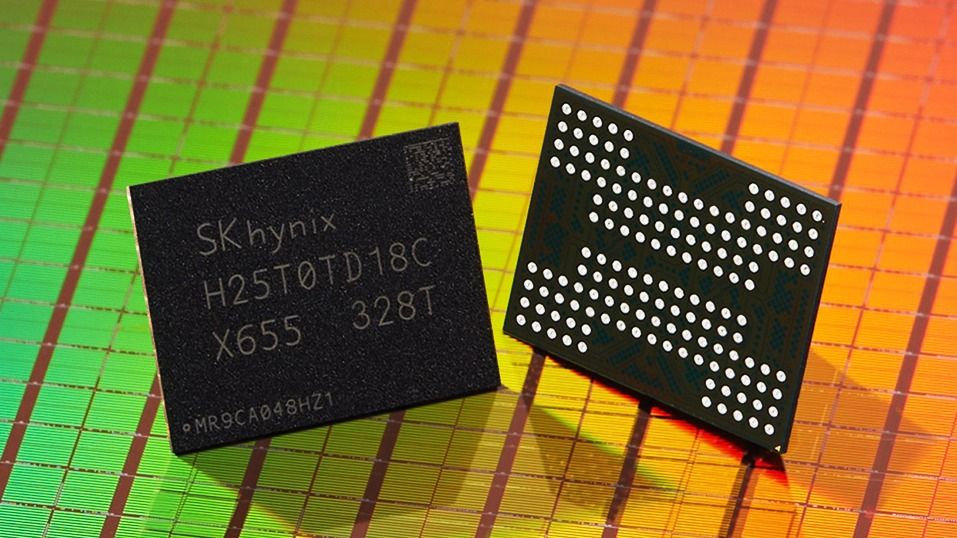

The hardest part of HBM is the packaging. HBM stacks multiple memory dies vertically using through-silicon vias (TSVs), and the finished stacks must be mounted on a large interposer next to a host processor. That assembly must account for thermal expansion, routing complexity, and thousands of microbumps. The result is a tightly coupled chiplet module with massive I/O bandwidth and low power draw, ideal for AI training or HPC workloads.

Until now, HBM suppliers like SK hynix and Samsung have typically sold raw memory stacks, leaving GPU vendors to rely on foundry partners for packaging. Nvidia’s H100 and AMD’s MI300X, for instance, use HBM2e and HBM3 mounted via TSMC’s CoWoS process. But with demand for accelerators reaching historic highs, and HBM4 promising even more aggressive stack designs, in-house packaging has become a priority.

SK hynix’s stated goal is to deliver a “turnkey” solution: HBM stacks already integrated with silicon interposers and, potentially, host dies from customers. That would allow hyperscalers or chip designers to skip TSMC entirely for final assembly, receiving ready-to-mount modules instead. It’s a fundamental shift in how HBM enters the supply chain, positioning SK hynix as a full-stack supplier rather than a component vendor.

This type of setup already has some precedent. TSMC has steadily expanded its role from foundry to integrator over the past decade, using its packaging platforms (CoWoS, InFO, SoIC) to create customer lock-in beyond wafer fabrication. SK hynix has obviously drawn inspiration from that playbook, starting from memory and working outward. It also places pressure on Samsung, which is reportedly evaluating its own U.S. packaging line to support future Tesla and AMD accelerator deployments.

The timing of all this is no accident. The HBM market is projected to be worth tens of billions of dollars by 2030, driven by AI model scaling and architectural shifts toward high-bandwidth chiplets. Nvidia’s Rubin platform, expected to launch in late 2026, will reportedly use HBM4E stacks with bandwidth over 1.2TB/s per module. That’s not achievable with standard memory interfaces or traditional DRAM. If SK hynix can offer a high-yield, pre-packaged solution at scale, it could become indispensable to Nvidia, AMD, and a whole host of other companies.
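To see why a figure like 1.2TB/s per module depends on a very wide interface, it helps to run the arithmetic. The sketch below is illustrative only: the function names are hypothetical, and the interface widths are assumptions rather than figures from the filings (64 bits for a conventional DRAM module, 1,024 bits for an HBM3-class stack, 2,048 bits for an HBM4-class stack). Peak bandwidth is simply interface width times per-pin data rate, divided by eight to convert bits to bytes.

```python
# Rough peak-bandwidth arithmetic for a single memory stack or module.
# All interface widths below are assumptions for illustration, not
# figures taken from the article or from SK hynix's filings.

def stack_bandwidth_gbs(interface_bits: int, pin_rate_gbps: float) -> float:
    """Peak bandwidth in GB/s: width (bits) * per-pin rate (Gb/s) / 8."""
    return interface_bits * pin_rate_gbps / 8


def required_pin_rate_gbps(target_gbs: float, interface_bits: int) -> float:
    """Per-pin rate in Gb/s needed to reach target_gbs on a given width."""
    return target_gbs * 8 / interface_bits


TARGET_GBS = 1200.0  # the 1.2TB/s per-module figure quoted above

# Assumed widths: conventional module, HBM3-class stack, HBM4-class stack.
for label, bits in [("64-bit module", 64),
                    ("1,024-bit stack", 1024),
                    ("2,048-bit stack", 2048)]:
    rate = required_pin_rate_gbps(TARGET_GBS, bits)
    print(f"{label}: {rate:.2f} Gb/s per pin needed for 1.2TB/s")
```

Under these assumptions, a 64-bit interface would need an impractical 150 Gb/s on every pin, while a 2,048-bit stack needs under 5 Gb/s. Routing thousands of those signals over a few millimeters is the job the interposer does, which is why packaging sits at the center of the HBM business.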

Advanced packaging has become a national security and industrial policy priority, and Washington is funding it accordingly. In 2023, under the Biden administration, the Commerce Department’s CHIPS for America program set aside about $3 billion for the National Advanced Packaging Manufacturing Program to expand U.S. advanced packaging R&D and manufacturing capacity. The program remains in place under the incumbent Trump administration.

