Luke James is a freelance writer and journalist. Although his background is in legal, he has a personal interest in all things tech, especially hardware and microelectronics, and anything regulatory.

IntelUser2000

GPU based on open source? I'm all for it.

But don't expect a 12nm chip to be significantly faster if it moves to, say, 5nm. When people say Moore's Law is dying, they mean that the traditional era of just porting to a new node and benefiting is gone. It requires significant work to get the full advantages of a new node. Nvidia and AMD have already done the hard work and know this. It's very unlikely a startup can take full advantage of the newer nodes like established players do.

Even if you have a potential winner, the actual winner is determined by the details, such as optimizing and fine tuning the thing. And of course timing is important, as fine tuning and optimization is potentially half the work and can throw off your schedule. Oftentimes the "traditional" ones continue on because they keep making new products like clockwork, whereas a startup's advantages are lost due to delays, which Bolt Graphics is already suffering with the delay to 2027.

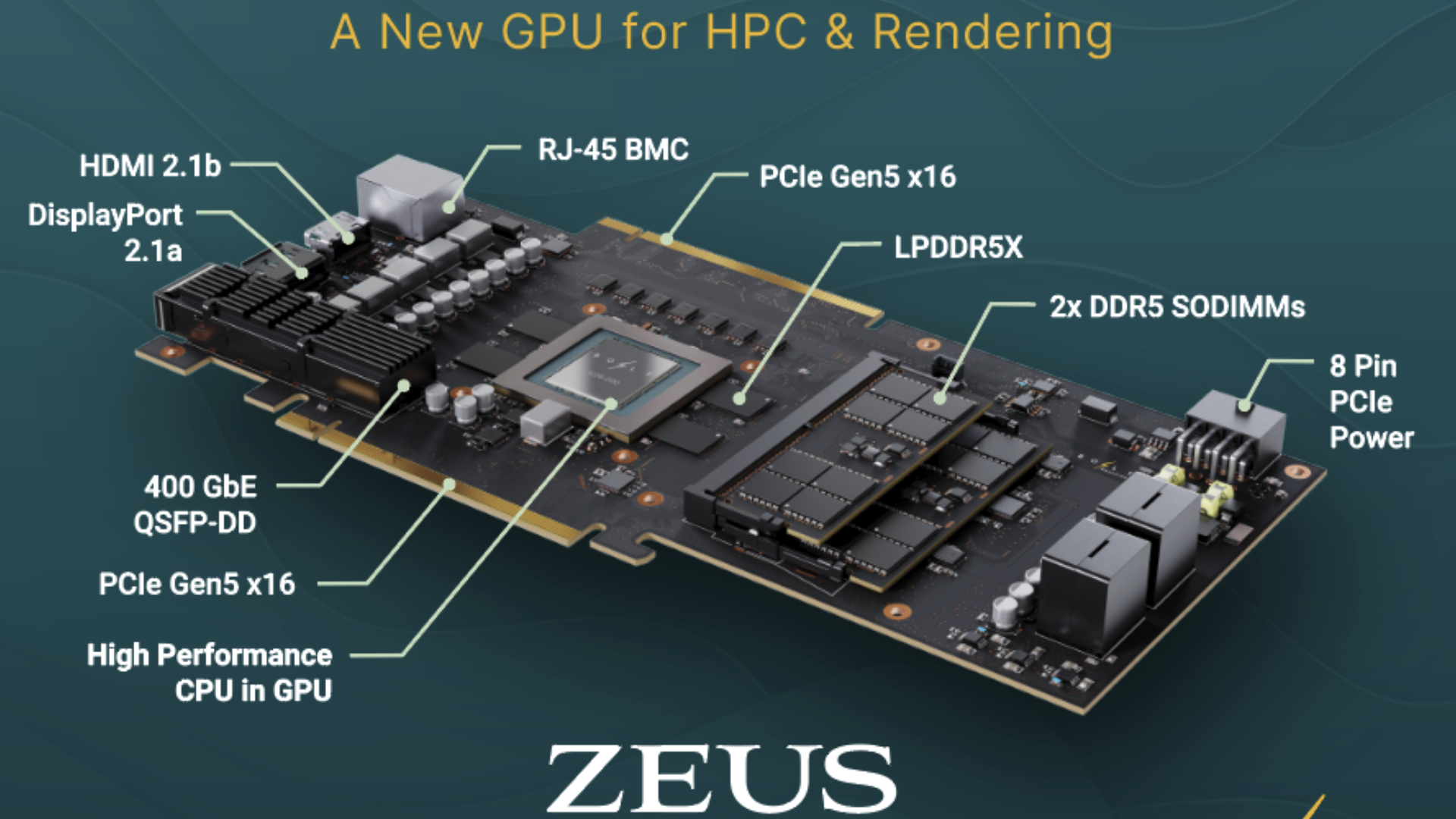

bit_user

The article seems to lack a source link. I'm guessing it's based on this press release: https://www.prnewswire.com/news-releases/bolt-graphics-completes-tape-out-of-test-chip-for-its-high-performance-zeus-gpu-a-major-milestone-in-reducing-computing-costs-by-17x-302750442.html

IntelUser2000 said: GPU based on open source? I'm all for it.

Huh? Specifically what is the "open source" claim and where did you see it?

IntelUser2000 said: But don't expect a 12nm chip to be significantly faster if it moves to say 5nm.

Nvidia's RTX 2000 was built on a 12nm node; RTX 4000 was built on an N5-family node. Want to guess which one is faster? It's obviously not going to be the exact same chip on N5 that they're taping out on 12 nm. The 5 nm version will certainly be scaled up and running at higher clock speeds.

IntelUser2000 said: Even if you have a potential winner, the actual winner is determined by the details, such as optimizing and fine tuning the thing. And of course timing is important as fine tuning and optimization is potentially half the work, and can throw off your schedule. Oftentimes the "traditional" ones continue on because they keep making new products like clockwork, whereas a startup's advantages are lost due to delays, which Bolt Graphics is already suffering with the delay to 2027.

Yeah, time-to-market is a serial killer of promising chip startups. By the time Bolt gets a chip to market that's on a remotely competitive node, they'll have missed their market window. Even if their current tech is competitive against an RTX 5090, their final production silicon will have to face an RTX 6090 (or later). Same story repeats itself over and over. That's why it's so hard to displace the big CPU and GPU makers.

thestryker

bit_user said: Yeah, time-to-market is a serial killer of promising chip startups. By the time Bolt gets a chip to market that's on a remotely competitive node, they'll have missed their market window. Even if their current tech is competitive against an RTX 5090, their final production silicon will have to face an RTX 6090 (or later).

Honestly they're fine (with this timeline) for the market they're aiming at. There's no chance Nvidia is going to design anything to compete as long as the AI money is flowing. I think the biggest problem they're facing (at least in the non-HPC markets) is going to be the software side of things. For example, with rendering it wouldn't matter if they were double the speed of whatever is released at the time without rock solid software.

None of this is to say that sooner isn't better, because it absolutely is, just that their niche is pretty safe as long as they don't stumble.

bit_user

thestryker said: Honestly they're fine for the market they're aiming at. There's no chance Nvidia is going to design anything to compete as long as the AI money is flowing.

Nvidia will eventually release RTX 6090 (and the corresponding workstation cards). Contrary to popular belief, Nvidia hasn't stopped development on non-AI software. Last year, they updated their non-realtime raytracing library to take advantage of the Shader Execution Reordering (SER) in the RTX 5090: https://developer.nvidia.com/rtx/ray-tracing/optix

thestryker

bit_user said: Nvidia will eventually release RTX 6090 (and the corresponding workstation cards).

Do you really think the 6090 is going to have a minimum of double the RT capability of the 5090? I sure don't. There are too many other things that Nvidia needs their parts to do that Bolt isn't even looking at.

bit_user

thestryker said: Do you really think the 6090 is going to have a minimum double the RT capability of the 5090? I sure don't.

Sure, I could see them pulling out another 2x in RT performance, between efficiency enhancements, frequency, and more SMs. Don't forget that they'll be on a new node. Also, they're continuing to enhance ReSTIR: https://research.nvidia.com/labs/rtr/publication/lin2026restirptenhanced/

thestryker said: There's too many other things that nvidia needs their parts to do that Bolt isn't even looking at.

They're a big company. They can walk and chew gum at the same time. Now that they have Groq, they aren't as dependent on their client GPUs for inference acceleration. https://www.nvidia.com/en-us/data-center/lpx/

thestryker

bit_user said: Also, they're continuing to enhance ReSTIR

Yeah, I know. That's why I said 2x instead of more.

bit_user said: Sure, I could see they pulling out another 2x in RT performance, between efficiency enhancements, frequency, and more SMs. Don't forget that they'll be on a new node.

That would be a first since Turing to Ampere, but I like your optimism.

bit_user

thestryker said: That would be a first since Turing to Ampere, but I like your optimism.

No, both Ada and Blackwell had further improvements to the RT cores. That's why Nvidia still leads on RT performance, in spite of all RDNA4's improvements here. I'm sure they're not done yet.

thestryker

bit_user said: No, both Ada and Blackwell had further improvements to the RT cores.

Of course they did, and I didn't say any different. I said they didn't improve them as much as that first gen on gen.

If you look at relative performance in games, the 3090 Ti sits between the 4070 Ti and 4070 Ti Super in raster/RT/PT and has ~1/3 more RT cores. It's somewhat hard to mix the 50 series into that comparison because the 5070 is slower and has even fewer RT cores, and the 5070 Ti is a much faster card. Comparing the 4080 and 5070 Ti, though, has the two very close in raster, and the gap is slightly larger in RT/PT but close enough that I think it's fair to call it the same there too. The 4080 has ~8.5% more RT cores, so there's definitely an improvement on the 50 series side, but it seems lower than the 30 to 40 series was.

I wish there were detailed numbers for the 2080 Ti to look through, but I only know of TPU having tested it and they don't seem to have updated it since the 5090 launch (no PT tests). The percentage performance in that review versus the 3070 (have to use 1080p only here due to VRAM) is fairly similar across the board, but the 2080 Ti has just under 50% more RT cores.

I don't think there's any question as to whether or not Nvidia has the best RT hardware. They would just need to dedicate more silicon to it if this was something they wanted to compete with, and I don't think Bolt is enough of a threat even if they hit their launch window. I would bet that if they do hit their milestones and are able to deliver this level of performance, Feynman would probably address it (I'm assuming the 60 series will be Rubin based), though.
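The per-core argument in the comment above can be sketched numerically. This is a back-of-the-envelope illustration, not a benchmark: it assumes each pair of cards is exactly tied in RT performance, takes the RT core ratios as quoted in the comment (reading "just under 50%" as 1.48 is an assumption), and ignores clock speed, memory bandwidth and everything else that differs between generations. The function name `implied_per_core_uplift` is hypothetical.

```python
# Rough sketch: if an older card with more RT cores ties a newer card with
# fewer, each newer RT core must be doing proportionally more work.
# All ratios below are approximations taken from the forum comment; the
# "tied performance" default is a simplifying assumption.


def implied_per_core_uplift(older_to_newer_core_ratio: float,
                            perf_ratio: float = 1.0) -> float:
    """Return the fractional gain in work done per RT core.

    older_to_newer_core_ratio: RT cores on the older card / newer card.
    perf_ratio: newer card performance / older card performance (1.0 = tie).
    """
    return older_to_newer_core_ratio * perf_ratio - 1.0


comparisons = {
    # "just under 50% more RT cores" (1.48 is an assumed reading of that)
    "20 -> 30 series (2080 Ti vs 3070)": 1.48,
    # "~1/3 more RT cores"
    "30 -> 40 series (3090 Ti vs 4070 Ti class)": 4 / 3,
    # "~8.5% more RT cores"
    "40 -> 50 series (4080 vs 5070 Ti)": 1.085,
}

for label, ratio in comparisons.items():
    uplift = implied_per_core_uplift(ratio)
    print(f"{label}: roughly {uplift:.1%} more work per RT core")
```

Under those assumptions the implied per-core gain shrinks with each generation, which is the trend the comment describes. Higher clocks on newer nodes account for part of each gain, so these figures say nothing about the RT cores in isolation.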

bit_user

thestryker said: I don't think Bolt is enough of a threat even if they hit their launch window. I would bet that if they do hit their milestones and are able to deliver this level of performance that Feynman would probably address it (I'm assuming 60 series will be Rubin based) though.

Bolt is definitely not on their radar. The main reason to further increase their RT capability would be simply to continue their push into the realm of Path Tracing and further distance themselves from AMD and Intel. Perhaps also to give owners of previous generation NV hardware more reasons to upgrade.


