For enthusiasts, the two SPEC benchmarks that are thrown around a lot are SPECviewperf for graphics performance and SPEC Workstation, the latter of which we run in our CPU reviews. SPEC CPU is focused on the CPU, but it’s a suite targeted toward servers more than the best CPUs for gaming.

The updated suite includes 52 tests, nine more than SPEC CPU 2017, with more than twice as many lines of code. The suite uses real applications, but those applications are modified in various ways to fit the benchmark suite, and that modification work is a big part of what SPEC does. One of the main focuses, according to SPEC’s technical paper, is ensuring deterministic results from each application, which means removing sources of non-determinism. As an example, the technical paper describes replacing the std::sort function in C++ with std::stable_sort.
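To see why that swap matters, here is a minimal sketch (not code from any SPEC benchmark). The `Record` type and both helper functions are hypothetical names made up for illustration. The C++ standard does not require std::sort to preserve the relative order of elements that compare equal, so two standard library implementations can legitimately produce different orderings; std::stable_sort guarantees that ties keep their input order.

```cpp
#include <algorithm>
#include <string>
#include <vector>

// Hypothetical record type for illustration only.
struct Record {
    int key;          // the only field the comparison looks at
    std::string tag;  // payload that reveals the order of tied elements
};

// Sort by key alone. With std::stable_sort, records that share a key keep
// their original relative order, so the result is the same everywhere.
// std::sort would leave the order of tied records unspecified.
std::vector<Record> sort_by_key(std::vector<Record> records) {
    std::stable_sort(records.begin(), records.end(),
                     [](const Record& a, const Record& b) { return a.key < b.key; });
    return records;
}

// Concatenate the tags so the final ordering is easy to compare.
std::string tags_in_order(const std::vector<Record>& records) {
    std::string out;
    for (const Record& r : records) out += r.tag;
    return out;
}
```

Feeding in the records `{2,"a"}, {1,"b"}, {2,"c"}, {1,"d"}` gives the tag order `bdac`: the two key-1 records come first in their input order, followed by the two key-2 records in theirs.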

“The fundamental goal is to ensure that the benchmark executes an identical amount of user-space work across any compliant system, and produces an identical result on every run within a given tolerance. To achieve this level of rigor, each candidate benchmark undergoes a series of modifications,” the technical paper reads.

In addition to removing non-determinism, SPEC modifies applications to be portable, ensuring everything is written in C, C++, or Fortran, and it focuses on user-space execution. According to the paper, SPEC’s target was at least 95% of execution time happening within the user-space code of the benchmark, minimizing the influence of the operating system.
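As a rough illustration of what a 95% user-space target means, the sketch below splits a process's CPU time into user and system portions using the POSIX `getrusage` call. This is an assumption-laden approximation, not SPEC's methodology: the article does not describe how SPEC measures the split, the helper names are hypothetical, and the code only builds on POSIX systems such as Linux or macOS.

```cpp
#include <sys/resource.h>
#include <sys/time.h>

// Convert a POSIX timeval to seconds.
double to_seconds(const timeval& tv) {
    return static_cast<double>(tv.tv_sec) + static_cast<double>(tv.tv_usec) / 1e6;
}

// Fraction of CPU time spent in user space, given user and system seconds.
// Returns 1.0 when no CPU time has been recorded, to avoid dividing by zero.
double user_fraction(double user_s, double sys_s) {
    const double total = user_s + sys_s;
    return total > 0.0 ? user_s / total : 1.0;
}

// Hypothetical check against the 95% figure quoted from the paper.
bool meets_user_space_target(double user_s, double sys_s, double target = 0.95) {
    return user_fraction(user_s, sys_s) >= target;
}

// User-space fraction of the calling process so far, or -1.0 if the
// measurement itself failed.
double current_process_user_fraction() {
    struct rusage usage{};
    if (getrusage(RUSAGE_SELF, &usage) != 0) return -1.0;
    return user_fraction(to_seconds(usage.ru_utime), to_seconds(usage.ru_stime));
}
```

Under these assumptions, a run with 9.6 seconds of user time and 0.4 seconds of system time would pass the check, while one with 9.0 and 1.0 seconds would not.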

SPEC spent a little over three years (Feb. 2020 to Mar. 2023) gathering candidates for the new suite. It came out with 70 candidate applications, 38 of which made it through SPEC’s CPU committee. Again, determinism made a difference when choosing the applications that made it through, as the committee tried to avoid “minor architectural or compiler differences [that] can lead to ‘short-cuts’ to the solution.” A few specific applications made it deep into evaluation but ultimately weren’t included, the technical paper clarifies.

Key among them were modern AI workloads like llama.cpp and whisper.cpp. The technical paper says “restricting them to portable C++ codepaths (with intrinsics removed) caused a fundamental divergence from their real-world behavior,” ultimately disqualifying them. SPEC also steered clear of the AV1 and Opus codecs to head off any claims of bias, given who sits on the committee: it is made up of representatives from Intel, AMD, IBM, Arm, Nvidia, Dell, HPE, Ampere, and others.

Get Tom's Hardware's best news and in-depth reviews, straight to your inbox.

Key considerations

- Investor positioning can change fast

- Volatility remains possible near catalysts

- Macro rates and liquidity can dominate flows

Reference reading

- https://www.tomshardware.com/pc-components/cpus/SPONSORED_LINK_URL

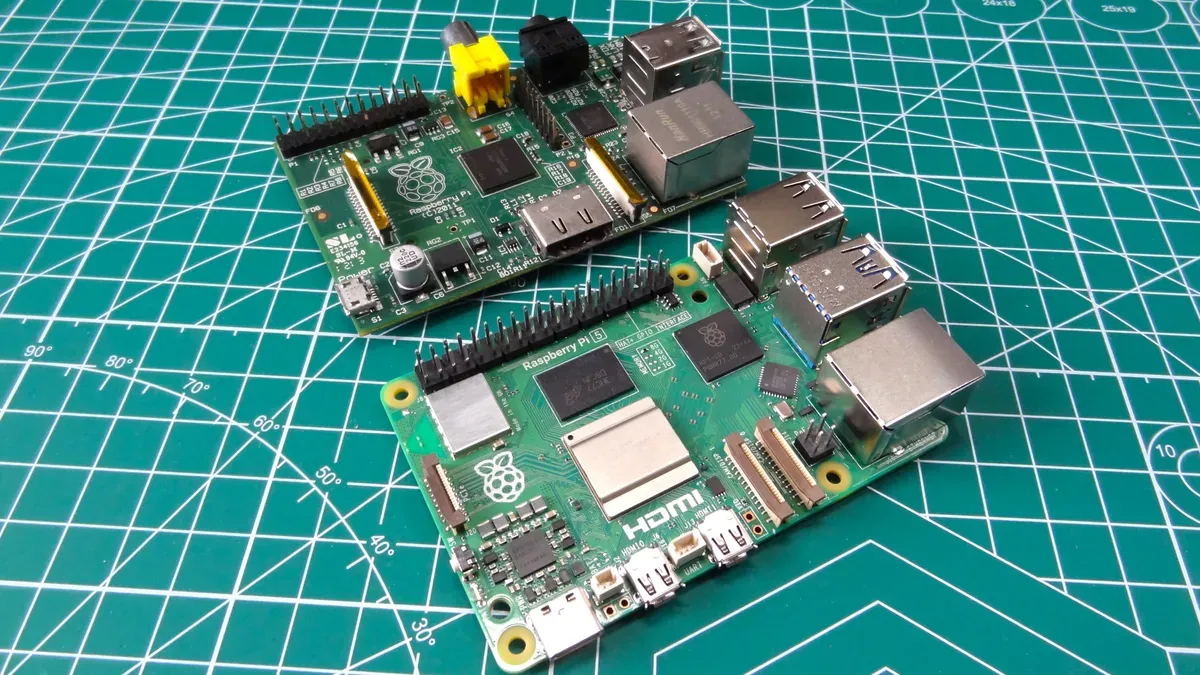

- https://www.tomshardware.com/pc-components/cpus/new-server-focused-spec-cpu-2026-benchmarking-suite-has-results-for-a-raspberry-pi-5-updated-tools-feature-more-tests-and-can-run-a-wide-range-of-systems#main

- https://www.tomshardware.com

- China pushes for 70% homegrown silicon wafer use as domestic firm ramps up 12-inch production — government seeking to localize critical chip supply chain amid A

- Inland QN450 1TB SSD Review: Maximum efficiency, minimum spend

- Rethinking AI TCO: Why Cost per Token Is the Only Metric That Matters

- OpenAI’s New GPT-5.5 Powers Codex on NVIDIA Infrastructure — and NVIDIA Is Already Putting It to Work

- NVIDIA and Google Cloud Collaborate to Advance Agentic and Physical AI

Informational only. No financial advice. Do your own research.