Memory shortages are killing off older Jetson modules while newer ones compete for the same constrained supply.


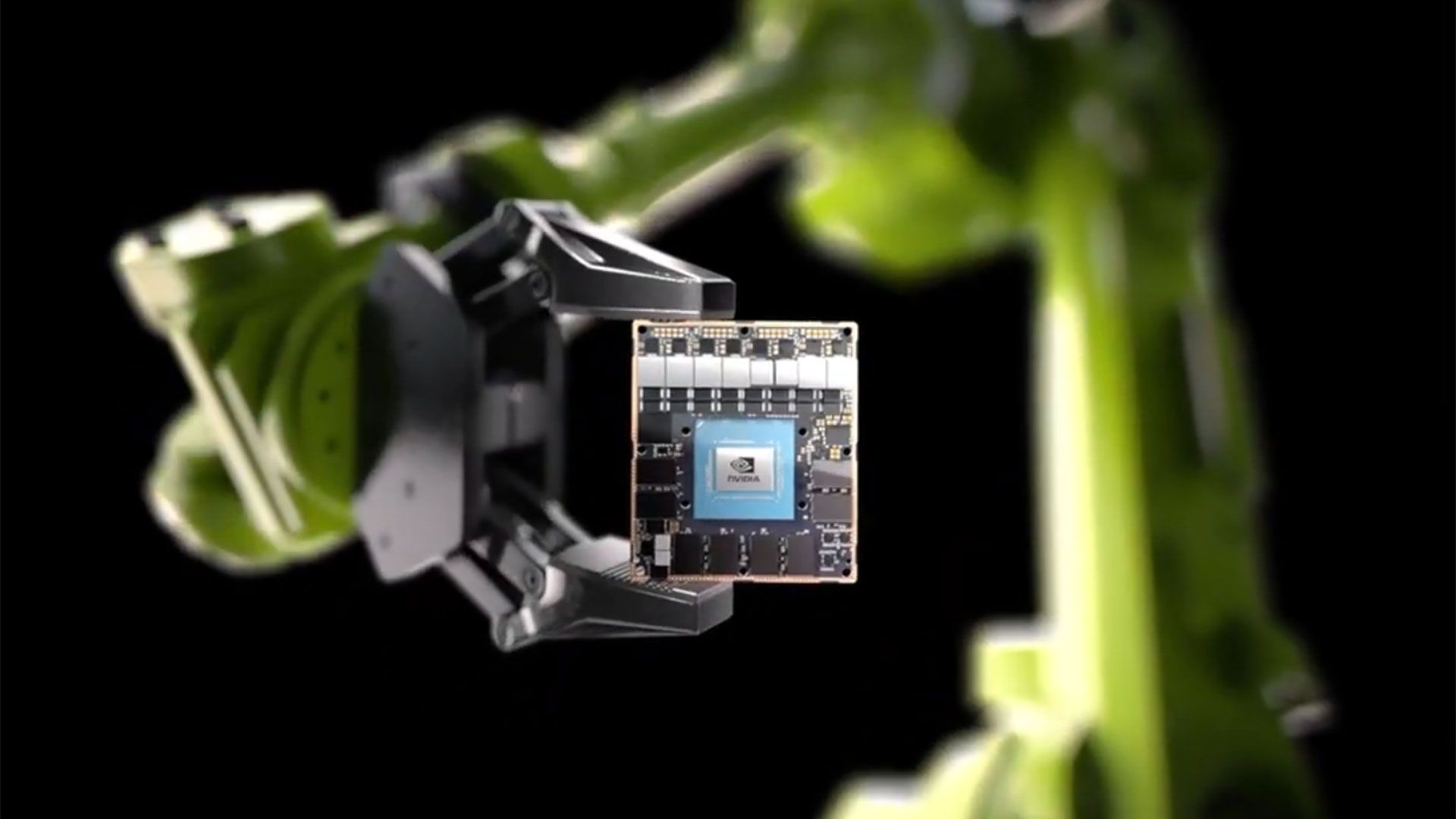

Nvidia's Jetson Thor robotics platform, released last August, is built on the Blackwell GPU architecture and fabricated on TSMC's 3nm process. The top-end T5000 module delivers 2,070 FP4 TFLOPS with 128 GB of LPDDR5X memory, while a lower-cost T4000 variant introduced at CES 2026 offers 1,200 FP4 TFLOPS with 64 GB at $1,999 per unit in volume. Both use Arm Neoverse-V3AE CPU cores and LPDDR5X sourced from Samsung or SK hynix.

These modules compete for TSMC 3nm wafer starts alongside Blackwell data center GPUs. Partners including Boston Dynamics and Amazon Robotics are building on the platform, and LG has confirmed that it is “exploring a strategic collaboration in physical AI” with Nvidia, including the robotics ecosystem, Bloomberg reported. Nvidia's DRIVE AGX Thor automotive SoC is another Blackwell-based product line competing for the same 3nm wafer capacity.

The same memory market dynamics feeding Nvidia's newer physical AI products are simultaneously killing off its older ones. Reports at the end of April indicated that Nvidia had accelerated end-of-life timelines for its Jetson TX2 and Xavier modules because LPDDR4 supply has become too constrained to maintain production. Samsung has moved away from LPDDR4 manufacturing, and AI-driven demand has redirected memory capacity toward higher-margin products.

That forces Jetson customers onto Orin or Thor modules, which use LPDDR5 or LPDDR5X from the same Asian memory suppliers whose capacity is already stretched by HBM and data center DRAM demand. Packaging is under similar pressure: TSMC's CoWoS advanced packaging for data center GPUs is growing at an 80% compound annual growth rate, TSMC's head of North American packaging told CNBC last month, and chips fabricated at TSMC's Arizona Fab 21 still ship back to Taiwan for packaging.

Nvidia committed last year to $500 billion in U.S. server manufacturing with Foxconn and Wistron, while Amkor and SPIL are building advanced packaging facilities in Arizona. But those operations are not yet at production scale, and physical AI product lines are widening the range of components sourced from Asia faster than domestic capacity can absorb them.



