Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and the latest fab tools to high-tech industry trends.

FunSurfer Seems that PCIe 8.0 will still have some connectivity issues… maybe it is best to wait for the optical fiber PCIe 9.0…

DS426 I'm guessing it'll get a naming rebrand when it goes to a physical optical bus. Any guesses?

hotaru251 I'd assume they just adopt a fiber connector that plugs into the card while allowing the connector to work without the fiber bit, thus allowing compatibility, even if at reduced speed.

Li Ken-un hotaru251 said: "a fiber connector that plugs into the card while allowing the connector to work without the fiber bit" Every connection introduces noise and signal integrity issues. The adapter itself will certainly degrade the signal.

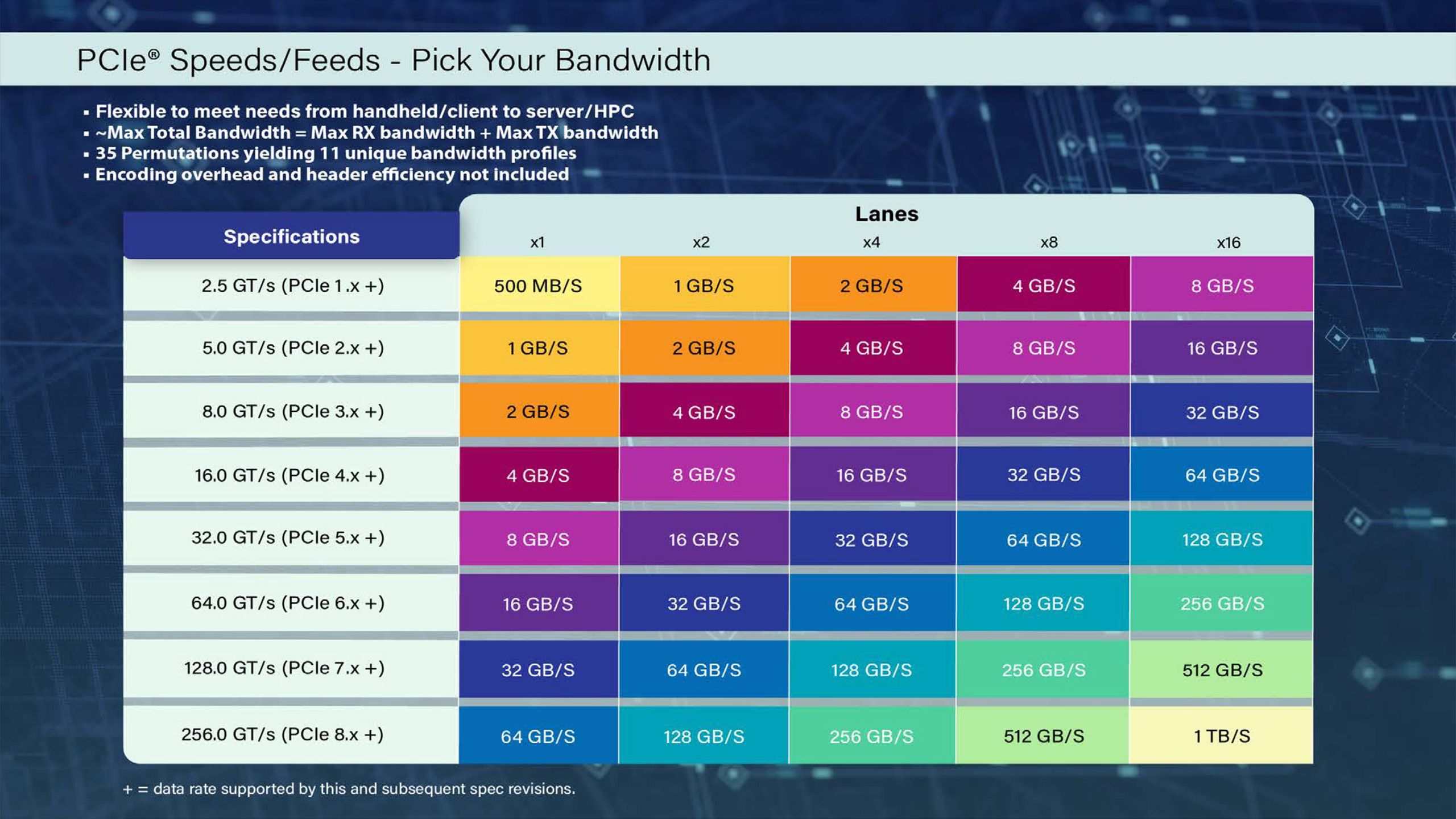

bit_user First, the chart in full-res: And now we can clearly see that they're doing that ridiculous thing of summing the bandwidth in both directions. I say it's ridiculous because most workloads are bottlenecked in only one direction, not both. Also, most modern interconnects are bidirectional (or dual-simplex), so it almost goes without saying that you get the same non-interfering bandwidth in both directions. Anyway, one aspect I find interesting is how much (unidirectional) bandwidth a single x4 link has. At PCIe 6.0 / CXL 3.0, that number (32 GB/s per direction) starts to become very interesting for using CXL DIMMs. It's starting to approach the number you get with a regular DIMM.
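To make the per-direction versus summed distinction concrete, here is a rough Python sketch. It assumes raw signaling rates only (one bit per lane per transfer, so 1 GT/s is about 1 Gbit/s) and ignores 128b/130b and FLIT encoding overhead. The DDR5-4800 DIMM (38.4 GB/s peak) is a hypothetical comparison point, and the names `GT_PER_LANE`, `per_direction_gb_s`, and `summed_gb_s` are illustrative, not from any spec or library.

```python
# Raw per-lane signaling rate (GT/s) for recent PCIe generations.
GT_PER_LANE = {4: 16, 5: 32, 6: 64, 7: 128, 8: 256}

# Hypothetical comparison point: one DDR5-4800 DIMM with a 64-bit (8-byte) data bus.
DDR5_4800_GB_S = 4800 * 8 / 1000  # 38.4 GB/s peak


def per_direction_gb_s(gen: int, lanes: int) -> float:
    """Approximate one-way bandwidth in GB/s, ignoring encoding/FLIT overhead."""
    # One transfer per lane carries one bit, so GT/s ~ Gbit/s; divide by 8 for bytes.
    return GT_PER_LANE[gen] * lanes / 8


def summed_gb_s(gen: int, lanes: int) -> float:
    """The headline-style figure: both directions added together."""
    return 2 * per_direction_gb_s(gen, lanes)


if __name__ == "__main__":
    for gen in (5, 6, 7, 8):
        x4 = per_direction_gb_s(gen, 4)
        share = x4 / DDR5_4800_GB_S
        print(f"PCIe {gen}.0 x4: {x4:.0f} GB/s per direction ({share:.0%} of a DDR5-4800 DIMM)")
    print(f"PCIe 8.0 x16: {per_direction_gb_s(8, 16):.0f} GB/s per direction, "
          f"{summed_gb_s(8, 16):.0f} GB/s summed")
```

Under these assumptions, a PCIe 6.0 x4 link comes out at 32 GB/s per direction, and the 1 TB/s headline for PCIe 8.0 x16 is simply the 512 GB/s per-direction figure counted twice.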

MobileJAD Li Ken-un said: "Every connection introduces noise and signal integrity issues. The adapter itself will certainly degrade the signal." Imagine if it were possible to just plug fiber directly into the CPU, where its PCIe controller is, and the other end into whatever you want to connect, like an NVMe device. I am, of course, being somewhat sarcastic about how crazy tech is getting now, and I understand this stuff is purely going to be in an enterprise environment, but damn, does it feel like things are being pushed towards their limits here.

bit_user MobileJAD said: "Imagine if it were possible to just plug fiber directly into the CPU where its PCIe controller is and the other end to whatever you want to connect, like an NVMe device." It's coming sooner than you think. Not to your desktop, of course, but in AI servers. Video: https://www.youtube.com/watch?v=kS8r7UcexJU More: https://www.tomshardware.com/networking/nvidia-outlines-plans-for-using-light-for-communication-between-ai-gpus-by-2026-silicon-photonics-and-co-packaged-optics-may-become-mandatory-for-next-gen-ai-data-centers

Geef Yep, pretty soon it's gonna be plugging yourself in. 🔌 (Motoko Kusanagi GIF)

Jame5 Honestly, I want desktop CPUs to have maybe 4-8 lanes of this. Then it can break out to lower-spec PCIe lanes, giving us FAR more lanes than we currently get. Imagine a CPU with 8 lanes of PCIe 8.0; that would give us the equivalent of 64 lanes of PCIe 5.0 connectivity to play with. Or 128 lanes of PCIe 4.0. I'm kind of annoyed with 20 lanes of PCIe plus however many half-quality lanes come from the PCH/southbridge.
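The lane-equivalence arithmetic works because each PCIe generation doubles the per-lane rate, so every step down doubles the lane count for the same raw bandwidth. A minimal Python sketch, assuming an ideal, hypothetical switch that fans bandwidth out with no overhead; `equivalent_lanes` is an illustrative name, not a real API.

```python
# Raw per-lane signaling rate (GT/s); each generation doubles the previous one.
GT_PER_LANE = {3: 8, 4: 16, 5: 32, 6: 64, 7: 128, 8: 256}


def equivalent_lanes(lanes: int, from_gen: int, to_gen: int) -> float:
    """Lanes of `to_gen` that match the raw bandwidth of `lanes` lanes of `from_gen`.

    Assumes an ideal, hypothetical switch that fans bandwidth out with no overhead.
    """
    return lanes * GT_PER_LANE[from_gen] / GT_PER_LANE[to_gen]


if __name__ == "__main__":
    for target in (7, 6, 5, 4):
        n = equivalent_lanes(8, 8, target)
        print(f"8 lanes of PCIe 8.0 ~ {n:.0f} lanes of PCIe {target}.0")
```

The catch is that a PCIe 5.0 device behind such a switch still links at 32 GT/s per lane; the upstream link only caps the aggregate, it does not make any single downstream device faster.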

usertests Jame5 said: "Honestly, I want desktop CPUs to have maybe 4-8 lanes of this." I won't bet on us ever seeing PCIe 7.0 in desktops, much less 8.0: https://www.tomshardware.com/pc-components/ssds/pcie-6-0-ssds-for-pcs-wont-arrive-until-2030-costs-and-complexity-mean-pcie-5-0-ssds-are-here-to-stay-for-some-time "You will not see any PCIe Gen6 until 2030," Kuo said. "PC OEMs have very little interest in PCIe 6.0 right now — they do not even want to talk about it. AMD and Intel do not want to talk about it." At 16 GT/s (PCIe 4.0), traces can reach up to 11 inches with a 28 dB loss budget, but at 64 GT/s (PCIe 6.0), this drops to 3.4 inches with a 32 dB budget, depending on PCB materials and conditions, according to an Astera Labs presentation. It sounds like PCIe 6.0 support could be brought alongside AM6 around 2030 (Zen 6 and Zen 7 will be on AM5).
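The quoted trace-length figures imply a steep rise in loss per inch at higher signaling rates. A rough Python sketch, under the simplifying assumption that the whole loss budget is spent on the PCB trace; real channels also lose budget to connectors, vias, and packages, so these numbers are only upper bounds on per-inch trace loss. The `ChannelBudget` class is hypothetical, for illustration only.

```python
from dataclasses import dataclass


@dataclass
class ChannelBudget:
    label: str
    rate_gt_s: int
    loss_budget_db: float
    max_trace_in: float

    def db_per_inch_upper_bound(self) -> float:
        # Naive average: charges the whole budget to the trace. Connectors, vias,
        # and packages also consume budget, so the trace's real loss per inch is lower.
        return self.loss_budget_db / self.max_trace_in


# Figures quoted above (Astera Labs presentation, as reported by Tom's Hardware).
BUDGETS = [
    ChannelBudget("PCIe 4.0", 16, 28.0, 11.0),
    ChannelBudget("PCIe 6.0", 64, 32.0, 3.4),
]

if __name__ == "__main__":
    for b in BUDGETS:
        print(f"{b.label} @ {b.rate_gt_s} GT/s: <= {b.db_per_inch_upper_bound():.1f} dB/inch")
    reach_ratio = BUDGETS[0].max_trace_in / BUDGETS[1].max_trace_in
    print(f"Reach shrinks by about {reach_ratio:.1f}x from PCIe 4.0 to PCIe 6.0")
```

Even with a slightly larger budget, the usable trace length at 64 GT/s is roughly a third of what it is at 16 GT/s, which is the cost and complexity problem for consumer boards.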
