Although the V100 proved much more efficient overall despite being several generations old, its idle power draw is the real drawback: it pulls 45W while doing nothing, compared to 35W on the RTX 3060. Finally, the YouTuber also tested Frigate NVR, which performed really well on the V100, better than on the RTX 3060, but consumed more power, as you'd expect.

The host's previous Frigate setup was an Intel N100-based mini PC that struggled to detect his dog at all with MobileNetV2, whereas the V100 identified it instantly. Monitoring just two cameras made the V100 pull over 100W, though; the RTX 3060 was similar in this regard, while the older N100 consumed only 26W when operating six different cameras. That marks the end of the benchmarking.

This V100 experiment turned out to be a success overall, but the virality of the original video and the fact that we're writing this article mean these bad boys are about to go up in price. So, if you're interested, make sure to grab one before it's too late; the YouTuber found it for just $100 on eBay, and the PCIe adapters for early SXM sockets are cheap enough as well. The 32GB variant of the V100 goes for $500, however.


Hassam Nasir (Contributing Writer) is a die-hard hardware enthusiast with years of experience as a tech editor and writer, focusing on detailed CPU comparisons and general hardware news. When he’s not working, you’ll find him bending tubes for his ever-evolving custom water-loop gaming rig or benchmarking the latest CPUs and GPUs just for fun.

bit_user

The article said: modded Tesla V100 SMX data center GPU runs AI LLMs and is more efficient than many modern midrange offerings in AI inference

"Modern"? The RTX 3060 launched more than 5 years ago. The RDNA 3 cards launched in 2022, although the RX 7800 XT got delayed for a year, while AMD waited for inventories of RDNA 2 cards to draw down.

The real value of V100 is actually in their fp64 performance, which you still cannot match with consumer hardware. IMO, it's a "waste" to use them for AI, which they're not particularly good at and can easily be surpassed by something just a little newer or higher-end than what the subject tried.

BTW, the V100's driver is now in legacy support mode, which means that it won't support newer versions of CUDA that newer versions of popular deep learning frameworks will eventually start requiring. Anyone buying a V100 should go in with eyes open that your window for using one with up-to-date software is somewhat limited.

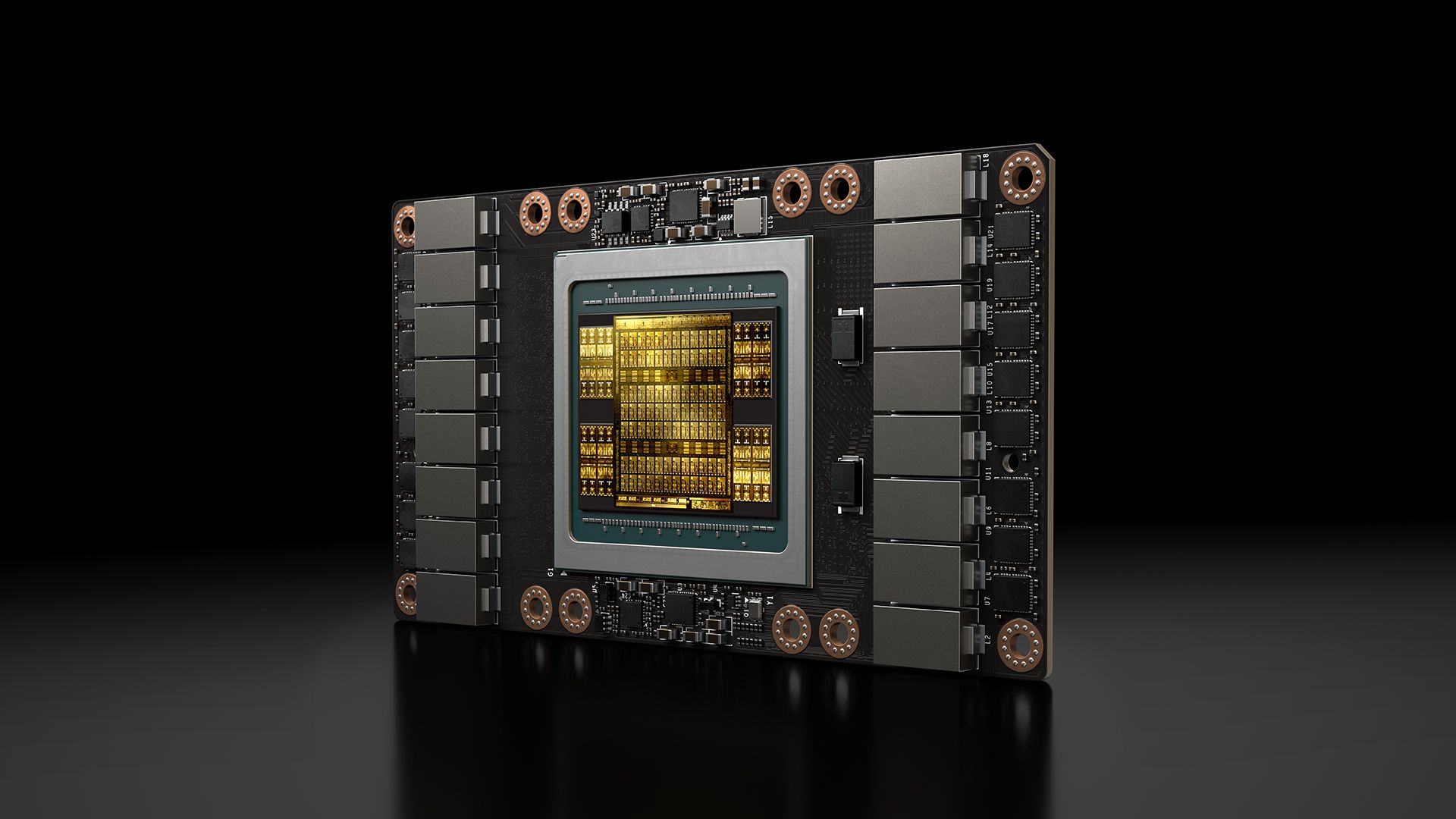

Fox2Fox2

Admin said: Turns out, Nvidia's older Turing-era V100 AI GPU is still pretty capable today, even with just 16GB of VRAM. A YouTuber got his hands on the SMX variant for just $100, converted it to a PCIe x16 interface for another $100 with an adapter, and got some pretty impressive results across AI inference and NVR benchmarks. $200 'socketed' Nvidia AI GPU for servers hacked into a PCIe card with custom PCB and 3D-printed cooling — modded Tesla V100 SMX data center GPU ru…

Minor correction to both you and the article: it's an SXM2 mezzanine connector. SXM stands for Server PCI Express Module, 2nd generation. Usually these plug in and sit on the main board (or a carrier) almost like a CPU or a RAM stick.

The actual Volta is pretty old at this point. It launched, iirc, right around the eve of the Transformer paper (Attention Is All You Need), like 2017/2018. It quickly became apparent that the market needed GPGPUs with significantly more VRAM than 32GB max per card and better matmul capabilities, causing Nvidia to rush multiple options to market in the coming years. So you had significant buy-in for these cards from data centers, only for them to be replaced as soon as they were bought. That flooded the market with these and cratered the price to sub-$1,000 for the 32GB SXM2 model. In the following few years you saw Ampere get rushed out; iirc the actual release of the A series was fairly staggered, and there was an intergenerational increase in VRAM until Hopper replaced them.

Last bit, another technical nitpick: Turing refers to the gaming and professional cards that came out in 2018. Volta is technically its own thing.

gaspoweredcat

This isn't news at all, the v100 has always been there and seemed good value as the hbm made it crazy fast, I spent ages experimenting with the cmp 100-210 which is the mining version of the v100, I got a load at like £90 per card, fat with one card but start increasing and the cracks show.

Not only that but trying to use vllm or sglang is going to land you untold headaches as they lean fairly heavily on ampere based stuff.

It may be useful for some but it's not a game changer for local LLM I'm afraid.

bit_user

gaspoweredcat said: fat with one card but start increasing and the cracks show.

What do you mean by this?

gaspoweredcat

typo, was supposed to be "fast" and the meaning is teat with the CMPs (due to their restricted pcie bandwidth) and pretty quickly with the v100s youll hit the ceiling of the transfer speed between cards and it gets SLOW, 1x cmp100-210 is crazy fast, 2 is sort of accepable-ish, 3 and things start crawling, realistically for larger multi card setups you need nvlink etc really, its passable with the v100s granted you dont mind being stuck on llama.cpp and having no ampere features like FAv2 etc

bit_user

gaspoweredcat said: typo, was supposed to be "fast" and the meaning is teat with the CMPs (due to their restricted pcie bandwidth) and pretty quickly with the v100s youll hit the ceiling of the transfer speed between cards and it gets SLOW, 1x cmp100-210 is crazy fast, 2 is sort of accepable-ish, 3 and things start crawling, realistically for larger multi card setups you need nvlink etc really, its passable with the v100s granted you dont mind being stuck on llama.cpp and having no ampere features like FAv2 etc

Cool. Thanks! Did you try any fp64 stuff?

bit_user

BTW, anyone who wants a video output and integrated fan can get a GV100. That's the workstation version, which currently seems to run about $1200 and up, for 32 GB. When they were new, I think they cost about $10k.

And, there's the Titan V, which has only 12 GB and just 3/4ths of the V100's memory bandwidth, but at least the compute units on the chip aren't nerfed.
