Note that the video Intel Foundry posted on X shows two conceptual designs: a 'mid-scale' one featuring four compute tiles and 12 HBM stacks, and an 'extreme' one with 16 tiles and 24 HBM5 stacks, which our story focuses on. Even the mid-scale design is fairly advanced by today's standards, but Intel can produce it today.

As for the extreme concept, it may emerge toward the end of the decade, once Intel has perfected not only its Foveros Direct 3D packaging technology but also its 18A and 14A production nodes. Being able to produce such extreme packages toward the end of the decade will put Intel on par with TSMC, which plans something similar and expects at least some customers to use its wafer-size integration offerings around 2027 to 2028.

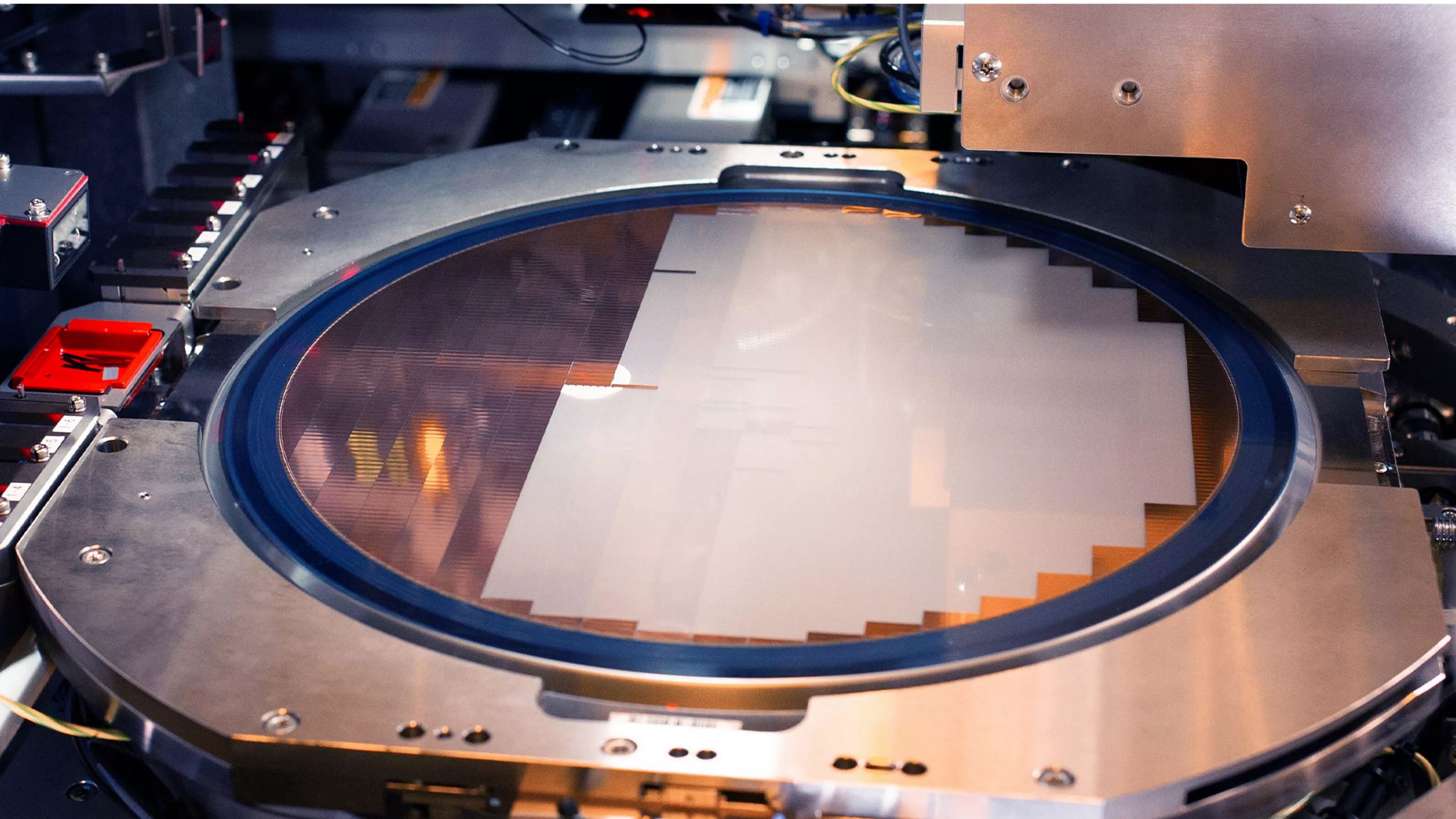

Making the extreme design a reality in just a few years is a significant challenge for Intel. With minimal tolerances to work with, it must ensure the components do not warp when attached to motherboards and do not deform from overheating after prolonged use. Beyond that, Intel (and the whole industry) will need to learn how to feed and cool monstrous processor designs the size of a smartphone (up to 10,296 mm^2) that will sit in an even larger package, but that's a different story.
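To put the 10,296 mm^2 figure in perspective, here is a quick back-of-the-envelope sketch in Python. It assumes the commonly cited single-exposure reticle field of 26 mm by 33 mm (858 mm^2), a number that does not come from the article itself; the constant and function names are purely illustrative.

```python
# Assumption (not from the article): one lithography exposure field
# (the "reticle limit") measures 26 mm x 33 mm.
RETICLE_WIDTH_MM = 26
RETICLE_HEIGHT_MM = 33
RETICLE_AREA_MM2 = RETICLE_WIDTH_MM * RETICLE_HEIGHT_MM  # 858 mm^2

# Figure quoted in the article for the 'extreme' concept.
PACKAGE_SILICON_MM2 = 10_296


def reticle_multiples(area_mm2: float) -> float:
    """Return how many reticle-sized fields fit into the given area."""
    return area_mm2 / RETICLE_AREA_MM2


if __name__ == "__main__":
    print(reticle_multiples(PACKAGE_SILICON_MM2))  # 12.0
```

Under that assumption, the quoted area works out to exactly 12 reticle-sized fields, which is why a design like this has to be stitched together from many tiles rather than built as a single die.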


Anton Shilov is a contributing writer at Tom’s Hardware. Over the past couple of decades, he has covered everything from CPUs and GPUs to supercomputers and from modern process technologies and latest fab tools to high-tech industry trends.

TechieTwo:

Intel is just trolling for customers when they can't even get their act together on their own CPUs.

TerryLaze:

> TechieTwo said: when they can't even get their act together on their own CPUs.

This is about (AI) GPUs…. CPUs, and your opinion on them, are completely irrelevant to this article. Go ahead and write a few paragraphs on how terrible the GPUs are, maybe that will convince multi billion international companies to not choose intel fabs…….

Intel was the first company to build an explicitly disaggregated chiplet design, comprising 47 chiplets, with its Ponte Vecchio compute GPU for AI and HPC applications. This product still holds the record for the most populous multi-tile design,

bit_user:

> The article said: Making the extreme design a reality in just a few years is a significant challenge for Intel, as it must ensure the components do not warp when attached to motherboards and do not deform even with minimal tolerances due to overheating after prolonged use. Beyond that, Intel (and the whole industry) will need to learn how to feed and cool monstrous processor designs the size of a smartphone

This. Yield and defect management are going to be key, for something like this. I think you basically have to anticipate and handle some of the dies (or their joints) failing, in the field. Otherwise, the field failure rate will likely be way too high.

bit_user:

> TerryLaze said: Go ahead and write a few paragraphs on how terrible the GPUs are, maybe that will convince multi billion international companies to not choose intel fabs……. Intel was the first company to build an explicitly disaggregated chiplet design, comprising 47 chiplets, with its Ponte Vecchio compute GPU for AI and HPC applications. This product still holds the record for the most populous multi-tile design,

Ponte Vecchio was so late that Intel had to pay a penalty for it delaying the big supercomputer that was its marquee design win. Worse, it reportedly had yields of less than 10%, which goes to one of my points in the prior post. Granted, that's because they had no ability to test the individual chiplets before assembly. But, the unit highlighted in the article has way more complexity, is made on even smaller nodes, and will likely be pushed way harder than Ponte Vecchio ever was. So, field failures of individual chips & stacks are going to be a live issue, in products of this sort.

thestryker:

> bit_user said: Ponte Vecchio was so late that Intel had to pay a penalty for it delaying the big supercomputer that was its marquee design win. Worse, it reportedly had yields of less than 10%, which goes to one of my points in the prior post. Granted, that's because they had no ability to test the individual chiplets before assembly. But, the unit highlighted in the article has way more complexity, is made on even smaller nodes, and will likely be pushed way harder than Ponte Vecchio ever was. So, field failures of individual chips & stacks are going to be a live issue, in products of this sort.

I don't think it'd be crazy to say that Intel learned more about packaging from Ponte Vecchio than everything else they've done. There haven't been any reports regarding failure rates so I doubt there's anything beyond standard expectations. I don't believe they ended up clocking them as high as planned though which is likely due to improving long term reliability.

From what we've seen so far with interconnected chips the interconnects themselves don't seem to be a failure point. Of course the chips being much larger does mean there's just flat out more to fail. I'd like to think that anyone leveraging these huge interconnected designs would build in a way to keep operating in a degraded state whenever possible to mitigate failures.

bit_user:

> thestryker said: From what we've seen so far with interconnected chips the interconnects themselves don't seem to be a failure point. Of course the chips being much larger does mean there's just flat out more to fail.

I think the launch problems with Blackwell B200 are a good reminder of the sorts of things that can happen with advanced packaging and large dies:

"Nvidia's Blackwell B100 and B200 GPUs link their two chiplets using TSMC's CoWoS-L packaging technology, which relies on an RDL interposer equipped with local silicon interconnect (LSI) bridges (to enable data transfer rates of about 10 TB/s). The placement of these bridges is critical. However, a supposed mismatch in the thermal expansion properties between the GPU chiplets, LSI bridges, RDL interposer, and motherboard substrate caused the system to warp and fail, and Nvidia reportedly had to modify the top metal layers and bumps of the GPU silicon to enhance production yields."

Source: https://www.tomshardware.com/tech-industry/artificial-intelligence/nvidias-jensen-huang-admits-ai-chip-design-flaw-was-100-percent-nvidias-fault-tsmc-not-to-blame-now-fixed-blackwell-chips-are-in-production

thestryker:

> bit_user said: I think the launch problems with Blackwell B200 are a good reminder of the sorts of things that can happen with advanced packaging and large dies

Yeah I wondered if this was a cost of pushing to get hardware out so quickly. It was a problem that really should have been caught during a normal QA cycle.

bit_user:

> thestryker said: Yeah I wondered if this was a cost of pushing to get hardware out so quickly. It was a problem that really should have been caught during a normal QA cycle.

That was caught before they did the volume ramp. It was the reason for the launch being delayed, I think because you don't expect to have such major problems arising and it took more time to address than the typical issues you'd fix with something like a metal layer change.

TerryLaze:

> bit_user said: Ponte Vecchio was so late that Intel had to pay a penalty for it delaying the big supercomputer that was its marquee design win. Worse, it reportedly had yields of less than 10%, which goes to one of my points in the prior post. Granted, that's because they had no ability to test the individual chiplets before assembly. But, the unit highlighted in the article has way more complexity, is made on even smaller nodes, and will likely be pushed way harder than Ponte Vecchio ever was. So, field failures of individual chips & stacks are going to be a live issue, in products of this sort.

Hey, you did it! With so much undisputable evidence potential customers aren't going to do any actual wafer yield tests on the actual node with any actual designs and will instead just not use intel…Great job!

bit_user:

> TerryLaze said: Great job!

Thank you!


