Nvidia refutes reports of HBM4 mass production delay, production 'on track' for the second half of 2025

Looking at the backsides of HBM4 and HBM3E, the difference between them is obvious even without counting pins. The two share the same dimensions and footprint, so HBM4's backside shows a much denser and more uniformly packed bump field, with noticeably higher bump density across the entire footprint. This is not particularly surprising: the jump from a 1,024-bit to a 2,048-bit I/O requires substantially more signal bumps, as well as additional power and ground bumps to support higher bandwidth and tighter signal integrity margins. By contrast, HBM3E's backside has a sparser bump layout with more visible separation between regions, which is consistent with its 1,024-bit interface and lower aggregate I/O demand.

Meanwhile, HBM4's power and ground contacts also look visibly different from those of HBM3E. HBM4 appears to allocate a larger fraction of its backside area to power and ground bumps, and they are arranged more evenly across the package, which could reduce noise and IR drop at data rates as high as 8 GT/s per the standard, or 10 GT/s per the listed capability.
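To put those data rates in perspective, theoretical peak bandwidth per stack follows from the interface width multiplied by the per-pin transfer rate. The short sketch below is a back-of-the-envelope calculation rather than a vendor figure: the function name `peak_bandwidth_gbs` is our own, and it assumes one bit per pin per transfer with no protocol overhead.

```python
def peak_bandwidth_gbs(interface_bits: int, rate_gts: float) -> float:
    """Theoretical peak bandwidth of one stack in GB/s.

    Assumes every data pin moves one bit per transfer and ignores
    any protocol or refresh overhead (an idealized upper bound).
    """
    # bits per transfer * giga-transfers per second / 8 bits per byte
    return interface_bits * rate_gts / 8


# 2,048-bit HBM4 interface at the two data rates mentioned above
hbm4_standard = peak_bandwidth_gbs(2048, 8.0)
hbm4_listed = peak_bandwidth_gbs(2048, 10.0)

print(f"HBM4 at 8 GT/s:  {hbm4_standard:.0f} GB/s")
print(f"HBM4 at 10 GT/s: {hbm4_listed:.0f} GB/s")
```

Under these assumptions, a 2,048-bit stack peaks at about 2 TB/s at 8 GT/s and about 2.5 TB/s at 10 GT/s. A 1,024-bit interface running at the same per-pin rate would deliver half of that.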

Admittedly, this is speculation based purely on the observation that HBM3E features fewer power bumps and shows clearer zoning between I/O and power regions. Still, even without precise specs, the backside alone shows that HBM4 is designed for much higher I/O bandwidth and power delivery requirements.

In any case, these HBM4 modules use custom DRAM dies manufactured on the proven 1b-nm (5th-generation 10-nm-class) process, which pairs a large DRAM die with low defect density, reduced variability, and, ultimately, high yield. That should make them cheaper to produce, though it is hard to estimate how the lower cost will translate to the end user.



Reference reading

- https://www.tomshardware.com/tech-industry/SPONSORED_LINK_URL

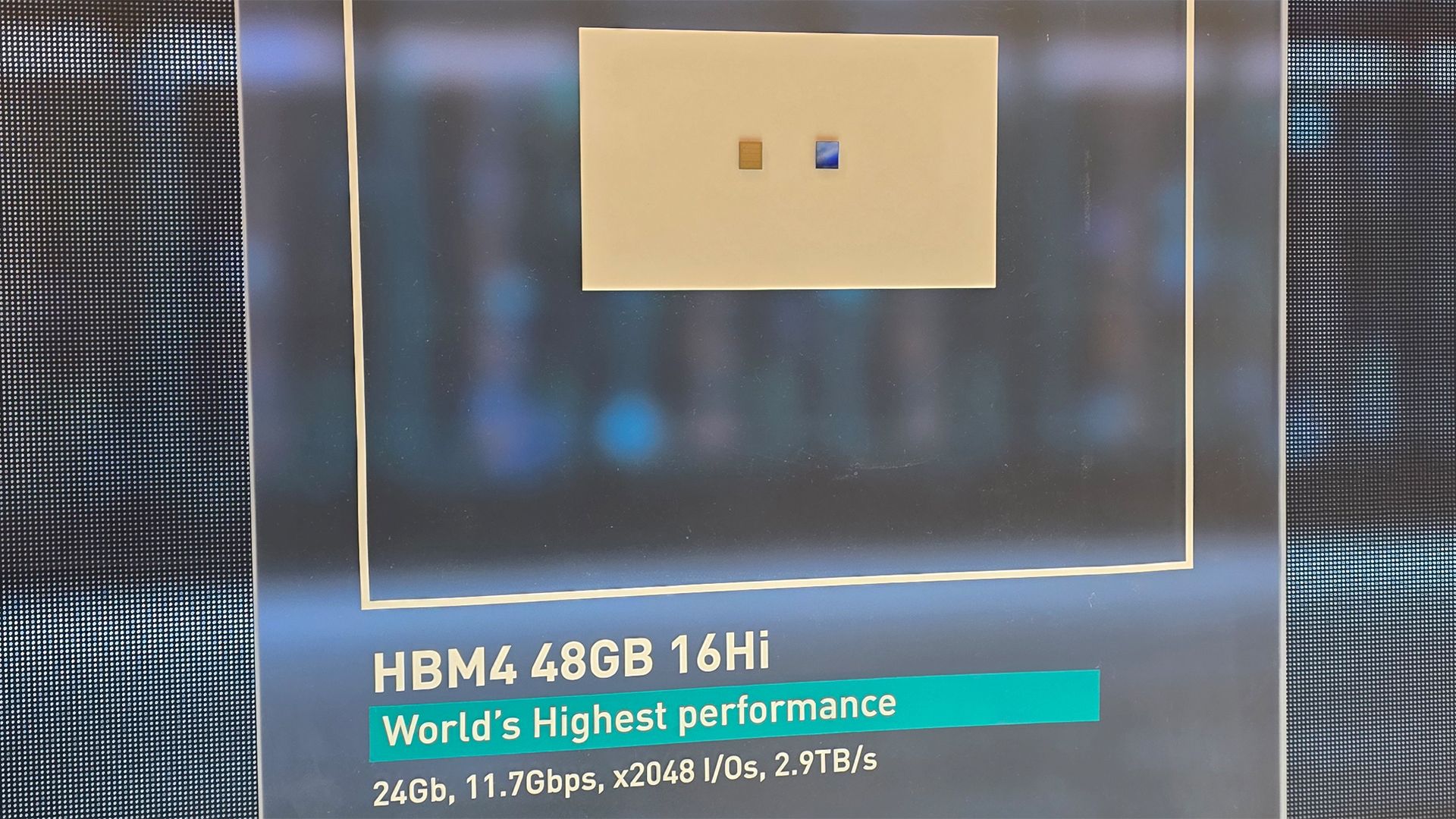

- https://www.tomshardware.com/tech-industry/sk-hynix-shows-16-hi-hbm4-memory-for-ai-accelerators-48-gb-at-10-gt-s-over-a-2-048-interface#main

- https://www.tomshardware.com

- NVIDIA Brings GeForce RTX Gaming to More Devices With New GeForce NOW Apps for Linux PC and Amazon Fire TV

- Asus SFF-ready RTX 5070 falls below MSRP — deal saves $70 and gives you a GPU for small PC builds

- AI Copilot Keeps Berkeley’s X-Ray Particle Accelerator on Track

- NVIDIA RTX Accelerates 4K AI Video Generation on PC With LTX-2 and ComfyUI Upgrades

- Trump says that AI tech companies need to ‘pay their own way’ when it comes to their electricity consumption — says major changes are coming to ensure Americans

Informational only. No financial advice. Do your own research.